Many enterprises find their initial foray into the world of iPaaS simple and straightforward, either because they start with greenfield initiatives or because their early projects involve relatively small numbers of endpoints, environments, or both.

Such projects can be handled with a fairly simple approach, shown in the diagram below:

In such a system you deploy an API gateway, ESB or iPaaS and use adapters to connect or proxy services from various systems – file or database adapters, cloud or SaaS adapters, etc.

With connectivity established, the ESB or iPaaS handles the transformation of messages and the orchestration of interactions (typically over REST, since developers know it so well).

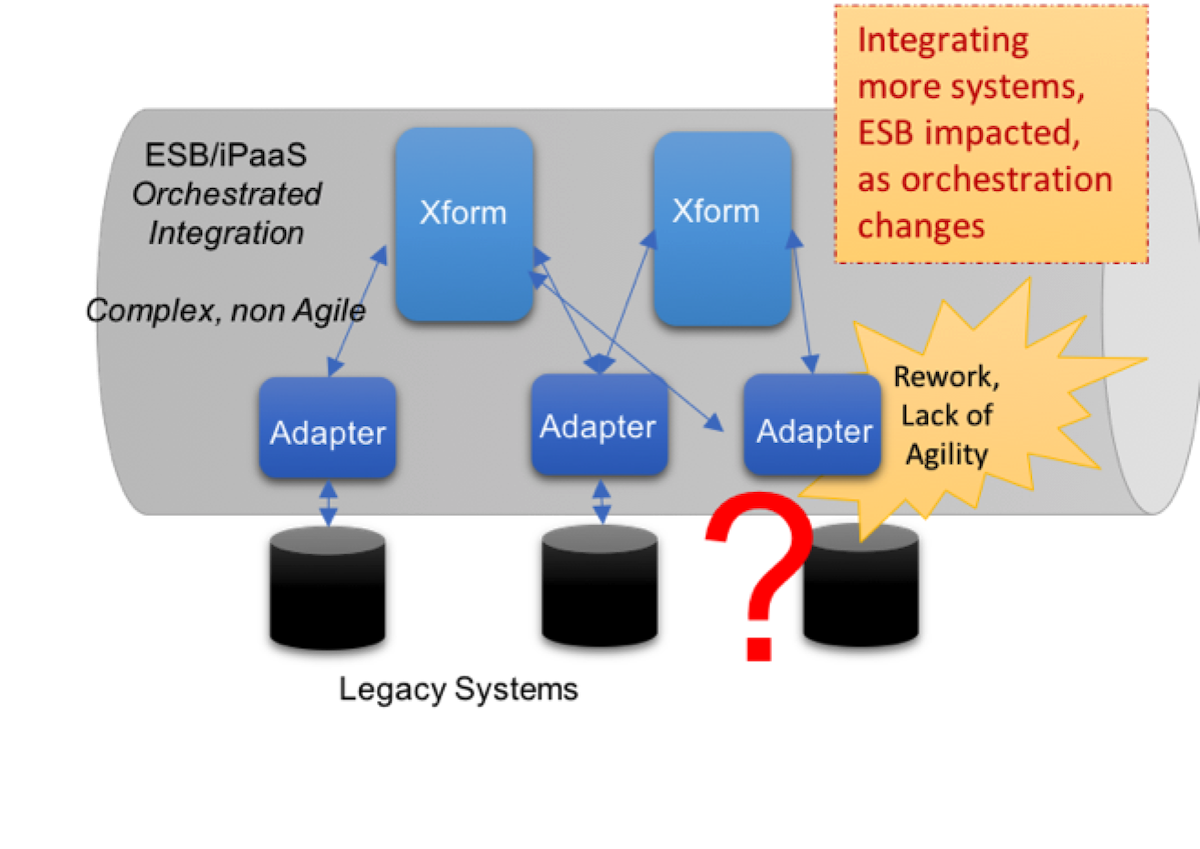

This simple, point-to-point, tightly-coupled RESTful architecture can work at small scale, but it doesn’t scale well and it doesn’t offer the kind of agility, robustness and response time that enterprises and users require and expect today. As more systems get added, point-to-point integration gets disproportionately more complex: if every system may need to talk to every other, the number of connections grows roughly with the square of the number of systems. This is especially true if the same messages or API calls need to be sent to multiple downstream systems.
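To make the growth in connections concrete, here is a minimal sketch that compares a worst-case full mesh of direct links with a topology in which each system connects once to a shared intermediary. The function names are illustrative, and the full mesh is an assumption; real landscapes are usually somewhere in between.

```python
def point_to_point_links(n: int) -> int:
    """Direct links needed if each of n systems talks to every other one."""
    return n * (n - 1) // 2


def brokered_links(n: int) -> int:
    """Links needed if each system connects once to a shared intermediary."""
    return n


if __name__ == "__main__":
    for n in (5, 10, 50):
        print(f"{n} systems: {point_to_point_links(n)} direct links, "
              f"{brokered_links(n)} brokered links")
```

With 10 systems the full mesh already needs 45 links, against 10 when every system connects to one intermediary.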

The 7 challenges of scaling an iPaaS when relying only on REST:

1. Maintaining high availability and performance

For every integration point, producer and consumer applications need to be connected directly, i.e., aware of each other and their respective locations or addresses. Because of this coupling, the performance and availability of both producers and consumers can be negatively impacted by any bug, change or outage.

2. Handling of bursts and slow consumers

If a downstream application is disconnected, or simply unable to accept and process messages as quickly as they are being sent, a backlog builds up in the iPaaS. That backlog can put back-pressure on sending applications, cause lost messages, and potentially even cause the platform to slow down or crash.
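The effect of a slow consumer can be sketched with a small simulation. This is a toy model under stated assumptions, not a description of any particular iPaaS: rates are fixed per second, the platform holds at most `buffer_limit` messages, and anything beyond that limit is counted as dropped. All names and numbers are hypothetical.

```python
def backlog_over_time(send_rate, consume_rate, seconds, buffer_limit):
    """Simulate a buffer fed faster than it is drained.

    Returns (history, dropped): the backlog at the end of each second and
    the total number of messages that did not fit in the buffer.
    """
    backlog = 0
    dropped = 0
    history = []
    for _ in range(seconds):
        backlog += send_rate                   # messages arriving this second
        backlog -= min(backlog, consume_rate)  # consumer drains what it can
        if backlog > buffer_limit:             # buffer is full: overflow is lost
            dropped += backlog - buffer_limit
            backlog = buffer_limit
        history.append(backlog)
    return history, dropped


if __name__ == "__main__":
    history, dropped = backlog_over_time(
        send_rate=100, consume_rate=60, seconds=5, buffer_limit=150
    )
    print(history, dropped)
```

In this run the backlog climbs by 40 messages per second until it hits the limit, after which messages start being lost.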

3. Guaranteeing the delivery of messages

When you need guaranteed, lossless data transfer, a RESTful approach puts the burden of detecting non-delivery and attempting redelivery on the publishing application. The producer has to ensure delivery to all downstream consumers, and store undelivered messages until they can be delivered. This makes the coupling worse and often leads to data loss.
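The bookkeeping this pushes onto the producer can be sketched as follows. This is a simplified illustration, not a real HTTP client: each consumer is modeled as a hypothetical callable that returns `True` when it accepts a message, and the class and method names are made up for the example.

```python
from collections import defaultdict, deque


class RestProducer:
    """A producer that must track delivery to every consumer itself."""

    def __init__(self, consumers):
        # consumers: name -> callable(message) that returns True on success.
        # (Assumption: stands in for a REST call and its status check.)
        self.consumers = consumers
        # Per-consumer store of undelivered messages. It lives in memory,
        # so it is lost if the producer itself crashes.
        self.pending = defaultdict(deque)

    def publish(self, message):
        for name in self.consumers:
            self.pending[name].append(message)
        self.flush()

    def flush(self):
        """Try to deliver pending messages in order; call again to retry."""
        for name, send in self.consumers.items():
            queue = self.pending[name]
            while queue:
                if not send(queue[0]):
                    break  # consumer unavailable: keep order, retry later
                queue.popleft()
```

Even in this small form, the producer has to know every consumer by name, hold their undelivered messages, and decide when to retry.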

4. Ensuring architectural agility

With a purely RESTful approach, every time a new integration point is added the iPaaS flows have to be rewired to include it. All performance characteristics of the new integration point are suddenly imposed on the existing flow, including latency and the potential for slowdowns or downtime. Such high-impact integration can slow time to market, as rolling out new features and services makes the system less responsive and robust.

5. Governing your system

Point-to-point integrations are also difficult to govern, and they make it hard to run parallel versions in production (for example, blue-green deployments or A/B testing).

6. Incorporating AI services

More and more enterprises want to integrate AI and machine learning services into their decision support and business process automation initiatives. Tying your systems together with tightly-coupled point-to-point RESTful connections makes it nearly impossible to do so.

7. Integrating on-prem assets

Most cloud migration efforts happen gradually, requiring systems now running in private or public clouds to interact with other systems still running in on-prem data centers. A pure REST/API-based approach poses the same challenges as explained above, but since you’re dealing with a WAN, there is a much greater risk of network issues causing problems.

Fortunately, the challenges of scaling an iPaaS are easily addressed by pairing your iPaaS of choice with a robust, enterprise-grade, cloud-native event broker that can handle the sophisticated, guaranteed distribution of any number of events, between any number of endpoints, across any environment.
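The decoupling an event broker provides can be illustrated with a deliberately tiny in-memory sketch. It is not the API of any real broker: the class, its methods, and the topic name are hypothetical, and it omits persistence, acknowledgments, and networking. It only shows that producers publish to a topic without knowing who consumes, and that each subscriber gets its own queue to drain at its own pace.

```python
from collections import defaultdict, deque


class InMemoryBroker:
    """Toy publish/subscribe broker with one queue per subscriber."""

    def __init__(self):
        # topic -> {subscriber name: queue of events not yet consumed}
        self.queues = defaultdict(dict)

    def subscribe(self, topic, subscriber):
        # Adding a subscriber needs no change on the publishing side.
        self.queues[topic].setdefault(subscriber, deque())

    def publish(self, topic, event):
        # The producer hands the event over once; fan-out happens here.
        for queue in self.queues[topic].values():
            queue.append(event)

    def poll(self, topic, subscriber):
        """Return the next event for this subscriber, or None if none wait."""
        queue = self.queues[topic][subscriber]
        return queue.popleft() if queue else None


if __name__ == "__main__":
    broker = InMemoryBroker()
    broker.subscribe("orders", "billing")
    broker.publish("orders", {"id": 1})
    broker.subscribe("orders", "analytics")  # new consumer, producer untouched
    broker.publish("orders", {"id": 2})
    print(broker.poll("orders", "billing"))
    print(broker.poll("orders", "analytics"))
```

A slow or offline subscriber only lets its own queue grow; the producer and the other subscribers are unaffected, which is the property the challenges above are missing.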

Four Factors to Consider When Choosing Between a Built-in vs. 3rd-Party Event Broker for iPaaS

Is it better to use the event broker provided by the iPaaS solution itself or a 3rd-party event broker? Here are four things to consider as you decide.


Solace
