
As the backbone of supply chain management processes, your IT infrastructure can enable or constrain activities that affect your customers’ experience and your bottom line. Moving forward with digital transformation in supply chain management often comes down to three things: cost, customer experience, and agility. All of these things are impacted significantly by real-time communication and the accessibility of real-time data – and it’s where things are headed as we begin this new decade.

Many companies have already event-enabled their supply chain in an effort to compete against giants like Amazon, Unilever, Alibaba, and Shopify; to stay on top of international shipments; to accurately control pricing; and to take advantage of new tools like AI, IoT and streaming analytics to predict and avoid shipping delays. These companies have embraced digital transformation in supply chain management to optimize operational efficiency and generate better customer experiences – recognizing that their IT infrastructure needed to be able to adapt to real-time data.


Supply Chain and Traditional Integration Solutions

It’s no surprise that sea-going shipments are often delayed by weather, mechanical problems, or delays at ports. It’s also no surprise that each delay has a ripple effect down the supply chain. For example, suppose a hurricane delays a shipment that was due to arrive at port this week. You’d have to reschedule the transport trucks/lorries booked to collect the container so they don’t just sit there, and delay the production job that was going to use the shipment.

Traditional integration solutions would use polling and point-to-point integration to manage this part of the supply chain – you would poll the vessel management service (often a SaaS such as ClearMetal or OceanIT) to check the status of all shipments. If there was nothing to report, the return messages would indicate as much. You would also have to stitch together the transport manager application (e.g., Oracle OTM) and the port’s scheduling application with point-to-point integrations, perhaps using a REST API. If you wanted to add the production management or enterprise resource planning (ERP) system, you would have to add another point-to-point integration.

Event-driven architecture is a different way of thinking about distributed systems like supply chain management systems. It doesn’t come without its own difficulties, but in return you get huge benefits in scalability, flexibility, and agility that you would not get with traditional integration solutions.

If the IT infrastructure for the supply chain management example above were built with event-driven architecture, the delay would be “published” to an event broker with a topic that indicates what it’s about, and the event broker would deliver the event to every application that has “subscribed” to receive events with that topic. This leads to a loosely coupled architecture, where applications are not directly tied to one another, and generally not even aware of each other.
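The publish and subscribe flow described above can be sketched with a toy, in-process broker. This is only an illustration of the pattern, not a real event broker: the class name `InMemoryBroker`, the topic string `shipping/delayed`, and the payload fields are all hypothetical, and topics are matched exactly for simplicity.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[str, dict], None]


class InMemoryBroker:
    """Toy stand-in for an event broker: exact-match topics, in-process delivery."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register a handler that is called for every event on the topic."""
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: dict) -> int:
        """Deliver the event to every subscriber of the topic; return the delivery count."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(topic, payload)
        return len(handlers)


broker = InMemoryBroker()
received = []

# Two independent applications register interest; neither knows about the other.
broker.subscribe("shipping/delayed", lambda t, p: received.append(("transport", p["container"])))
broker.subscribe("shipping/delayed", lambda t, p: received.append(("production", p["container"])))

# The vessel management side publishes once and does not wait for a reply.
delivered = broker.publish("shipping/delayed", {"container": "C-001", "reason": "hurricane"})
```

The publisher only ever talks to the broker, so adding a third subscriber would not require touching the publishing code.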

Why Loose Coupling is Beneficial in Supply Chain Management

In event-driven architecture, events are sent without the expectation of a reply. Why is this a good idea for IT infrastructure in supply chain management? Well, it means there is no dependency between the sender and receiver.

In the example discussed earlier, the ship management application doesn’t know what other applications are listening to the events regarding delayed shipments. So you can add an ERP application to the mix by having it subscribe to events about delays without modifying or even notifying the ship management systems. This makes it much quicker and easier to design, add, and deploy new applications that do something new with those same events. This decoupling can drive better business decisions, because you’re not handcuffed by what’s already in place, or worried about adding or changing applications because it will mean writing new code or modifying existing systems.

What’s more, you don’t have to wait for the polling interval to expire before realizing you need to do something, so processing and taking action can happen in real time. No more wasting network bandwidth and processing power on unchanged data, and no more risk of missing a production/shipping window due to infrequent polling.
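To make the cost of polling concrete, here is a small arithmetic sketch. It assumes polls are issued at fixed intervals starting at time zero; the function names, the 60-minute interval, and the 125-minute event time are illustrative assumptions rather than figures from any real system.

```python
import math


def polling_detection_delay(event_time_min: float, poll_interval_min: float) -> float:
    """Minutes between a change happening and the next scheduled poll noticing it."""
    next_poll = math.ceil(event_time_min / poll_interval_min) * poll_interval_min
    return next_poll - event_time_min


def polls_until_detection(event_time_min: float, poll_interval_min: float) -> int:
    """Polls issued (starting at t=0) up to and including the one that sees the change."""
    return math.ceil(event_time_min / poll_interval_min) + 1


# A delay is recorded 125 minutes in; the consumer polls every 60 minutes.
delay = polling_detection_delay(125, 60)       # noticed at t=180
polls = polls_until_detection(125, 60)         # polls at t=0, 60, 120, 180
wasted_polls = polls - 1                       # every poll before the last saw no change
```

With a pushed event, the consumer would instead be notified as soon as the broker delivers the event, and none of the empty polls would be needed.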

Events in Motion: An Event Broker’s Responsibility

In event-driven architecture, an event broker, coupled with topic hierarchy best practices, takes on the role of ensuring an event is received, filtered, and routed to whatever applications are interested – no matter where they are located (cloud, on-premises). All applications connect to the event broker, and the broker takes events from the emitter and delivers them to the listener.

Topic Routing and Filtering

If an application needs to get an event, it registers its interest by subscribing to the topic, following the publish-subscribe messaging pattern. The subscription can use wildcards to select which events it should receive.

By using topic taxonomy and subscriptions, you can fulfil two rules of event-driven architecture:

- A subscriber should subscribe only to the events it needs. The subscription should do the filtering, not the business logic.

- A publisher should only send an event once, to one topic.

A Supply Chain Management Example

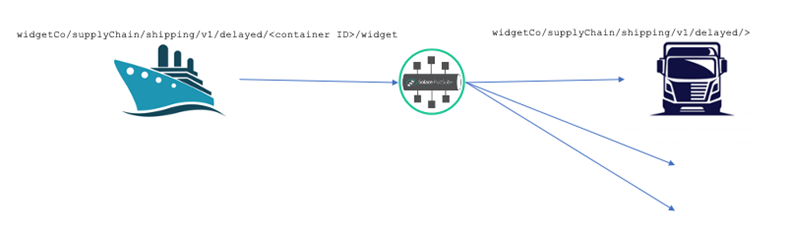

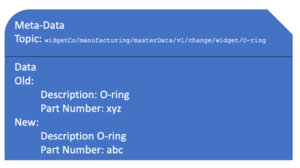

Let’s explore a shipping example: a container of widgets (needed to build one ultra-widget) is delayed due to a storm. The container vessel management system emits an event:

widgetCo/supplyChain/shipping/v1/delayed/<container ID>/widget

This event has a payload that reflects the details of the change, like the old and new arrival times, and the widget details, like article number, etc.

The transport management system needs to know about any delays, so it subscribes to these events:

widgetCo/supplyChain/shipping/v1/delayed/>

Notice the wildcard “/>”; this means that transport management will receive all shipping delay notifications.
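The subscription matching described above can be sketched as a small function. This is a simplified model based only on the examples in this post: it assumes `>` as the final level matches one or more remaining levels and `*` matches exactly one whole level. Real event brokers define their own wildcard rules, so check your broker's documentation. The function name `topic_matches` and the container ID `C123` are hypothetical.

```python
def topic_matches(subscription: str, topic: str) -> bool:
    """Return True if a published topic satisfies a subscription (simplified rules)."""
    sub_levels = subscription.split("/")
    topic_levels = topic.split("/")
    for i, sub_level in enumerate(sub_levels):
        if sub_level == ">" and i == len(sub_levels) - 1:
            # Trailing '>' needs at least one more level in the topic.
            return len(topic_levels) > i
        if i >= len(topic_levels):
            return False  # subscription is longer than the topic
        if sub_level != "*" and sub_level != topic_levels[i]:
            return False  # literal level does not match
    # No trailing '>': both must have the same number of levels.
    return len(sub_levels) == len(topic_levels)


delay_event = "widgetCo/supplyChain/shipping/v1/delayed/C123/widget"
transport_sub = "widgetCo/supplyChain/shipping/v1/delayed/>"

transport_gets_it = topic_matches(transport_sub, delay_event)
widget_only = topic_matches("widgetCo/supplyChain/shipping/v1/delayed/*/widget", delay_event)
```

Because the filtering lives in the subscription string, the transport management code never has to inspect and discard events it does not care about.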

Event-Driven Concepts for Supply Chain Management Digital Transformation

There are some key architectural concepts to consider before moving forward with supply chain management digital transformation. These concepts include:

- Event mesh

- Publish-subscribe messaging pattern

- Choreography vs. orchestration

- Event management

- Event-driven digital twin

Event Mesh for Supply Chain Management

In the examples above, I’ve shown how consumers of events can use subscriptions with wildcards to filter events to only those of interest. But what about event routing?

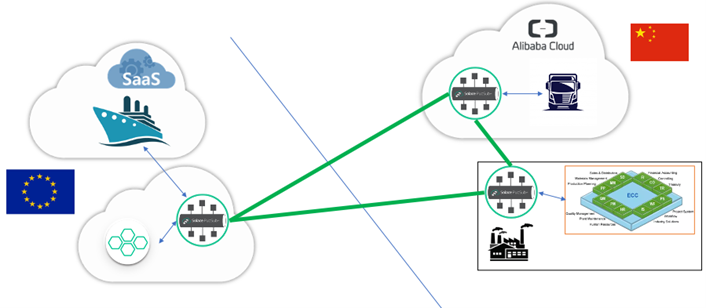

Applications may be hosted in different environments (on premises, in multiple clouds) and in different geographies. In supply chain management it’s very likely that you’re sending goods internationally. To avoid sending events all over the globe, or having everything connect to one central point, you have to decide which event should be sent where – called event routing. There’s no point in sending an event for a Singapore-bound container delay to a transport management application in Canada, unless it affects them directly.

Fortunately, there’s an easy way of doing this – you just look at the topic. All you need is for event brokers at the various locations to share information about what topic is being consumed where, and the brokers can decide on how and where events should be routed. Connecting event brokers together in a network so that they share consumer topic subscription information and dynamically route messages amongst themselves is called an event mesh.

In the example above, a Singapore event broker tells all the other brokers it has a listener for container delay events in Singapore. The example below demonstrates how an event mesh (connected event brokers) effectively eliminates geographical boundaries and the boundaries previously imposed by differing environments or cloud services:

- An on-premises ERP in China, where Widget & Co.’s assembly line is

- A transport management application SaaS in a cloud service in China

- A European shipping line’s container management SaaS hosted in Europe
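The routing idea behind an event mesh, where brokers share which topics are being consumed where, can be sketched as follows. This is a toy model under stated assumptions: the `EventMesh` class, the broker names, and the topic strings are hypothetical, and matching only supports a trailing `>` wildcard.

```python
from typing import Dict, List, Set


def matches(subscription: str, topic: str) -> bool:
    """Simplified matching: a trailing '>' matches one or more remaining levels."""
    if subscription.endswith("/>"):
        prefix = subscription[:-1]  # drop '>' but keep the trailing slash
        return topic.startswith(prefix) and len(topic) > len(prefix)
    return subscription == topic


class EventMesh:
    """Toy model of brokers sharing subscription information with each other."""

    def __init__(self) -> None:
        # broker name -> subscriptions advertised on behalf of its local consumers
        self.advertised: Dict[str, List[str]] = {}

    def advertise(self, broker: str, subscription: str) -> None:
        self.advertised.setdefault(broker, []).append(subscription)

    def route(self, topic: str) -> Set[str]:
        """Return only the brokers that have at least one interested consumer."""
        return {
            broker
            for broker, subs in self.advertised.items()
            if any(matches(sub, topic) for sub in subs)
        }


mesh = EventMesh()
mesh.advertise("singapore", "shipping/delayed/singapore/>")
mesh.advertise("canada", "shipping/delayed/vancouver/>")

destinations = mesh.route("shipping/delayed/singapore/C123")
```

In this sketch the Singapore-bound delay is forwarded only to the Singapore broker, and an event nobody has subscribed to is not forwarded anywhere.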

Real-Time Insight into Supply Chain Performance with Publish-Subscribe

Build an event stream and the listeners will come. As is their right, some business leaders would like up-to-date insights into supply chain performance. Imagine that part of the critical path of fulfilling an order for a luxury vehicle is the time it takes to get the vehicle through customs checks and delivered to the customer. You would need a dashboard showing the vehicle order progressing through shipping, port, customs, and land transport, complete with transit times. If you have an event mesh in place, the data will already be flowing (published); you would just need your dashboard to capture it (subscribe).

Your dashboard would subscribe to the following events (note, “EventMesh01” is a custom registration plate for the vehicle):

…/shipping/…/vehicle/EventMesh01/loadedOnBoard

…/shipping/…/vehicle/EventMesh01/unloaded

…/port/…/vehicle/EventMesh01/atCustoms

…/port/…/vehicle/EventMesh01/awaitingOnward

…/logistics/…/vehicle/EventMesh01/collected

…/logistics/…/vehicle/EventMesh01/deliveredToDealer

…/dealer/…/vehicle/EventMesh01/customerNotified

Real-time events (at customs, delivered to dealer) would be fed to the dashboard so business leaders would be able to visualize the state of the business, one luxury vehicle at a time if they so desired.

If you take it one step further, the luxury car manufacturer could offer a mobile application that the customer downloads and that subscribes to …/*/…/vehicle/EventMesh01/>, which would notify the customer of delivery status in real time.
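A dashboard consuming the events listed above might keep a per-vehicle history and derive transit times from it. The sketch below is hypothetical throughout: the `VehicleDashboard` class and the timestamps (in hours) are made up for illustration, and the elided topic levels are kept as the literal placeholder "…" because the post does not specify them. It assumes only that a topic ends in `vehicle/<vehicle id>/<status>`.

```python
from typing import Dict, List, Tuple


class VehicleDashboard:
    """Tracks each vehicle's status history from the events it receives."""

    def __init__(self) -> None:
        # vehicle id -> list of (status, timestamp in hours)
        self.history: Dict[str, List[Tuple[str, float]]] = {}

    def on_event(self, topic: str, timestamp_h: float) -> None:
        levels = topic.split("/")
        # Assumed topic tail: "vehicle", <vehicle id>, <status>
        if len(levels) < 3 or levels[-3] != "vehicle":
            return
        vehicle_id, status = levels[-2], levels[-1]
        self.history.setdefault(vehicle_id, []).append((status, timestamp_h))

    def current_status(self, vehicle_id: str) -> str:
        return self.history[vehicle_id][-1][0]

    def transit_times(self, vehicle_id: str) -> List[Tuple[str, str, float]]:
        """Hours elapsed between each consecutive pair of recorded statuses."""
        events = self.history[vehicle_id]
        return [(a[0], b[0], b[1] - a[1]) for a, b in zip(events, events[1:])]


dashboard = VehicleDashboard()
dashboard.on_event("…/shipping/…/vehicle/EventMesh01/loadedOnBoard", 0.0)
dashboard.on_event("…/shipping/…/vehicle/EventMesh01/unloaded", 72.0)
dashboard.on_event("…/port/…/vehicle/EventMesh01/atCustoms", 80.0)

status = dashboard.current_status("EventMesh01")
legs = dashboard.transit_times("EventMesh01")
```

The publishers in shipping, port, and logistics need no changes for this dashboard to exist; it is just one more subscriber.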

Supply Chain Coordination Through Choreography

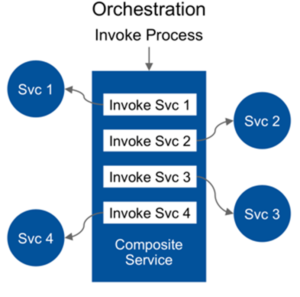

As you can imagine, supply chain management for a global enterprise with thousands of services would need some serious coordination. You could choose to have a single service that keeps track of all the other services, taking action if there’s an error. This would be considered orchestration – a single point of coordination – providing a single point of reference when tracing a problem, but also a single point of failure and a bottleneck.

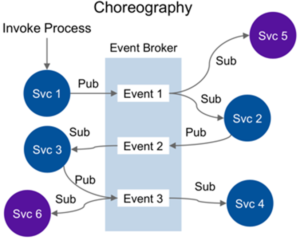

With event-driven architecture, services are relied upon to understand what to do with an incoming event, perhaps even generating further events. This leads to a “dance” of individual services doing their own things, but when combined, producing an implicitly co-ordinated response – hence the term choreography. My colleague Jonathan Schabowsky has written a brilliant blog post on this topic, which I encourage you to read: Microservices Choreography vs Orchestration: The Benefits of Choreography.

You may be wondering “But what about error conditions?” Well, an error is also an event – so services that would be affected by such an error would be set up to consume it and react accordingly. This has extraordinary relevance in supply chain management use cases. Let’s look at another example, starting right at the beginning of the supply chain:

A farmer is growing vanilla pods to provide flavour for a premium ice-cream brand. The pods are scheduled to be harvested on a set date, but poor weather the day before delays the harvest of the entire crop. If you’re the ice-cream company using orchestration, your vanilla pod harvest service must:

- Delay road transport to pick up the pods;

- Delay the vessel scheduled to collect the pods, making a decision whether to dock the vessel as usual or have it wait outside port;

- Re-schedule the port slot;

- Ensure the same is done at each factory location for the onward leg to the factories;

- Delay shipments of milk and other ingredients, and the downstream logistics; and

- Update ERP so that factory production slots are updated.

That’s a lot of moving pieces. This complex service would have many interactions that would make it difficult to change or add new services without worrying that you’ll break something or introduce delay that will break the SLA times of the service as a whole.

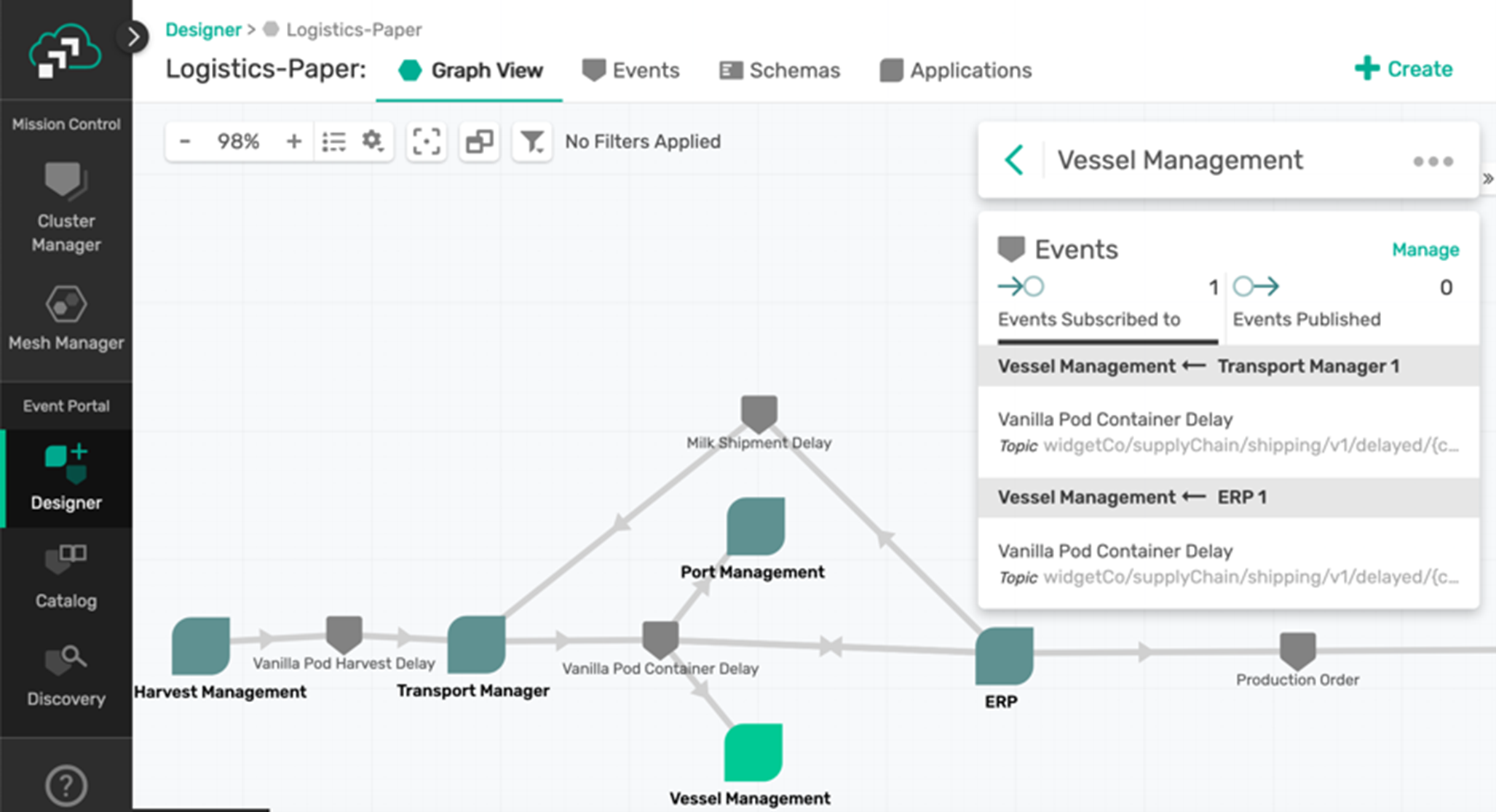

Now consider the choreography approach. The harvest management application would broadcast a “vanilla pod delayed” event. Its job is now done. The other applications would consume the event and act accordingly:

- The land transport management application re-schedules the appropriate transport trucks/lorries and emits a “vanilla pod container delayed” event;

- The vessel shipment management application and port management application receive the container delayed event, their business logic decides on the scheduling impact, and subsequent vessel and port delay events are generated; and

- The ERP is updated to reschedule production.
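The choreography above can be sketched with a toy broker and a handful of independent handlers. Everything named here is hypothetical: the `Broker` class, the topic strings, and the choice to have the ERP subscribe directly to the harvest delay event (the post does not say which event the ERP consumes). Delivery is synchronous and in-process purely to keep the example short.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class Broker:
    """Toy in-process broker with exact-match topics and synchronous delivery."""

    def __init__(self) -> None:
        self.handlers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)
        self.published: List[str] = []  # record of every topic published, in order

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self.handlers[topic].append(handler)

    def publish(self, topic: str, payload: dict) -> None:
        self.published.append(topic)
        for handler in list(self.handlers[topic]):
            handler(payload)


broker = Broker()
actions: List[str] = []

HARVEST = "harvest/vanillaPod/delayed"
CONTAINER = "transport/vanillaPodContainer/delayed"


def land_transport(event: dict) -> None:
    actions.append("land transport: trucks rescheduled")
    broker.publish(CONTAINER, event)  # emits a follow-on event of its own


def vessel_management(event: dict) -> None:
    actions.append("vessel: schedule reviewed")
    broker.publish("vessel/delayed", event)


def port_management(event: dict) -> None:
    actions.append("port: slot reviewed")
    broker.publish("port/delayed", event)


def erp(event: dict) -> None:
    actions.append("erp: production rescheduled")


# Each service subscribes only to what it needs; there is no central coordinator.
broker.subscribe(HARVEST, land_transport)
broker.subscribe(HARVEST, erp)
broker.subscribe(CONTAINER, vessel_management)
broker.subscribe(CONTAINER, port_management)

# The harvest management application publishes once; its job is then done.
broker.publish(HARVEST, {"crop": "vanilla pods", "delay_days": 1})
```

Note that the harvest application contains no knowledge of trucks, vessels, ports, or the ERP; each reaction lives in the service that owns it.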

By localizing the responsibility to act on an event to the event consumer itself, rather than relying on an orchestrator service, we achieve better decoupling and separation of concerns. We also eliminate the orchestrator as a bottleneck both in performance terms and in agility/complexity terms.

It may still seem complicated to understand and coordinate, but there is tooling available for managing, visualizing, designing, and sharing event streams, known as an event portal.

Supply Chain Event Management with an Event Portal

As you’ve seen from the vanilla pod example, it can be difficult to identify the best way to address a delay. You would need information from both the port and the vessel to understand the optimal solution of how to manage the shipping of the delayed vanilla pod containers.

An event portal is a solution that lets people create, catalog, share and manage events and event-driven applications. An event portal can help you visualize them as interconnected network diagrams that your team can go over in design reviews. That way, when you deploy your events and event-driven applications, it’s easy to see whether the design is in sync with the reality at runtime (and changes are all version controlled and tracked).

Event-Driven Digital Twin

This is where the concept of a digital twin can help – a simulation model of the actors in a business process, so that scenarios can be played out to understand their consequences. For example, you may wish to delay a vessel so that it can load the vanilla pod containers as they arrive from the farm, but what about the costs of delays to other containers already on board?

To accurately reflect the current state of the business, the simulation models – the digital twins of containers, vessels etc. – must be kept up to date with the latest state of the business. A heavily loaded vessel may be more delayed by weather than a lightly loaded one. This means the digital twins must absorb state updates from a large number of sources within the business.

Would you like the responsibility of updating every relevant orchestration process to update a digital twin? Probably not. By creating an event-driven digital twin, you can make use of the event mesh to subscribe to all relevant events, gradually refining your modelling as the twin is developed.
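As a rough illustration of an event-driven digital twin, the sketch below keeps a model of a vessel up to date from load and unload events and then answers a simple what-if question about delay cost. The `VesselTwin` class, the event type names, the container IDs, and the flat cost-per-container-hour model are all assumptions made for this example; a real twin would model far more.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class VesselTwin:
    """Minimal digital twin of a vessel, updated purely from incoming events."""

    vessel_id: str
    containers_on_board: Set[str] = field(default_factory=set)

    def on_event(self, event_type: str, container_id: str) -> None:
        """Absorb a state update published by some other system."""
        if event_type == "loadedOnBoard":
            self.containers_on_board.add(container_id)
        elif event_type == "unloaded":
            self.containers_on_board.discard(container_id)

    def cost_of_delay(self, hours: float, cost_per_container_hour: float) -> float:
        """What-if: cost of holding the vessel, charged per container already on board."""
        return hours * len(self.containers_on_board) * cost_per_container_hour


twin = VesselTwin("vessel-1")
for event_type, container_id in [
    ("loadedOnBoard", "C1"),
    ("loadedOnBoard", "C2"),
    ("loadedOnBoard", "C3"),
    ("unloaded", "C2"),
]:
    twin.on_event(event_type, container_id)

# Should the vessel wait 12 hours for the delayed vanilla pod containers?
waiting_cost = twin.cost_of_delay(hours=12, cost_per_container_hour=5.0)
```

Because the twin is just another subscriber, new event sources can be added to refine the model without changing the systems that publish them.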

Conclusion: Why EDA for Supply Chain Management Digital Transformation?

I hope I have effectively made the case that event-driven architecture is a worthwhile improvement to your supply chain management infrastructure. To recap, here are the main principles of event-driven architecture that apply to supply chain management:

- Take advantage of the publish/subscribe messaging pattern.

- Use event brokers to make sure the right services and applications get the right events.

- Create an event mesh to distribute events among decoupled applications, cloud services and devices.

- Use topics to make sure an event is only sent once and that applications only receive what they need.

- Choreograph services with event streams rather than introducing a single point of failure with orchestration.

- Manage your event-driven architecture with an event portal for visualization, design, and discovery.

- Create an event-driven digital twin to develop simulation models for making better business decisions.

Why should you do all of this?

- Responsiveness. Since everything happens as soon as possible and nobody is waiting on anyone else, event-driven architecture provides the fastest possible response time.

- Scalability. Since you don’t have to consider what’s happening downstream, you can add service instances to scale. Topic routing and filtering can divide up services quickly and easily – as in command query responsibility segregation.

- Agility. If you want to add another service, you can just have it subscribe to an event and have it generate new events of its own. The existing services don’t know or care that this has happened, so there’s no impact on them.

- Agility again. By using an event mesh you can deploy services wherever you want: cloud, on premises, in a different country, etc. Since the event mesh learns where subscribers are, you can move services around without the other services knowing.

These advantages are especially relevant in supply chain management use cases where a single change can have huge consequences rippling all the way down the chain. Being able to react to real-time information, and to add new services and analytics quickly and easily, considerably enhances supply chain operations and management.



Tom Fairbairn
