When capital markets firms began using rich internet applications (RIAs) built with JavaScript, Flash/Flex, Silverlight and Objective-C, it was accepted that applications designed for internet environments come with internet-like latencies. So firms that measure the performance of internal trading systems in the tens of microseconds got used to tens of milliseconds of latency when building Single Dealer Platforms, retail brokerage consoles, or other real-time applications.

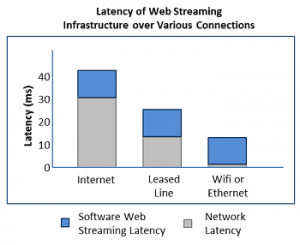

After all, we’re talking about connecting over the public internet, where the best you can hope for is about 20-30 milliseconds of round trip time just for the network. So who cares if the infrastructure distributing data to the RIAs adds a handful of milliseconds? That’s a small percentage of total latency, right?

Not necessarily.

RIAs Not Just for the Public Internet

RIAs may have been created with the public internet in mind, but they’re so feature-rich that companies are now using them as a single application/interface for people connecting over all kinds of other networks, including:

- leased lines (between offices)

- Wi-Fi (think iPad on the trading floor)

- LANs

Suddenly, the RIA infrastructure latency that seemed like noise in the context of an internet round trip time becomes significant on a much faster network.
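To put rough numbers on that, here is a minimal back-of-the-envelope sketch. Only the 20-30 millisecond internet round trip comes from this post; the round trip times for the other networks and the 5 millisecond infrastructure figure are illustrative assumptions, not measurements, and the function name is made up for this example.

```python
def infrastructure_share(network_rtt_ms: float, infra_ms: float) -> float:
    """Return the fraction of total latency added by the streaming infrastructure."""
    return infra_ms / (network_rtt_ms + infra_ms)


# Round trip times in milliseconds. Only the public internet figure is taken
# from the post (midpoint of 20-30 ms); the rest are assumptions for illustration.
NETWORK_RTT_MS = {
    "public internet": 25.0,
    "leased line": 5.0,   # assumption
    "wifi": 2.0,          # assumption
    "LAN": 0.5,           # assumption
}

# Assumed value for "a handful of milliseconds" of infrastructure latency.
INFRA_MS = 5.0

if __name__ == "__main__":
    for name, rtt in NETWORK_RTT_MS.items():
        share = infrastructure_share(rtt, INFRA_MS)
        print(f"{name:16s} rtt={rtt:5.1f} ms  infrastructure share={share:6.1%}")
```

Under these assumed numbers, the infrastructure accounts for roughly 17% of total latency over the public internet but about 91% on the LAN, which is the shift in proportion the paragraph above describes.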

Hardware Brings True Low Latency to Web Streaming and RIAs

Stay Flexible My Friends

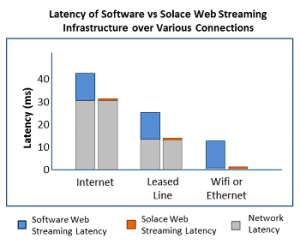

If you’re building trading applications and you can choose to save 5-10 milliseconds in a loaded web streaming environment, regardless of the underlying network, why wouldn’t you? On a high-speed LAN, you’d be crazy to introduce that much latency, and over the internet, it’s a very meaningful reduction. As a bonus, that same environment is more reliable, has a smaller datacenter footprint, costs less, and is pre-integrated with inside-the-firewall messaging. RIAs are likely to find their way into many parts of the enterprise, inside and out, so why not build on a platform that gives you the lowest possible latency and leaves you ready for anything?


Solace