When building an application using a microservices architecture, it is much easier to horizontally scale those microservices (that is, to add more service instances on demand) when those microservices are stateless. However, implementing timers can be a challenge. How can you “set aside” an event to be processed later, perhaps by a different instance of the microservice, without storing processing state in the microservice? The answer is to use a delayed delivery queue in your event mesh.

What is a Stateless Microservice?

According to O’Reilly, “Stateless microservices do not maintain any state within the services across calls. They take in a request, process it, and send a response back without persisting any state information.” On the other hand, stateful microservices maintain processing state in RAM or on disk so that when a new request or event is received, the service instance can retrieve the previous processing state, and use that state to correctly process the new request.

It is easier to horizontally scale your microservices (adding more service instances as workload increases) when the services are stateless. Each instance of the microservice operates completely independently. When microservices are stateful, you either need to find a way to share state among service instances (which can erode the gains achieved through horizontal scaling), or you need to further complicate the architecture by making the event mesh more aware of the application logic (so that the mesh can ensure all requests or events associated with a given business transaction are delivered to the same service instance). Also, stateful service instances might need to keep state for thousands or tens of thousands of transactions. Before you know it, you have resource-hungry, tightly coupled service instances… the very things you were trying to avoid with a microservices architecture!

For all these reasons, it is preferable to build stateless microservices whenever you can. But how do you implement something like a timer, which is inherently stateful? The answer is to use the event mesh: delayed delivery queues in a PubSub+ event mesh make it easy to implement the timers your stateless services might need.

Stateless Microservices Example: Time Delays

Using Delayed Delivery Queues in an E-Commerce Microservices Application

In this blog post, I’ll explore two scenarios in an e-commerce application example where delayed delivery queues really come in handy:

- Retrying processing after a time delay. I’ll use a credit card purchase authorization in an e-commerce application to illustrate this use case.

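- Processing one event, published to the event mesh, by different microservices at different times. I’ll use the order processing that follows a successful authorization to illustrate this use case.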

1. Delayed Redelivery – Retrying Processing After a Time Delay

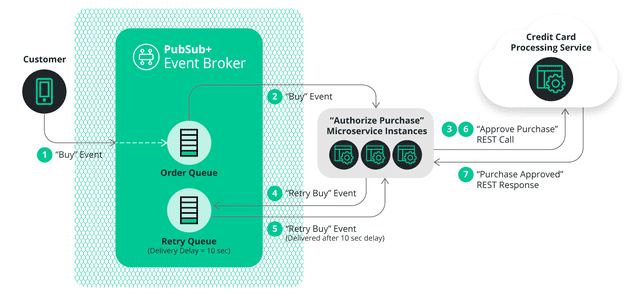

In an e-commerce application, your customer is going to add some items to a shopping cart, enter their credit card information, and then (hopefully) press the “buy now” button on your shopping website. At that point, a “buy” event will be generated by the web page and sent into the event mesh.

The “buy” event will be received by an “authorize purchase” microservice, which is responsible for authorizing the customer’s credit card purchase. That means making a REST call to your credit card processor. Credit card services process large numbers of transactions for many clients, and it is not unusual for the REST calls to occasionally time out or return a transient, retriable error such as “503 Service Temporarily Unavailable”.

How is a stateless microservice supposed to react to this? The answer is to implement a backout/retry pattern.

A delayed delivery queue offers an elegant solution for implementing the backout/retry pattern. On a timeout or “try again” response, design the microservice to send the “buy” event to a “retry” queue that is configured with a delivery delay time. The “authorize purchase” microservice can then go on to process other events that it receives from the event mesh. Once the delivery delay time has passed, the delayed delivery queue will deliver the “buy” event to an instance of the “authorize purchase” microservice (it could be the original instance, or a different instance) and the microservice can retry the credit card authorization. (Implementation note: it is a good idea for the microservice to insert a “retry count” field into the “buy” event, and increment that counter every time it attempts to authorize the purchase, so the purchase can be failed by the microservice after a set number of unsuccessful retries.)
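To make the retry flow concrete, here is a minimal Python sketch. It does not use the PubSub+ API: `DelayedQueue` is a hypothetical in-memory stand-in for a queue with a delivery delay, and `MAX_ATTEMPTS`, `RETRY_DELAY_SECONDS`, the `retry_count` field name, and the `call_processor` callback are all assumptions chosen for illustration. The point is that the handler keeps nothing between calls; the retry count travels inside the event.

```python
import heapq
import itertools

MAX_ATTEMPTS = 3           # assumed limit on authorization attempts
RETRY_DELAY_SECONDS = 30   # assumed delivery delay configured on the retry queue


class DelayedQueue:
    """Hypothetical in-memory stand-in for a broker queue with a delivery delay."""

    def __init__(self, delay_seconds):
        self.delay_seconds = delay_seconds
        self._heap = []
        self._seq = itertools.count()  # tie-breaker so events are never compared

    def publish(self, event, now):
        # The event becomes deliverable only after the delay has elapsed.
        deliver_at = now + self.delay_seconds
        heapq.heappush(self._heap, (deliver_at, next(self._seq), event))

    def pop_due(self, now):
        """Return the events whose delivery delay has passed at time `now`."""
        due = []
        while self._heap and self._heap[0][0] <= now:
            due.append(heapq.heappop(self._heap)[2])
        return due


def authorize_purchase(event, call_processor, retry_queue, now):
    """Stateless handler: all retry bookkeeping lives in the event itself."""
    attempt = event.get("retry_count", 0) + 1
    status = call_processor(event)  # assumed to return an HTTP-style status code
    if status == 200:
        return "process_order"
    if status == 503 and attempt < MAX_ATTEMPTS:
        # Park a copy of the event, with the updated counter, on the retry queue.
        retry_queue.publish({**event, "retry_count": attempt}, now)
        return "retry_scheduled"
    return "purchase_failed"
```

Because the counter is carried in the event, any instance of the service can pick up the redelivered “buy” event and decide whether to retry or give up. In a real deployment the delay would be a property of the broker queue rather than something the service computes.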

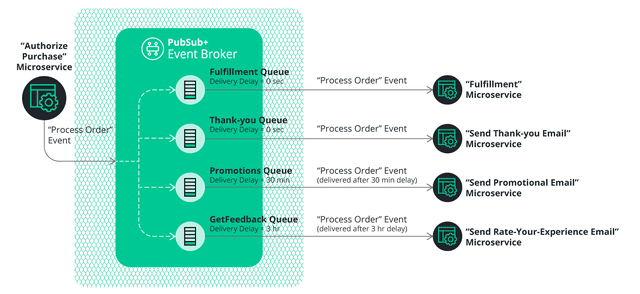

Once the credit authorization succeeds, the microservice can send a “process order” event into the event mesh. That single “process order” event might need to trigger different microservices at different times, which brings us to our second use case.

2. One Event Processed by Different Microservices at Different Times

With an event mesh, it is easy to have a single event delivered to several queues serviced by different microservices, each performing a different action on the event. And if some of those microservices are consuming from delayed delivery queues, the processing can occur at different times after the event was generated.

In this e-commerce example, the “process order” event could be queued for four different microservices:

- A “fulfillment” service which dispatches instructions to the warehouse to pick the items off the shelf and package the order. No time delay needed before processing this event.

- A “thank-you” service which sends a “thank you” email to the customer. No time delay needed before processing this event.

- A “promotions” service which, 30 minutes after the order is received, sends the customer an email with some suggested add-on purchases, based on the products the customer ordered.

- A “rate-your-experience” service which, 3 hours after the order is received, sends the customer an email with a link to a survey where they can rate their online shopping experience.

The time delays are all implemented using delayed delivery queues. The microservices don’t need to keep any state or run their own timers — the event mesh takes care of it for you.
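The fan-out described above can be sketched in a few lines of Python. This is only an illustration of the timing, not broker configuration: the `QueueSubscription` type and the `delivery_schedule` function are hypothetical names, and the delays are the ones from the example (none, none, 30 minutes, 3 hours), expressed in seconds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueueSubscription:
    """Hypothetical description of one queue attracting the "process order" event."""
    service: str
    delay_seconds: int  # delivery delay configured on that service's queue


SUBSCRIPTIONS = [
    QueueSubscription("fulfillment", 0),
    QueueSubscription("thank-you", 0),
    QueueSubscription("promotions", 30 * 60),
    QueueSubscription("rate-your-experience", 3 * 60 * 60),
]


def delivery_schedule(published_at, subscriptions=SUBSCRIPTIONS):
    """Return (deliver_at, service) pairs for one published event, earliest first."""
    return sorted((published_at + s.delay_seconds, s.service) for s in subscriptions)


if __name__ == "__main__":
    # One event published at t=0 seconds fans out to four queues.
    for deliver_at, service in delivery_schedule(0):
        print(f"t+{deliver_at:>5}s -> {service}")
```

The publisher sends the event once and knows nothing about the delays. Each delay belongs to the consuming service's queue, so changing when the survey email goes out would not touch the “authorize purchase” service at all.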

Conclusion: Delayed Delivery Queues in Stateless Microservices

No matter what your microservices application might be, if the service needs a time delay before processing an event, delayed delivery queues will provide those delays so you don’t need to add complex state to your microservices. Delayed delivery queues are supported in the PubSub+ Event Broker Release 9.10 and beyond.


Steve Buchko
