The Internet of Things is finally happening at mass scale now that the cost of sensors, networks and computing power has fallen far enough to make the economics work. There are many articles on the web that focus on the sensors, the processes and the opportunity for analytics to drive new efficiencies using IoT, so I will focus on how the data moves from place to place within the architecture. You might also be interested in a blog post called “Why IoT Needs Messaging”.

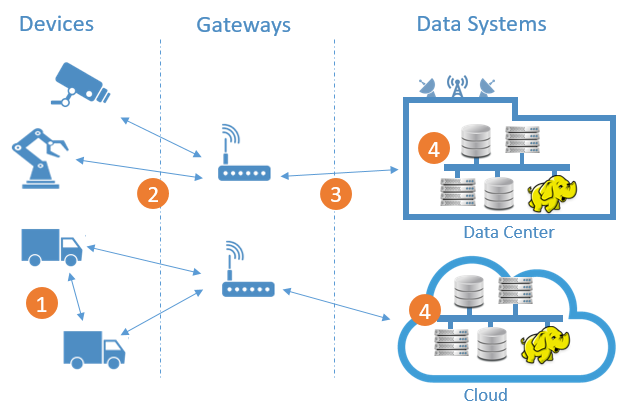

In an IoT architecture there are four different kinds of connections across which data moves:

1) device to device, 2) device to gateway, 3) gateway to data system and 4) between data systems.

Device to device communications

When devices need to communicate with other devices, the use case likely requires that they receive data instantaneously because the timeliness of the incoming data is paramount. Examples of device to device communications include:

- Connected cars sharing information to cooperate for safer, more efficient traffic flow.

- Military command and control where drones, planes and ground forces need to coordinate but no reliable network is available.

- On the factory floor where industrial control systems, robots and sensors must work together to ensure the safe, efficient assembly of components within very specific parameters.

- In the field during emergency response or military scenarios where equipment like remote cameras and other sensors, drones and robots must coordinate their movement and actions as they assist and inform decision makers, operators and other personnel.

Very few IoT applications today include this form of communication, mostly because the devices are not sophisticated enough to handle it. As technology advances and use cases become more sophisticated, this will become more common.

Device to gateway connections

Each “thing” needs to send its data to an aggregating gateway node through what is sometimes called the “fog” layer. It is called the fog because gateways can physically reside in a wide range of places depending on requirements, including clouds and datacenter DMZs protected by security firewalls. Gateways perform two common functions. First, they consolidate and route data from sensor devices to the appropriate data systems within the datacenter or cloud. Second, they can analyze or aggregate device data, then forward the result to the core systems and/or respond directly to devices when they notice a time-sensitive exception condition.
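To make the second gateway function concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Reading` and `Gateway` names, the threshold-based alarm, the `"shutdown"` command and the fixed batch size are hypothetical, and plain lists stand in for the real links to devices and core systems.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    device_id: str
    value: float  # e.g. a temperature; the unit is an assumption


class Gateway:
    """Hypothetical gateway: replies to exceptions locally, forwards summaries."""

    def __init__(self, alarm_threshold, batch_size):
        self.alarm_threshold = alarm_threshold
        self.batch_size = batch_size
        self.buffer = []      # readings waiting to be aggregated
        self.forwarded = []   # stands in for the link to the core systems
        self.commands = []    # stands in for replies sent back to devices

    def on_reading(self, reading):
        # Time-sensitive exception: answer the device right away,
        # without a round trip to the datacenter.
        if reading.value > self.alarm_threshold:
            self.commands.append((reading.device_id, "shutdown"))
        self.buffer.append(reading)
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self):
        # Aggregate the batch into one summary message for the core systems.
        if not self.buffer:
            return
        values = [r.value for r in self.buffer]
        self.forwarded.append(
            {"count": len(values), "mean": mean(values), "max": max(values)}
        )
        self.buffer.clear()
```

With a batch size of three, three raw readings become one summary message upstream, while an out-of-range reading triggers an immediate reply to the device that sent it.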

The type of data or message exchange pattern that’s best depends on the device’s capabilities and what it is asked to do. How much power, processing, and persistent and non-persistent memory does it have? What is the network capacity and how reliable is it? How often does the device generate data? How important is its data quality? How secure is the device? These criteria will determine what protocol and quality of service is the best choice to move data between the device and the gateway.

Gateway to data systems

The gateway is usually a much more capable computing device than the sensor, with a reliable and fast network connection. So here the determination of what message protocols and qualities of service to use is driven not by the gateway’s computing capabilities or connectivity, but by data traffic patterns such as periodic burstiness and congestion, number of concurrent connections required, and security requirements.

Between the data systems in the datacenter or cloud

Within the secure datacenter, requirements such as integration with existing applications, high throughput, high availability, disaster recovery and ease of deployment become the critical decision factors.

Of course, this flow of data is bi-directional: while much more traffic will “fan in” from device to gateway to datacenter, select data will also “fan out” from datacenter to devices to initiate change or adaptation of the “things”. An example would be a connected car that is told about a new accident and updates its navigation system with new route data.

IoT Protocols

When selecting messaging protocols for IoT, you will want to approach the challenge on a connection by connection basis. It is unlikely that you’ll be able to choose just one protocol for the end-to-end picture above without compromising some aspect of your system. First let’s understand each of the commonly used protocols and what they do best.

MQTT

| Pros | Cons |
|---|---|
| | |

MQTT was developed to meet device to gateway messaging requirements, and doesn’t meet most needs of gateway to a datacenter or intra-datacenter connections.

JMS

| Pros | Cons |
|---|---|
| | |

JMS makes sense for gateway to datacenter connections, and within the datacenter. JMS is by far the most widely used datacenter messaging stack within enterprises today, and the most widely embedded messaging API across tools like analytics engines, process engines, and monitoring platforms.

If you have a well-established JMS footprint, it may make sense to continue – it’s proven, it’s easy and it requires the least change to existing systems. If you have a greenfield application, or your toolset can as easily support AMQP or JMS, many architects would choose AMQP over JMS today for reasons I’ll explain next.

AMQP

| Pros | Cons |
|---|---|
| | |

It is worth noting that one of the most popular APIs used with AMQP is JMS. This means that if you currently rely on tools that provide JMS integration at the API level, by choosing an AMQP protocol/broker accessible via JMS, you can migrate towards AMQP without recoding applications. I’ll write more about this important concept in the future – stay tuned.
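The distinction between an API and a wire protocol is what makes this migration possible, and a small sketch may help. The Python below is only an analogy, not JMS or AMQP code: `Producer` stands in for a messaging API that applications code against, and the two provider classes are hypothetical stand-ins for brokers that differ only in what they put on the wire.

```python
from typing import Protocol


class Producer(Protocol):
    """Stand-in for a messaging API: the only thing the application sees."""

    def send(self, destination: str, body: str) -> None: ...


class LegacyWireProducer:
    """Hypothetical provider that speaks a vendor-specific wire protocol."""

    def __init__(self):
        self.frames = []

    def send(self, destination, body):
        self.frames.append(("vendor-wire", destination, body))


class AmqpWireProducer:
    """Hypothetical provider that speaks AMQP on the wire, same API on top."""

    def __init__(self):
        self.frames = []

    def send(self, destination, body):
        self.frames.append(("amqp", destination, body))


def publish_order(producer: Producer, order_id: int) -> None:
    # Application logic depends on the API only, never on the wire format,
    # so swapping the provider requires no change here.
    producer.send("orders", f"order {order_id} created")
```

Calling `publish_order` with either provider runs the same application code; only the frames that leave the process differ.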

REST

| Pros | Cons |
|---|---|
| | |

CoAP

| Pros | Cons |
|---|---|
| | |

DDS

| Pros | Cons |
|---|---|
| | |

Building an End-to-End Strategy

Device to device communications

Here TCP’s point-to-point streams often do not meet the requirements, because reliable delivery means retransmitting data until an acknowledgment is received. For example, two cars travelling at 100 km per hour that want to share data with each other don’t have the luxury of waiting for a message to be acknowledged before acting on it. Each must make the best decision it can with whatever data has already arrived from the other devices.
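To put a number on it: 100 km per hour is about 27.8 metres per second, so a hypothetical 100 ms retransmission delay means the car has moved roughly 2.8 metres before the data arrives. One common way to cope with unacknowledged delivery is "latest value wins": the receiver keeps only the newest update from each sender and ignores anything stale. The sketch below assumes sender-assigned sequence numbers; the `PositionUpdate` and `LatestValueCache` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PositionUpdate:
    sender: str
    seq: int            # sender-assigned sequence number (an assumption)
    position_m: float   # position along the road, in metres


class LatestValueCache:
    """Keeps the newest update per sender; never waits for missing ones."""

    def __init__(self):
        self._latest = {}

    def on_datagram(self, update):
        """Apply an update. Returns False if it is stale or a duplicate."""
        current = self._latest.get(update.sender)
        if current is not None and update.seq <= current.seq:
            return False  # older than what we already have: drop it
        self._latest[update.sender] = update
        return True

    def position_of(self, sender):
        update = self._latest.get(sender)
        return None if update is None else update.position_m
```

A lost update is simply superseded by the next one, and an update that arrives out of order is discarded rather than applied late.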

That’s why a non-TCP transport layer such as UDP is desired here. Of the protocols discussed here, CoAP and DDS, along with MQTT-SN (the MQTT variant for sensor networks), support UDP multicast. DDS differs from the others in how it matches publishing and subscribing devices: it does not rely on a typical hub and spoke message broker architecture, but on a data bus where devices are discovered as peers and matched based on their data topic, type and QoS parameters. DDS’s approach is data-centric: it understands the data and its message formats, and applies QoS rules for filtering and delivery. This is quite a contrast to MQTT-SN and CoAP, which treat the body or content of a message as opaque and send the message to its destination based only on its header.
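The data-centric matching described above can be sketched in a few lines of Python. This is an illustration of the idea, not the DDS API: the `Writer` and `Reader` classes and the `deliver` function are hypothetical, reliability is reduced to two levels, and the content filter is an ordinary Python callable that inspects the sample.

```python
from dataclasses import dataclass
from typing import Callable

# Simplified reliability levels; a writer must offer at least what a reader requests.
BEST_EFFORT, RELIABLE = 0, 1


@dataclass
class Writer:
    topic: str
    type_name: str
    offered_reliability: int


@dataclass
class Reader:
    name: str
    topic: str
    type_name: str
    requested_reliability: int
    content_filter: Callable[[dict], bool]  # looks inside the data itself


def matches(writer, reader):
    # Peers are matched on topic, data type and QoS compatibility.
    return (
        writer.topic == reader.topic
        and writer.type_name == reader.type_name
        and writer.offered_reliability >= reader.requested_reliability
    )


def deliver(writer, readers, sample):
    # A sample reaches only matched readers whose filter accepts its content.
    return [
        r.name for r in readers if matches(writer, r) and r.content_filter(sample)
    ]
```

A header-only router would stop at the topic comparison; here the decision also depends on the declared type, the QoS on both sides and the fields of the sample.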

That said, device to device use cases are in their infancy and will be for a while; the vast majority of use cases have devices communicating to gateways or datacenters. As IoT devices begin to tackle this problem, it is likely that we will see more protocol evolution to better handle existing and future requirements.

Device to gateway connections

MQTT is best suited if a device or “thing” is very limited in its power, processing, memory or network capacity. AMQP may make sense if the device has sufficient computing resources and communications are not constrained. RESTful exchange is a necessary choice if the device is sufficiently simple that it can only support HTTP/TCP, or if you don’t need quality of service (i.e., if data loss is acceptable).
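These rules of thumb can be written down as a small decision function. The sketch below is one possible reading of the paragraph above, not a definitive rule set: the `DeviceProfile` fields are hypothetical, and the order in which the checks are applied (REST first, then MQTT, then AMQP) is an assumption the text does not spell out.

```python
from dataclasses import dataclass


@dataclass
class DeviceProfile:
    constrained: bool    # very limited power, processing, memory or network
    http_only: bool      # the device can only support HTTP/TCP
    data_loss_ok: bool   # no quality of service is needed


def choose_device_protocol(profile):
    """Suggest a device-to-gateway protocol from the rules of thumb above."""
    # Assumed precedence: an HTTP-only stack leaves no alternative,
    # and if data loss is acceptable the simplest option suffices.
    if profile.http_only or profile.data_loss_ok:
        return "REST"
    if profile.constrained:
        return "MQTT"
    return "AMQP"
```

For example, a battery-powered sensor that must not lose readings maps to MQTT, while a well-resourced device on a good network maps to AMQP.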

Gateway to data systems

MQTT is probably not the best choice here due to its lack of message properties and lack of support for message queues, but if it meets the application’s messaging requirements, it could be a good enough choice. JMS may be the right choice if the datacenter’s existing applications already use JMS as their messaging service. AMQP may be the best choice if there is no messaging service in place yet, or as a strategy to migrate applications that use the JMS API towards an open protocol and avoid vendor lock-in.

Between data systems in the datacenter or cloud

The most important consideration for any messaging protocol in the datacenter will be to integrate seamlessly with the existing applications’ messaging service(s). Today this is most commonly JMS simply because it is so pervasive, although newer applications may use AMQP (possibly under a JMS API). Other messaging systems, such as IBM MQ, Kafka and various proprietary legacy brokers, are usually connected via protocol bridging. High availability (HA), disaster recovery (DR), scalability and ease of deployment of the messaging solution that implements these protocols are also very important considerations within the core datacenter or cloud applications.

In summary, each link in an IoT architecture has different needs and dynamics and most application requirements will likely lead you towards using multiple protocols within an end-to-end application. For any given link, your choice may be obvious based on your requirements, in which case you can just get on with it. If your pros and cons list makes the choice difficult, it is generally good advice to pick the most open protocol or API you can. It will maximize your options in the future as your application evolves.

Whichever protocol or protocols you pick, note that Solace technology can meet your message routing requirements thanks to broad protocol support and the ability to be deployed as a single HA cluster or a tiered deployment of message routers that supports millions of connections and messages per second.
