Kafka has established itself as a powerful event streaming platform, but organizations increasingly discover they need capabilities beyond Kafka’s core design—or find that Kafka’s operational complexity doesn’t match their requirements. This guide explores how Solace addresses these needs, whether you’re extending an existing Kafka deployment or evaluating alternatives from the start.

Two Paths Forward

Path 1: You’re Already Using Kafka

You’ve invested in Kafka infrastructure and expertise. Kafka handles high-volume stream processing well within your clusters. However, you’re running into requirements and pain points it wasn’t designed to address:

- Multi-protocol support for IoT, legacy systems, or external partners

- Geographic distribution across regions or to edge locations

- Push-based delivery for low-latency use cases

- Operational complexity at scale

- Protocol translation layers you’d rather not maintain

Solace complements your Kafka deployment, extending capabilities without requiring you to abandon existing investments.

Path 2: You’re Evaluating Kafka

You’re researching event streaming platforms, and “Kafka” keeps appearing in your searches. Before committing to Kafka’s operational model, consider:

- Do you need Kafka’s specific strengths (extreme throughput, pull-based processing)?

- Can your team support the operational complexity?

- Do your applications fit Kafka’s protocol and architecture?

- Might a platform providing comparable event streaming with broader integration capabilities better match your needs?

Solace may replace Kafka entirely for use cases where operational simplicity, multi-protocol support, and geographic distribution are more important than Kafka-specific capabilities.

Are You Using Kafka Today? (Extending Scenarios)

Why Organizations Use Kafka and Solace Together

Kafka’s core strength lies in high-throughput event streaming, durable event logs, and stream processing within clusters or regions.

Solace complements Kafka by solving enterprise distribution and integration challenges that extend beyond Kafka’s design goals.

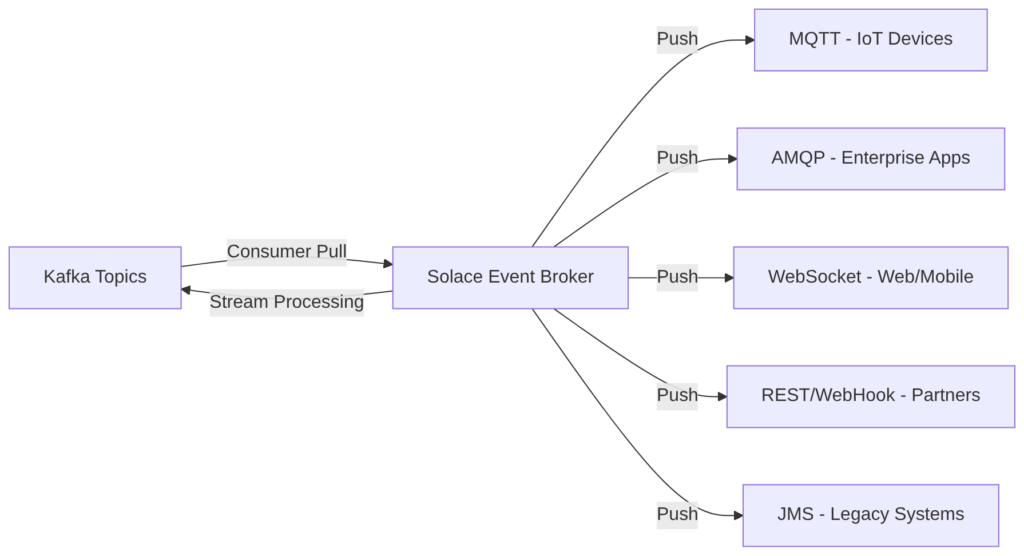

Challenge 1: Multi-Protocol Support and Broker-Push Delivery

The Kafka limitation:

Kafka implements a topic-based publish-subscribe pattern at the application level (producers publish, consumers subscribe), but uses a consumer-pull delivery mechanism at the protocol level—clients must implement polling loops to fetch events. This architecture works well for high-throughput batch processing but creates challenges:

- IoT devices expecting broker-push delivery via MQTT (minimal bandwidth, immediate delivery)

- Legacy systems requiring AMQP or JMS with broker-initiated delivery

- Partners consuming via REST APIs or WebHooks (push notifications, no polling)

- Mobile apps using WebSockets for low-latency broker-push updates

- Applications expecting asynchronous publish-subscribe with broker-driven fanout

- Mainframe applications using IBM MQ

Your current approach likely involves custom protocol gateways, which add code to maintain, extra latency, and operational complexity.

How Solace extends Kafka:

Solace’s event broker provides asynchronous publish-subscribe with broker-push delivery across 20+ protocols and APIs. When events arrive, Solace actively pushes them to interested subscribers—no polling loops required.

Architectural difference:

- Kafka’s pub-sub: Consumers poll → fetch batch → process → poll again (consumer-pull)

- Solace’s pub-sub: Broker pushes → consumer processes immediately (broker-push)

This delivers:

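- Lower latency: Events are pushed as they arrive (sub-millisecond) instead of waiting for the consumer’s next fetch; Kafka delivery latency depends on fetch and batching settings such as `fetch.min.bytes` and `fetch.max.wait.ms`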

- Reduced resource consumption: No empty polls wasting CPU and network

- Natural multi-protocol support: MQTT, AMQP, REST, WebSocket, JMS natively supported

- Simpler client code: Applications receive events via callbacks, not polling loops
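The consumer-pull versus broker-push difference can be sketched with a toy in-memory model. This is only an illustration of the two control flows: `PullBroker` and `PushBroker` are hypothetical classes, not the Kafka or Solace client APIs, and real Kafka clients use long-poll fetches, so empty polls are cheaper in practice than this model suggests.

```python
from collections import deque
from typing import Callable, Deque, List


class PullBroker:
    """Toy consumer-pull broker: events wait until a consumer asks for them."""

    def __init__(self) -> None:
        self._log: Deque[str] = deque()

    def publish(self, event: str) -> None:
        self._log.append(event)

    def poll(self, max_records: int = 10) -> List[str]:
        # Return up to max_records events; an empty list is an "empty poll".
        batch: List[str] = []
        while self._log and len(batch) < max_records:
            batch.append(self._log.popleft())
        return batch


class PushBroker:
    """Toy broker-push broker: publishing invokes subscriber callbacks directly."""

    def __init__(self) -> None:
        self._subscribers: List[Callable[[str], None]] = []

    def subscribe(self, callback: Callable[[str], None]) -> None:
        self._subscribers.append(callback)

    def publish(self, event: str) -> None:
        for callback in self._subscribers:
            callback(event)


# Consumer-pull: the application owns the loop and may poll when nothing is there.
pull_broker = PullBroker()
pulled: List[str] = []
empty_polls = 0
pull_broker.publish("order-1")
for _ in range(3):  # three poll cycles; only the first finds data
    batch = pull_broker.poll()
    if not batch:
        empty_polls += 1
    pulled.extend(batch)

# Broker-push: the application registers a callback and the broker drives delivery.
push_broker = PushBroker()
pushed: List[str] = []
push_broker.subscribe(pushed.append)
push_broker.publish("order-1")

print(pulled, empty_polls, pushed)
```

Both consumers end up with the same event; the difference is who drives delivery and how many cycles return nothing.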

Integration pattern:

Events flow between Kafka’s consumer-pull topics and Solace’s broker-push subscribers without custom translation layers.

Challenge 2: Wide-Area Event Distribution

The Kafka limitation:

Kafka clusters perform optimally within low-latency networks (single data center or cloud region). Multi-region deployments, edge-to-cloud architectures, or global event distribution require additional tools:

- MirrorMaker 2 or Confluent Replicator for cross-region replication

- All events replicated regardless of subscriber interest

- No content-based filtering before WAN transmission

- Complex operational model for active-active deployments

- High cross-region data transfer costs

How Solace extends Kafka:

Solace’s event mesh provides intelligent event routing across geographies:

- Multiple cloud providers (AWS, Azure, Google Cloud)

- Hybrid cloud connecting on-premises data centers and cloud

- Edge locations requiring smart filtering and bandwidth optimization

- Global deployments needing low-latency event delivery across continents

Key difference: Solace brokers form a dynamic mesh that routes events based on subscription interest—only sending events where subscribers exist, dramatically reducing WAN bandwidth and costs.

Integration pattern:

Kafka clusters in multiple regions handle regional stream processing. Solace connects them intelligently:

    Region 1 Kafka  ←  Solace mesh  →  Region 2 Kafka
          ↕                                  ↕
    Region 1 apps                      Region 2 apps
          ↕                                  ↕
    Edge locations                     Edge locations

Events flow to where they’re needed based on subscriptions, not broadcast everywhere.
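To make the contrast between interest-based routing and replicate-everything concrete, here is a minimal sketch. It assumes a simplified hierarchical topic syntax in which `*` matches exactly one level and a trailing `>` matches one or more levels; the topic names, site names, and the `matches` and `route` helpers are all hypothetical, and a real mesh propagates subscriptions between brokers rather than consulting a single dictionary.

```python
from typing import Dict, List


def matches(subscription: str, topic: str) -> bool:
    """Simplified match: '*' = exactly one level, trailing '>' = one or more levels."""
    sub_levels = subscription.split("/")
    topic_levels = topic.split("/")
    for i, sub in enumerate(sub_levels):
        if sub == ">" and i == len(sub_levels) - 1:
            return len(topic_levels) > i  # at least one level must remain
        if i >= len(topic_levels):
            return False
        if sub != "*" and sub != topic_levels[i]:
            return False
    return len(sub_levels) == len(topic_levels)


def route(events: List[str], interest: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Forward each event only to sites holding at least one matching subscription."""
    forwarded: Dict[str, List[str]] = {site: [] for site in interest}
    for topic in events:
        for site, subscriptions in interest.items():
            if any(matches(s, topic) for s in subscriptions):
                forwarded[site].append(topic)
    return forwarded


events = [
    "orders/eu/created",
    "orders/us/created",
    "telemetry/eu/sensor42/temp",
    "telemetry/us/sensor7/temp",
]
interest = {
    "region-2": ["orders/*/created"],   # remote region only wants order events
    "edge-site": ["telemetry/eu/>"],    # edge site only wants EU telemetry
}

forwarded = route(events, interest)
replicate_all = len(events) * len(interest)            # every event to every site
interest_based = sum(len(v) for v in forwarded.values())
print(forwarded)
print(replicate_all, interest_based)
```

In this made-up workload, replicating everything would send 8 event copies over the WAN, while interest-based routing sends 3.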

Challenge 3: Enterprise Integration and Operational Complexity

The Kafka limitation:

Operating Kafka at scale requires:

- Dedicated platform teams with specialized expertise

- Complex monitoring, alerting, and incident response

- Capacity planning for brokers, storage, network

- Security implementation and ACL management

- Schema registry operations

- Multiple systems for different integration needs

How Solace extends Kafka:

Solace provides enterprise-grade event distribution infrastructure that integrates Kafka into broader event-driven architectures:

- Unified event catalog: Discover and govern events across Kafka and non-Kafka systems

- Dynamic routing: Route events based on content, not just preconfigured topics

- Quality of service: Guarantee delivery and ordering across heterogeneous systems

- Event transformation: Enrich or filter events as they flow between systems

- Central monitoring: Observe event flows across Kafka clusters, legacy systems, and cloud services

- Operational separation: Kafka teams focus on stream processing; Solace handles distribution

Integration pattern:

Organizations preserve Kafka investments while simplifying operations:

| High-volume ingestion → | Kafka stream processing → | Solace distribution |
| --- | --- | --- |
| Kafka handles what it does best: massive throughput within clusters | Process, aggregate, transform within clusters | Distribute to hundreds/thousands of consumers across geographies/protocols |

Common Integration Patterns

Pattern 1: Kafka as Stream Processing Core, Solace for Distribution

High-volume events (e.g., clickstream, IoT telemetry) are ingested into Kafka where stream processing applications perform real-time analytics, aggregations, and transformations. Processed results flow to Solace, which distributes them to hundreds or thousands of consuming applications across cloud and on-premises environments using appropriate protocols.

Benefits: Kafka does high-throughput processing; Solace handles complex distribution.

Pattern 2: Solace as Event Gateway, Kafka for Persistence and Analytics

Events arrive at Solace from diverse sources (MQTT devices, AMQP applications, REST APIs). Solace handles protocol translation, filtering, and immediate distribution to low-latency consumers. Simultaneously, events are bridged to Kafka for durable storage, long-term retention, stream processing, and data lake integration.

Benefits: Low-latency push delivery where needed; Kafka for analytics and processing.

Pattern 3: Multi-Region Event Architecture

Kafka clusters run in multiple cloud regions for regional stream processing. Solace’s event mesh connects these regional Kafka clusters, intelligently routing events between regions based on subscriptions, avoiding unnecessary data transfer, and providing disaster recovery failover.

Benefits: Regional Kafka performance; global distribution via Solace.

Pattern 4: Legacy System Integration

Existing applications using JMS, IBM MQ, or other enterprise messaging can’t easily adopt Kafka clients. Solace acts as a translation layer, exposing Kafka topics through enterprise messaging protocols, allowing gradual migration while preserving existing application interfaces.

Benefits: Kafka for new applications; legacy systems work unchanged.

How Solace Connects to Kafka

Solace integrates with Kafka through multiple approaches:

- Kafka source/sink connectors: Bi-directional event flow using Kafka Connect

- Native Kafka client integration: Solace applications produce to and consume from Kafka topics

- REST API bridges: Mapping between Kafka topics and RESTful endpoints

- Streaming data connectors: Purpose-built integrations for specific use cases

Architectural translation: Solace implements Kafka’s consumer-pull protocol to read from topics, then uses broker-push delivery to distribute events to subscribers via their native protocols (MQTT, AMQP, WebSocket, REST, etc.).

Configuration is straightforward—you specify Kafka bootstrap servers, authentication credentials, topic mappings, and transformation rules. Events flow automatically between Kafka’s consumer-pull model and Solace’s broker-push model with exactly-once or at-least-once delivery guarantees based on your requirements.
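As a rough illustration of what such a configuration and topic mapping involve, the sketch below models the settings as a plain data class and maps names in both directions. Everything here is an assumption made for illustration: `BridgeConfig`, its field names, the host name, and the two mapping helpers are hypothetical and do not reflect Solace’s actual configuration schema. The only external facts relied on are Kafka’s topic naming rules (ASCII letters, digits, `.`, `_`, `-`, at most 249 characters).

```python
import re
from dataclasses import dataclass, field
from typing import Dict, Optional

KAFKA_TOPIC_MAX_LEN = 249  # Kafka's limit on topic name length
_ILLEGAL_CHARS = re.compile(r"[^A-Za-z0-9._-]")  # anything Kafka does not allow


@dataclass
class BridgeConfig:
    """Hypothetical bridge settings; field names are illustrative only."""
    bootstrap_servers: str
    username: str
    password: str
    # Kafka topic -> prefix of the hierarchical topic it is republished under.
    topic_mappings: Dict[str, str] = field(default_factory=dict)


def to_hierarchical(config: BridgeConfig, kafka_topic: str,
                    key: Optional[str]) -> Optional[str]:
    """Map a Kafka record (topic + key) to a hierarchical topic, or None if unmapped."""
    prefix = config.topic_mappings.get(kafka_topic)
    if prefix is None:
        return None  # unmapped Kafka topics are not bridged
    return f"{prefix}/{key}" if key else prefix


def to_kafka_topic(hierarchical_topic: str) -> str:
    """Flatten a '/'-delimited topic into a legal Kafka topic name."""
    name = _ILLEGAL_CHARS.sub("_", hierarchical_topic.replace("/", "."))
    if name in ("", ".", "..") or len(name) > KAFKA_TOPIC_MAX_LEN:
        raise ValueError(f"cannot derive a legal Kafka topic name from {hierarchical_topic!r}")
    return name


config = BridgeConfig(
    bootstrap_servers="kafka-1.example.com:9092",  # placeholder host
    username="bridge-user",
    password="change-me",
    topic_mappings={"orders": "acme/orders"},
)

print(to_hierarchical(config, "orders", "eu"))
print(to_hierarchical(config, "payments", "eu"))
print(to_kafka_topic("acme/orders/eu/created"))
```

Using the record key as the last topic level is one possible design choice; it lets push subscribers filter on the key with a wildcard subscription instead of consuming the whole Kafka topic.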

Operational Benefits of the Combined Approach

Combining Kafka and Solace provides:

- Preservation of existing investments: Kafka clusters continue handling what they do best

- Reduced custom integration code: Solace’s protocol support eliminates custom gateways

- Operational separation of concerns: Kafka teams focus on stream processing; event distribution is handled by Solace

- Flexible deployment: Solace runs as a cloud service, software, or an appliance, matching your infrastructure preferences

- Unified monitoring: Single pane of glass for event flows across Kafka and non-Kafka systems

- Gradual adoption: Start with one integration pattern, expand over time

In these extension scenarios, organizations don’t replace Kafka with Solace. They extend Kafka’s capabilities to address broader event-driven architecture requirements, creating a complementary event infrastructure that draws on the strengths of both technologies.

Are You Evaluating Kafka? (Replacing Scenarios)

Before You Commit to Kafka’s Operational Model

Many organizations discover “Kafka” while researching event streaming and assume they need it. Before committing to Kafka’s operational complexity, consider whether your requirements truly need Kafka’s specific strengths or whether a platform providing comparable event streaming with simpler operations better fits your needs.

When Solace Replaces Kafka Entirely

Consider Solace instead of Kafka if:

Your primary needs are:

- Multi-protocol event distribution (MQTT, AMQP, REST, WebSocket, Kafka protocol)

- Broker-push event delivery for low-latency use cases

- Geographic distribution across regions, clouds, or edge locations

- Simpler operational model (smaller team, less specialized expertise required)

- Request-reply patterns alongside event streaming

- Integration with legacy enterprise messaging

Rather than:

- Extreme throughput within a single cluster (tens of millions of events/second)

- Complex stream processing with Kafka Streams (though Solace integrates with processing frameworks)

- Requirement for Kafka-specific ecosystem tools

Solace Capabilities for Event Streaming

Solace provides comprehensive event streaming capabilities that match or exceed Kafka for many use cases:

Event durability and replay:

- Persistent queues and topics

- Event replay from any point in time

- Configurable retention policies

- Guaranteed message delivery
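Replay from a chosen point can be pictured as reading a retained, time-ordered log from a start position. The sketch below is a toy in-memory model, assuming events are appended with non-decreasing timestamps; `ReplayLog` and its methods are hypothetical names and say nothing about how either broker stores events internally.

```python
from bisect import bisect_left
from typing import List


class ReplayLog:
    """Toy retained event log supporting replay by offset or by timestamp."""

    def __init__(self) -> None:
        self._timestamps: List[float] = []
        self._events: List[str] = []

    def append(self, timestamp: float, event: str) -> int:
        """Append an event and return its offset; timestamps must not go backwards."""
        if self._timestamps and timestamp < self._timestamps[-1]:
            raise ValueError("timestamps must be non-decreasing")
        self._timestamps.append(timestamp)
        self._events.append(event)
        return len(self._events) - 1

    def replay_from_offset(self, offset: int) -> List[str]:
        """Return every event at or after the given offset."""
        return self._events[max(offset, 0):]

    def replay_from_time(self, timestamp: float) -> List[str]:
        """Return every event whose timestamp is at or after the given time."""
        start = bisect_left(self._timestamps, timestamp)
        return self._events[start:]


log = ReplayLog()
log.append(100.0, "created")
log.append(105.0, "paid")
log.append(110.0, "shipped")

print(log.replay_from_offset(1))
print(log.replay_from_time(104.0))
print(log.replay_from_time(200.0))
```

The binary search keeps time-based lookup logarithmic in the log size, which is why the sketch requires ordered timestamps.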

High throughput:

- Millions of messages per second per broker

- Horizontal scaling across multiple brokers

- Efficient multicast for fanout scenarios

- Sub-millisecond latency for push delivery

Flexible consumption models:

- Broker-push (broker initiates delivery)

- Consumer-pull (consumer requests batches)

- Request-reply patterns

- Queue and topic semantics

Multi-protocol support (no translation layers needed):

- Kafka protocol (existing Kafka clients work)

- MQTT (IoT devices)

- AMQP 1.0 and 0.9.1 (RabbitMQ compatibility)

- JMS 1.1 and 2.0 (enterprise messaging)

- REST and WebSocket (web/mobile)

- OpenMAMA (financial services)

Geographic distribution:

- Event mesh for intelligent routing across WAN

- Content-based filtering before transmission

- Multi-cloud and hybrid cloud support

- Active-active disaster recovery

Operational simplicity:

- Unified platform (not separate systems for different patterns)

- Comprehensive monitoring and management tools

- Smaller operational footprint than running Kafka together with Kafka Connect, Kafka Streams, and a schema registry

- Managed cloud service (Solace PubSub+ Cloud) or self-hosted software/appliances

Direct Capability Comparison

| Capability | Kafka | Solace |
| --- | --- | --- |
| Throughput | Tens of millions of events/sec per cluster | Millions of messages/sec per broker, with horizontal scaling |
| Latency | Typically milliseconds; grows with batching settings such as `linger.ms` and `fetch.min.bytes` (consumer-pull) | Sub-millisecond (broker-push delivery) |
| Protocols | Kafka binary protocol (consumer-pull) | 20+ protocols (broker-push and consumer-pull) |
| Event replay | Yes (offset reset) | Yes (replay from any point) |
| Geographic distribution | Via MirrorMaker 2 or Confluent Replicator | Native event mesh |
| Operational complexity | High (dedicated teams) | Lower (unified platform) |
| Request-reply | Custom implementation | Native support |
| Client types | Kafka-specific libraries | Any supported protocol client |
| Stream processing | Kafka Streams, Flink | Integrates with processing frameworks |
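To show what the request-reply row means in practice, here is a minimal sketch of the pattern layered on plain publish-subscribe, which is roughly the plumbing an application has to write itself when the platform does not provide it. The `Bus` class, topic names, and message fields are hypothetical, and the bus delivers synchronously so the example stays self-contained; a real implementation would wait for the reply with a timeout.

```python
import uuid
from typing import Callable, Dict, List

Message = Dict[str, str]


class Bus:
    """Toy synchronous pub-sub bus used only to illustrate the pattern."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[Message], None]]] = {}

    def subscribe(self, topic: str, handler: Callable[[Message], None]) -> None:
        self._handlers.setdefault(topic, []).append(handler)

    def publish(self, topic: str, message: Message) -> None:
        for handler in list(self._handlers.get(topic, [])):
            handler(message)


def request(bus: Bus, topic: str, payload: str) -> str:
    """Send a request and return the reply matched by correlation ID."""
    correlation_id = uuid.uuid4().hex
    reply_to = f"replies/{correlation_id}"  # private reply topic for this request
    replies: List[str] = []

    def on_reply(message: Message) -> None:
        if message["correlation_id"] == correlation_id:
            replies.append(message["payload"])

    bus.subscribe(reply_to, on_reply)
    bus.publish(topic, {
        "payload": payload,
        "reply_to": reply_to,
        "correlation_id": correlation_id,
    })
    if not replies:  # the toy bus is synchronous, so a reply is already here or never
        raise TimeoutError("no reply received")
    return replies[0]


bus = Bus()


def price_service(message: Message) -> None:
    """Responder: answers on the topic named in the request's reply_to field."""
    bus.publish(message["reply_to"], {
        "payload": f"price for {message['payload']}: 42",
        "correlation_id": message["correlation_id"],
    })


bus.subscribe("pricing/quote", price_service)
answer = request(bus, "pricing/quote", "SKU-1")
print(answer)
```

The reply topic and correlation ID are the two pieces a requester and responder must agree on; native request-reply support moves that agreement into the messaging API.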

When to Start with Solace

Greenfield projects:

- Building new event-driven architectures

- Want operational simplicity from day one

- Need multi-protocol support immediately

- Plan for geographic distribution

Modernization initiatives:

- Replacing legacy enterprise messaging (IBM MQ, TIBCO, etc.)

- Consolidating disparate integration technologies

- Moving to cloud-native event architectures

- Reducing operational complexity

IoT and edge computing:

- MQTT-native support required

- Push delivery to resource-constrained devices

- WAN optimization essential

- Edge-to-cloud architectures

Hybrid and multi-cloud:

- Events flow across clouds and on-premises

- Active-active disaster recovery across regions

- Avoid vendor lock-in to specific cloud event services

Starting with Solace, Adding Kafka Later (If Needed)

Future-proof approach:

- Begin with Solace’s simpler operations and broader protocol support

- Build event-driven architecture across your organization

- Add Kafka later for specialized stream processing at massive scale (if requirements emerge)

- Your Solace infrastructure stays relevant—it integrates with Kafka when needed

This approach avoids premature optimization (adopting Kafka’s complexity before you need it) while keeping options open.

Making the Decision: Extend or Replace?

If You’re Already Using Kafka

Extend with Solace when you need:

- Multi-protocol support without custom gateways

- Broker-push delivery for low-latency use cases

- Geographic distribution beyond what MirrorMaker provides

- Simplified operations for event distribution

- To preserve existing Kafka investments

Solace complements Kafka, each handling what it does best.

If You’re Evaluating Kafka

Consider Solace instead if:

- Operational simplicity is important (smaller teams, less specialized expertise)

- Multi-protocol support is a primary requirement

- Geographic distribution is essential from day one

- Broker-push delivery matters for your use cases

- You want a unified platform for multiple event patterns

Stick with Kafka if:

- You need extreme throughput (tens of millions of events/second in a single cluster)

- You have dedicated Kafka platform teams

- Your applications are homogeneous (all can adopt Kafka clients)

- Your architecture is contained within regions

- Consumer-pull batch processing fits all your use cases

Hybrid Approaches

Many organizations adopt both:

- Solace as the primary event distribution platform

- Kafka for specialized high-volume stream processing when needed

- Best of both worlds: simplicity where possible, specialized tools where necessary

See Solace in Action

Whether you’re extending an existing Kafka deployment or exploring alternatives, Solace provides enterprise-grade event streaming with broader capabilities and simpler operations.

Book a demo to see how Solace:

- Integrates with your existing Kafka infrastructure (if applicable)

- Provides multi-protocol event distribution with push-based delivery

- Simplifies event routing across geographies and clouds

- Reduces operational complexity compared to maintaining multiple specialized systems

[Diagram: Kafka + Solace integration architecture. A Kafka cluster handling high-volume stream processing sits at the center; the Solace event mesh surrounds it and connects to diverse consumers: IoT devices via MQTT, legacy apps via JMS/AMQP, cloud services via REST, mobile via WebSocket, and Kafka clusters in other regions. Event flow between Kafka and Solace is bi-directional, with protocol translation and WAN optimization labeled.]

Key Takeaways

For Existing Kafka Users:

- Kafka excels at high-throughput stream processing within clusters

- Solace extends Kafka with broker-push delivery, multi-protocol support, and WAN distribution

- Common patterns preserve Kafka investments while addressing broader requirements

- Organizations use both technologies for complementary strengths

For Kafka Evaluators:

- Consider whether you need Kafka’s specific capabilities and can support its operational complexity

- Solace provides comparable event streaming with broader protocol support and simpler operations

- Many use cases don’t require Kafka’s extreme throughput

- Starting with Solace keeps options open for adding Kafka later if needed

For Everyone:

- Architectural decisions should match organizational capabilities and requirements

- No single technology solves all event streaming needs

- Thoughtful evaluation of volume, geography, protocols, and team expertise drives better outcomes