Introduction

For SAP-powered organizations, complexity comes with the territory. Your business-critical systems likely stretch across multiple landscapes: S/4HANA in the cloud, ECC still running on-premises, maybe SuccessFactors for HR, Ariba for procurement, and a growing list of non-SAP applications that handle everything from logistics to customer engagement.

This patchwork of systems is powerful, but it also creates friction. When information moves slowly – whether through nightly batch jobs, scheduled data extracts, or custom-built integrations – it can leave you reacting to yesterday’s events instead of responding to what’s happening right now. The result? Missed opportunities, stale insights, and teams that are constantly playing catch-up.

To meet modern expectations, organizations need to act in real time. Whether it’s a change in inventory, a new customer order, or an alert from a connected device, information must move as quickly as the business itself. The systems that generate and process this data – ERP, CRM, manufacturing, analytics, AI agents – need to exchange it instantly and intelligently. That’s what it means to become event-driven.

At its core, an event is simply a change: a purchase order approved, a shipment delayed, a meter reading updated. Event-driven architecture (EDA) is an approach to building systems around these changes. Rather than waiting for someone – or something – to ask for information, systems broadcast what’s happened the moment it occurs. Other systems that care about that event can immediately respond, triggering follow-on processes, analytics, or decisions.

For SAP customers, this shift represents more than just a new integration pattern. It’s a new way of thinking about the flow of data across your enterprise. Becoming event-driven means designing your business processes – and your technology architecture – to operate on live information rather than static records.

It’s not a single project; it’s a journey. And like any journey, it begins with a mindset: thinking in terms of events instead of transactions. This guide introduces a practical, proven approach to help SAP-centric organizations start that journey, gain alignment across teams, and build a foundation for real-time responsiveness. With SAP Integration Suite, advanced event mesh (AEM) – powered by Solace – organizations can extend this responsiveness across SAP and non-SAP systems.

How to Become Event-Driven

Event-driven architecture isn’t new. Stock exchanges, airlines, and telecom networks have relied on it for years to process millions of transactions in fractions of a second. What is new is that event-driven thinking has moved from niche to necessary – especially for organizations running SAP.

Today’s SAP landscapes don’t live in a single place or moment. Business processes stretch across clouds, data centers, and time zones. Finance runs on S/4HANA, manufacturing depends on connected devices, and customer data flows in from CRM systems, e-commerce platforms, and AI-driven analytics. In this environment, batch updates and nightly synchronization jobs simply can’t keep up.

Legacy integration approaches – message queues, service buses, scheduled ETL pipelines – were designed for predictability, not speed. They move data when it’s convenient, not when it’s needed. That’s fine for static systems, but modern business runs on motion. AI models, digital twins, and supply-chain optimizations all depend on knowing what’s happening right now.

That’s where becoming event-driven begins: by seeing your business as a living network of events. Every order created, invoice approved, or sensor reading captured is an event – a piece of information announcing that something has changed. Instead of storing those changes and waiting for another system to ask for them, you broadcast them the moment they occur.

In an event-driven world, your applications, microservices, and AI agents don’t poll or request data – they listen. They subscribe to the events that matter to them and act the instant something relevant happens. The choreography of business logic emerges naturally: when a sales order closes, finance is notified automatically; when stock levels drop, procurement responds without being told.

This isn’t about replacing everything you already have. It’s about introducing a new way for your systems to communicate – one that’s more fluid, scalable, and adaptable to change. Adding a new application or service no longer means rewriting integrations or breaking old ones; it simply plugs into the event stream and starts contributing.

So how do you get there? What does it take to move from traditional integration to a truly event-driven enterprise?

That’s where strategy comes in.

High-Level Strategic Approach

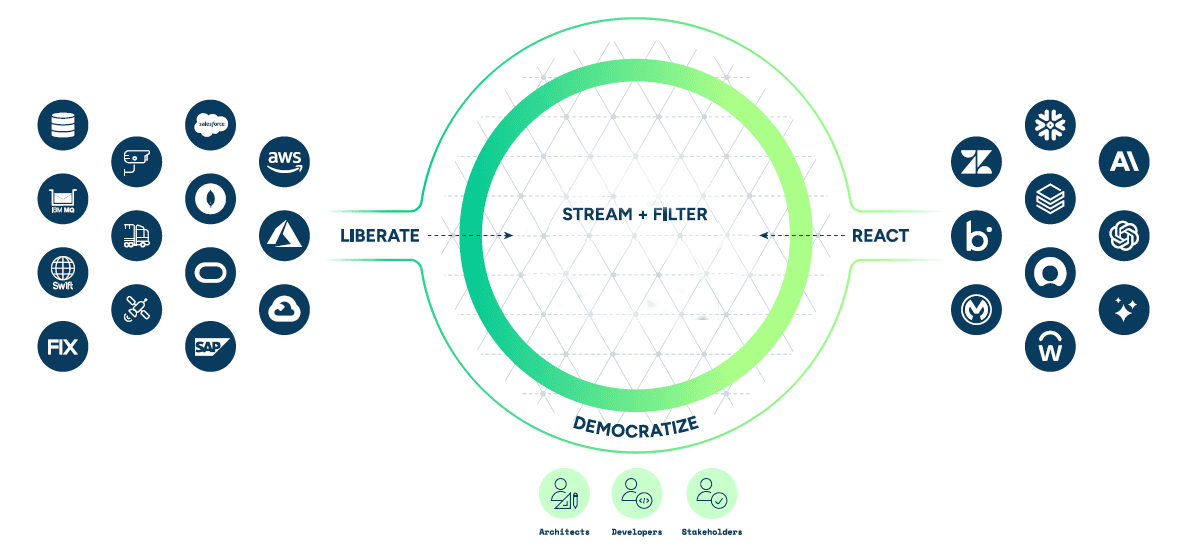

For organizations that rely on SAP, becoming event-driven isn’t about adopting a single technology – it’s about shifting how information moves through the business. Traditional SAP environments have long depended on structured, predictable data exchanges: nightly jobs, scheduled integrations, and carefully managed dependencies. But real-time responsiveness requires a new mindset – one where data isn’t just shared but set free. With SAP Integration Suite, advanced event mesh (powered by Solace), organizations can extend this responsiveness across SAP and non-SAP systems.

At a high level, there are four key stages in that transformation:

1. Liberate

The first step is breaking data out of its silos. In most enterprises, critical information is locked inside core systems – from ERP and CRM to manufacturing control systems and IoT sensors. When that data can be published as events, it’s no longer confined to a single process or application. It can move freely across the organization in real time, ensuring that the moment something changes – a customer places an order, a shipment is delayed, a machine shows signs of wear – that information is immediately available to anyone or anything that needs to act on it.

2. Stream & Filter

Once data is liberated, it needs to flow intelligently. Event streams act as the nervous system of a business: constantly transmitting signals between systems, services, and teams. Intelligent routing ensures each part of the organization receives the right information at the right time – not an overwhelming flood of everything. This shift from request-driven to subscription-driven communication creates a flexible architecture where systems can adapt and scale as business needs evolve.

3. React

With information moving in real time, the next step is to empower systems to respond automatically. Whether it’s analytics software updating dashboards, AI models detecting anomalies, or business workflows triggering follow-up actions, the goal is to close the gap between knowing and doing. In an event-driven enterprise, processes no longer wait for humans to check reports or run jobs – they respond to change as it happens.

4. Democratize

Finally, real-time data shouldn’t live only in the domain of architects and developers. When event streams are cataloged, searchable, and easy to access, business teams can discover and use them too – powering analytics, AI models, and decision-making without bottlenecks. This democratization of live data turns real-time responsiveness from an IT initiative into a company-wide capability. With AEM, organizations can extend this responsiveness across SAP and non-SAP systems.

Practical Implementation Process

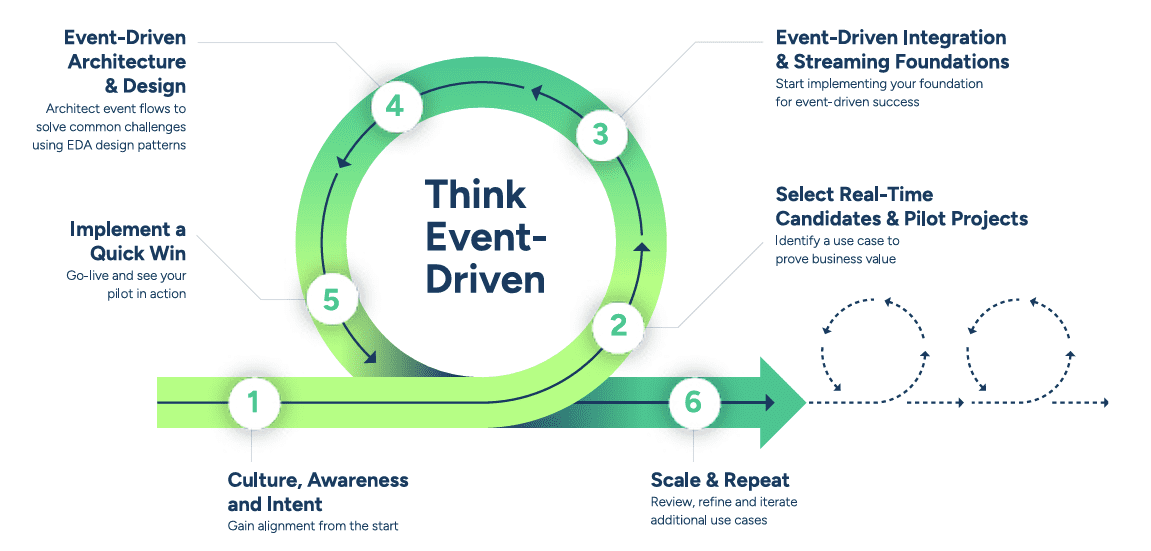

So how do you put all of this into practice?

Becoming event-driven isn’t a switch you flip – it’s a journey that touches people, processes, and technology. The good news is that there’s a clear path forward. Over the past several years, organizations across industries – including many with complex SAP landscapes – have followed a set of repeatable steps that make the shift to event-driven architecture faster, smoother, and far less risky.

These six steps form the foundation of the Think Event-Driven approach: a practical method used by leading enterprises to build, scale, and grow event-driven systems with confidence.

The rest of this paper breaks down each step, explaining what it involves, why it matters, and how you can apply it in your own environment.

Step 1: Gain Alignment (SAP-Aware Version)

Every event-driven journey starts with people.

Before your systems can start speaking in events, your teams need to start thinking in them. For SAP-centric organizations, this means helping long-time ERP, integration, and business process experts adopt a new way of connecting systems and surfacing data. Event-driven architecture (EDA) often begins as a small, focused initiative – perhaps tied to a single S/4HANA process – but its success depends on early alignment across IT, architecture, and business leadership.

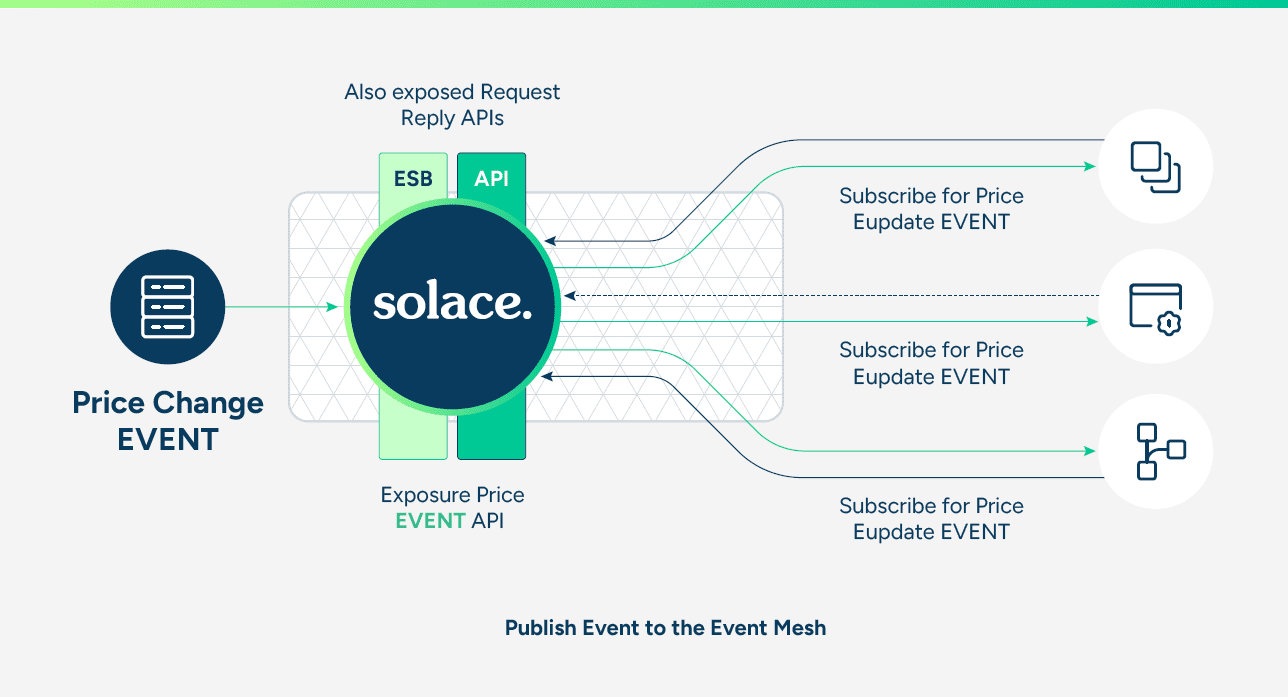

For example, your ESB or PI/PO team will need to start thinking in terms of choreography rather than orchestration. Your API management team should begin to explore event-driven APIs rather than request/reply patterns. And business stakeholders who depend on SAP data need to understand that instead of waiting for nightly updates or manual refreshes, EDA allows their processes to react the instant something changes – like a customer order, material movement, or supplier update.

Most people in IT were trained to think procedurally. Whether you started out coding in ABAP or building workflows in SAP Cloud Integration, much of that work has traditionally centered on function calls or synchronous APIs. Event-driven design introduces a small but important cultural shift: rather than calling other systems directly, each application, service, or AI agent listens for the events it cares about and responds automatically.

As an architect, once you know which SAP and non-SAP applications consume and produce which events, you can choreograph their interactions through a distributed network of event brokers – what’s known as an event mesh.

Take time to build understanding and support. Read up on event-driven design, host knowledge sessions, and connect the concepts to familiar SAP processes – like how “business events” in S/4HANA can now be streamed across the enterprise. The goal isn’t to push a toolset yet, but to foster an event-first mindset that sees agility, responsiveness, and better customer experiences as the outcome.

Once you’ve laid that foundation, you’re ready to look at where real-time thinking can deliver the biggest impact.

Step 2: Identify Candidates for Real-Time (SAP-Aware Version)

Not every system needs to be real-time, and not every SAP process can be made event-driven overnight. But many will benefit from it – especially those that cross boundaries between SAP and other platforms.

Start by mapping your processes and integrations. You’ve probably already spotted pain points where batch jobs, IDocs, or ETL processes slow down your operations. Maybe it’s an order management flow where sales orders created in S/4HANA take hours to appear in your CRM. Or a supply chain process where updates from your manufacturing system lag behind reality. Or perhaps it’s your AI models that depend on stale data rather than live events.

When choosing where to begin, look for projects that do three things:

- Alleviate pain points. Target integrations that are brittle or slow – like file transfers between SAP and non-SAP systems, or manual reconciliation between finance and operations data.

- Deliver visible business impact. Aim for scenarios leadership understands: improving the customer experience, reducing delays in order-to-cash, or enabling real-time inventory visibility.

- Create momentum with a quick win. Focus on a project that can be delivered fast and show tangible results – like streaming product master data from S/4HANA to downstream systems, or instantly publishing equipment status events from SAP Plant Maintenance to a dashboard or analytics platform.

SAP offers a rich ecosystem of event sources – from S/4HANA’s Event Enablement Framework to SAP BTP extensions – making it easier to start small but meaningful. The key is to find one scenario that demonstrates what’s possible when systems stop polling for updates and start reacting to them.

As you identify candidates, include the right stakeholders. Not all of them will be technical – many events originate in the business itself. Bringing both business and IT teams together early helps ensure your event-driven initiative aligns with real priorities and gains lasting support.

Step 3: Build Your Foundation (SAP-Aware Version)

Once you’ve identified your first real-time project, it’s time to think about what will make it work – and scale.

For organizations running SAP, this step often means complementing your Integration Suite or BTP strategy with the runtime and design-time elements that make event-driven communication possible. The goal isn’t to replace your existing architecture, but to extend it – so SAP systems, cloud apps, and data services can all participate in the same event flow.

Event-driven architecture isn’t just about moving data faster. It’s a structural shift in how your SAP and non-SAP systems interact. That shift depends on decomposing large, tightly coupled business processes into smaller, independent components – microservices, extensions, and increasingly, AI agents – that communicate through events rather than direct calls.

To make that work, you need a runtime fabric that allows these parts to publish and subscribe to events reliably, securely, and in real time – across hybrid environments. Think of it as the nervous system that connects your digital core (S/4HANA, SuccessFactors, Ariba) with everything else that powers your business.

Just as important is your design-time visibility: the ability to describe, document, and visualize your events. In SAP terms, that might mean cataloging which S/4HANA business events exist, what payloads they carry, and which external systems consume them. Without that shared understanding, event-driven systems can become just as opaque as the point-to-point integrations they were meant to replace.

Your architecture also needs to be hybrid by design. Real-time responsiveness doesn’t come from putting everything in one place; it comes from connecting everything – whether it’s running in an SAP data center, on SAP BTP, or in other clouds.

This is where an event-driven integration platform becomes essential. Even at its simplest, it allows your SAP applications, cloud extensions, and AI agents to communicate through events rather than synchronous REST calls or batch integrations. Starting with this foundation ensures your first project doesn’t just solve one problem – it becomes the cornerstone of a scalable, connected, event-driven enterprise. AEM acts as a foundation for this, providing dynamic event routing and simplified lifecycle management.

The core components of that foundation include:

- Micro-Integrations

- Event Broker

- Event Mesh

- Event Portal

- Topic Taxonomy

Each plays a key role in turning event-driven theory into practical, working architecture.

Micro-Integrations

A key enabler of your event-driven foundation is the use of micro-integrations – lightweight, purpose-built components that do more than just connect systems.

In SAP landscapes, micro-integrations are the glue that binds S/4HANA and BTP to the broader enterprise ecosystem. They can enrich events generated by SAP’s event enablement framework, convert legacy formats like IDocs into modern payloads, or adapt cloud-native data into formats that SAP applications understand.

Unlike traditional connectors or middleware flows, micro-integrations perform logic and transformation in motion. They can run inside SAP Integration Suite as low-code integration flows, be deployed as custom extensions on SAP BTP, or even exist as serverless functions in the public cloud.

Whether enriching events before publishing them to downstream systems or adapting legacy data for use in analytics or AI, micro-integrations help unify your enterprise into a truly event-native SAP ecosystem – where every business change can trigger the next intelligent action.
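As a rough illustration of what a micro-integration might do, the sketch below flattens a simplified, IDoc-like order into a lean event payload and enriches it with a region. The segment and field names (`HEADER`, `ITEMS`, `DOCNUM`, and so on), the `REGION_BY_CUSTOMER` lookup, and the output shape are all assumptions made for this example; they are not a real IDoc type or an SAP API.

```python
from typing import Dict, List

# Hypothetical lookup used for enrichment; in practice this might be a master-data call.
REGION_BY_CUSTOMER: Dict[str, str] = {"C-1001": "sea", "C-2002": "emea"}


def idoc_to_event(idoc: dict) -> dict:
    """Flatten a simplified, IDoc-like order into a lean JSON-style event payload."""
    header = idoc["HEADER"]
    items: List[dict] = [
        # Legacy formats often carry numbers as padded strings; normalize them here.
        {"productId": seg["MATNR"], "quantity": int(float(seg["MENGE"]))}
        for seg in idoc.get("ITEMS", [])
    ]
    customer_id = header["KUNNR"]
    return {
        "orderId": header["DOCNUM"].lstrip("0"),
        "customerId": customer_id,
        "currency": header["WAERS"],
        "items": items,
        # Enrichment step: add context the source document did not carry.
        "region": REGION_BY_CUSTOMER.get(customer_id, "unknown"),
    }


legacy_order = {
    "HEADER": {"DOCNUM": "0000004711", "KUNNR": "C-1001", "WAERS": "USD"},
    "ITEMS": [{"MATNR": "BAR-01", "MENGE": "12.000"}],
}
event = idoc_to_event(legacy_order)
```

The transformation and the enrichment happen in one small, single-purpose function, so it could plausibly run as an integration flow step, a BTP extension, or a serverless function.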

Event Broker

At the heart of every event-driven architecture is the event broker – the runtime engine that makes publish/subscribe communication possible.

Think of it as a digital switchboard for your enterprise: every application, AI agent, and microservice can publish information about what just happened, while others subscribe to the events they care about. The event broker ensures those messages move quickly, reliably, and securely – without systems having to know anything about each other.

In an SAP environment, the event broker becomes the connective layer between your S/4HANA system, your SAP BTP extensions, and all the surrounding applications that depend on SAP data. It’s what allows a “sales order created” event from S/4HANA to instantly reach a CRM system, trigger a workflow in SAP Build Process Automation, or feed into an AI model – all without manual intervention or polling for updates.

Each event is published to the broker under a defined topic, following a topic hierarchy (sometimes called a taxonomy). That structure makes it easy for subscribing systems – whether they’re SAP applications, cloud services, or analytics tools – to filter and consume only what’s relevant.
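To make the publish/subscribe idea concrete, here is a minimal in-process sketch. The `InMemoryBroker` class is hypothetical and matches topics exactly; a real event broker adds wildcards, persistence, security, and network transport, none of which are modeled here.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[str, dict], None]


class InMemoryBroker:
    """Toy publish/subscribe broker: exact-match topics, in-process delivery."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: dict) -> int:
        """Deliver the event to every subscriber of the topic; return the count."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(topic, payload)
        return len(handlers)


broker = InMemoryBroker()
crm_inbox: List[dict] = []
workflow_inbox: List[dict] = []

# Two independent consumers; the publisher knows nothing about either.
broker.subscribe("sales/order/created", lambda t, p: crm_inbox.append(p))
broker.subscribe("sales/order/created", lambda t, p: workflow_inbox.append(p))

delivered = broker.publish("sales/order/created", {"orderId": "4711"})
ignored = broker.publish("inventory/item/updated", {"itemId": "A-1"})
```

The publisher only names a topic. Adding a third consumer would mean one more `subscribe` call and no change to the publishing side.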

The best event brokers are built on open protocols and APIs. Just as TCP/IP gave organizations the freedom to choose their networking equipment, open standards for eventing (such as MQTT, AMQP, or REST-based APIs) ensure long-term flexibility and interoperability across your landscape. This openness is especially valuable for SAP customers running hybrid architectures – some on-premises, some on SAP BTP, and others in multiple clouds.

By using open standards and well-documented APIs, teams can extend event-driven integrations easily, draw on community innovation, and avoid being tied to any single vendor or technology stack. It’s what makes the event broker not just a piece of middleware, but the foundation of a flexible, future-proof integration strategy.

Event Mesh

An event mesh is the natural next step after introducing an event broker. It’s what happens when you connect multiple brokers together into a distributed network that can move events seamlessly across your enterprise – no matter where your applications, systems, or data reside.

Just as the internet works because routers share routing tables, an event mesh works because brokers share topic subscriptions and route events dynamically. The result is a kind of “digital nervous system” that carries information from any source to any destination, automatically optimizing how those events travel.

Most organizations running SAP have already discovered that their applications are everywhere: some in on-premises data centers, others in SAP BTP, and still more in multiple clouds or edge environments like factories, branch offices, or retail stores. In such a world, relying on point-to-point integrations or manual data transfers just doesn’t scale.

With an event mesh in place, geography and deployment models stop mattering. A “goods issue” event generated in an on-premises S/4HANA system can be instantly consumed by a cloud-based analytics engine, a logistics partner, or an AI forecasting model. Each system simply connects to its local event broker – just as you connect to your home Wi-Fi router to access any website on the internet – while the mesh ensures every subscriber receives the events they care about, no matter where those events originate.
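The idea of brokers sharing subscriptions can be sketched in a few lines. The `MeshBroker` class below is a hypothetical toy: it assumes a fully linked mesh, forwards each event at most one hop, and matches topics exactly. Real mesh routing is considerably more involved.

```python
from typing import Callable, Dict, List, Set

Handler = Callable[[dict], None]


class MeshBroker:
    """Toy broker that shares subscription interest with its peers (full mesh, one hop)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._local: Dict[str, List[Handler]] = {}
        self._peers: List["MeshBroker"] = []
        # topic -> names of peer brokers that have local subscribers for it
        self._remote_interest: Dict[str, Set[str]] = {}

    def link(self, other: "MeshBroker") -> None:
        """Connect two brokers in both directions (link before subscribing)."""
        self._peers.append(other)
        other._peers.append(self)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._local.setdefault(topic, []).append(handler)
        # Advertise the subscription so peers know to forward matching events here.
        for peer in self._peers:
            peer._remote_interest.setdefault(topic, set()).add(self.name)

    def publish(self, topic: str, event: dict) -> None:
        self._deliver_local(topic, event)
        for peer in self._peers:
            if peer.name in self._remote_interest.get(topic, set()):
                peer._deliver_local(topic, event)  # forwarded once, never re-forwarded

    def _deliver_local(self, topic: str, event: dict) -> None:
        for handler in self._local.get(topic, []):
            handler(event)


on_prem = MeshBroker("on-prem")
cloud = MeshBroker("cloud")
on_prem.link(cloud)

analytics_inbox: List[dict] = []
cloud.subscribe("logistics/goodsissue/posted", analytics_inbox.append)

# Published on-premises, consumed in the cloud; the publisher only talks to its local broker.
on_prem.publish("logistics/goodsissue/posted", {"materialId": "M-100", "quantity": 5})
on_prem.publish("finance/invoice/approved", {"invoiceId": "INV-1"})  # no interest, stays local
```

Events cross the link only when a remote subscriber has declared interest, which is what keeps a mesh from flooding every site with every event.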

There are many ways an event mesh strengthens your SAP landscape:

- Connects and choreographs microservices across SAP and non-SAP domains using publish/subscribe, topic filtering, and guaranteed delivery.

- Bridges hybrid environments by securely pushing events between on-premises SAP systems and cloud-based applications.

- Accelerates IoT and edge scenarios, enabling real-time visibility from connected devices or manufacturing systems into S/4HANA and analytics platforms.

- Supports Data-as-a-Service (DaaS) initiatives by streaming live operational data across lines of business for analytics, machine learning, and AI-driven insights.

- Improves responsiveness by enabling business processes to react immediately to changes instead of waiting for batch updates.

Over time, your event mesh becomes the connective tissue that makes your SAP ecosystem truly real-time – bridging the gap between systems of record and systems of innovation.

How you deploy that mesh – across data centers, clouds, or edge locations – will influence the kind of event broker you choose. But no matter how it’s implemented, an event mesh turns your distributed enterprise into a unified, event-aware network capable of responding to change the moment it happens.

Event Portal

Just as an API portal gives you visibility into your web services, an event portal provides the same for your event-driven ecosystem. It’s both a design-time and runtime view into your event mesh – a single pane of glass where architects, developers, and business analysts can define, discover, and manage the flow of events across your enterprise.

An event portal provides a governed, visual environment where you can model the events your systems produce and consume. Architects can design events, define schemas, and establish relationships between them – all through an intuitive interface. Once events are modeled, you can easily link them to the microservices, applications, or AI agents that use them.

For SAP customers, this governance layer is critical. As your landscape grows to include SAP S/4HANA, SAP BTP applications, and extensions built through SAP Integration Suite or CAP (Cloud Application Programming Model), maintaining a clear view of what events exist – and who owns them – becomes essential. An event portal helps prevent duplication, supports compliance and lifecycle management, and provides a foundation for self-service discovery across teams.

Over time, your event definitions are stored in an event catalog, creating a searchable library of reusable assets. Developers and integrators can browse this catalog to find existing events – say, “sales order created” or “goods receipt posted” – and subscribe to them instead of rebuilding the wheel.
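A catalog does not have to start life as a product; even a small data structure captures the essentials. The sketch below is a hypothetical minimal catalog, and the `EventDefinition` fields, team names, and topics are assumptions for illustration. It shows registration, duplicate prevention, and keyword search.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class EventDefinition:
    name: str
    topic: str
    owner: str
    schema_fields: List[str]
    consumers: List[str] = field(default_factory=list)


class EventCatalog:
    """Minimal searchable registry of event definitions."""

    def __init__(self) -> None:
        self._events: Dict[str, EventDefinition] = {}

    def register(self, definition: EventDefinition) -> None:
        if definition.name in self._events:
            # Refuse duplicates so teams reuse the existing event instead.
            raise ValueError(f"event already cataloged: {definition.name}")
        self._events[definition.name] = definition

    def search(self, keyword: str) -> List[EventDefinition]:
        needle = keyword.lower()
        return [e for e in self._events.values()
                if needle in e.name.lower() or needle in e.topic.lower()]

    def add_consumer(self, name: str, consumer: str) -> None:
        self._events[name].consumers.append(consumer)


catalog = EventCatalog()
catalog.register(EventDefinition(
    "Sales Order Created", "sales/order/created", "sales-team", ["orderId", "customerId"]))
catalog.register(EventDefinition(
    "Goods Receipt Posted", "logistics/goodsreceipt/posted", "logistics-team",
    ["materialId", "quantity"]))

matches = catalog.search("order")
catalog.add_consumer("Sales Order Created", "crm-sync")

try:
    catalog.register(EventDefinition("Sales Order Created", "sales/order/new", "other-team", []))
    duplicate_rejected = False
except ValueError:
    duplicate_rejected = True
```

Recording owners and consumers alongside each definition is what turns a list of topics into something a team can govern.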

A mature event portal can also automate parts of your deployment pipeline, such as pushing configuration details to event brokers or promoting applications across environments (development, test, production). This level of visibility and automation not only accelerates project delivery but also ensures consistency across your SAP and non-SAP systems.

In short, an event portal gives you the blueprint for your real-time enterprise – a living map of how data moves through your business, ready for everyone to use and build upon.

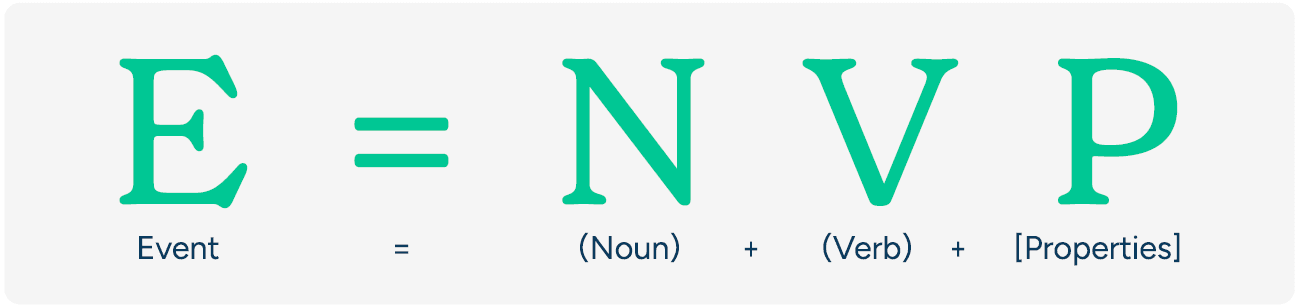

Topic Taxonomy

If the event broker is the circulatory system of your enterprise, then topic taxonomy is its language – the set of rules that ensures every signal gets to the right place.

Each event in an event-driven architecture carries a topic, a descriptive label that tells the broker what kind of event it is and who might care about it. Topics typically follow a hierarchy, such as sales/order/created or inventory/item/updated, allowing subscribers to listen for exactly what they need. Wildcards make it possible to capture broader patterns, like subscribing to sales/# to receive all sales-related events. (The # multi-level wildcard is MQTT syntax; the order examples later in this section use > for the same purpose.)

For SAP landscapes, establishing a governed topic naming convention early on is one of the most important design decisions you’ll make. It should align with your SAP business domains and event sources – for example, topics that map to S/4HANA modules (like FI, MM, or SD) or to business objects (like sales orders, materials, or vendors). A clear and consistent taxonomy ensures interoperability between SAP and non-SAP systems, making it easier to route events across hybrid architectures.

You can think of topics as metadata that tells a story. Just as a well-formed sentence in English has a subject, verb, and context, a well-modeled event follows the formula E = NVP (Event = Noun + Verb + Properties). For example, salesorder/created/region = NA is a simple, human-readable way to convey exactly what happened, where, and about what object.

A solid taxonomy helps avoid chaos as your event ecosystem grows. It keeps your brokers efficient, your subscriptions clean, and your developers confident that the event they’re publishing or subscribing to means exactly what they think it does.

- Noun identifies the subject – it defines what the event is about. This is usually the core business entity, such as an order, payment, customer, flight, or shipment. The noun anchors the event in the domain language of your business and directly informs topic naming. Choosing clear, consistent nouns makes it easier for teams to filter, route, and reason about events across systems.

- Verb captures the action – what happened to the entity. Common verbs include created, updated, canceled, or shipped. Verbs define the business event and often correlate to transitions in a process or state machine. Selecting precise verbs helps align event flows with real-world processes and supports choreography across systems.

- Properties are the payload – the structured data that adds full context to the event. These include identifiers, timestamps, descriptions, and any business-relevant fields that consumers need to act on the event. Properties should be lean but informative: enough to enable downstream action without overwhelming consumers or tightly coupling them to internal schemas.

Following this simple formula will give your events a clear structure that makes them easier to understand, route, and reuse. Not only does this make them more intuitive, it makes it easier to enforce consistent topic taxonomy. For example:

| Event Name | Noun | Verb | Properties |
|---|---|---|---|
| order.created | order | created | orderId, items, customerId, timestamp |
| payment.failed | payment | failed | paymentId, reasonCode, orderId |
| product.updated | product | updated | productId, price, category |

When naming your events and structuring payloads, this model helps align developers and business stakeholders alike. It also supports smarter topic naming and filtering across your event mesh.
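The E = NVP formula maps naturally onto a small data type. The following is a sketch only: the `BusinessEvent` class, its `topic` helper, and the sample values are hypothetical names chosen for this example rather than part of any SAP or broker API.

```python
from dataclasses import dataclass, field
from typing import Any, Dict


@dataclass(frozen=True)
class BusinessEvent:
    noun: str                      # what the event is about, e.g. "payment"
    verb: str                      # what happened to it, e.g. "failed"
    properties: Dict[str, Any] = field(default_factory=dict)  # the payload

    @property
    def name(self) -> str:
        """Dotted event name, as used in the table of examples."""
        return f"{self.noun}.{self.verb}"

    def topic(self, *levels: str) -> str:
        """Build a hierarchical topic: noun/verb plus optional routing levels."""
        return "/".join([self.noun, self.verb, *levels])


event = BusinessEvent(
    noun="payment",
    verb="failed",
    properties={"paymentId": "P-9", "reasonCode": "INSUFFICIENT_FUNDS", "orderId": "4711"},
)
event_name = event.name
event_topic = event.topic("emea")
```

Deriving both the name and the topic from the same noun and verb means the two cannot drift apart, which is one simple way to keep a taxonomy consistent.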

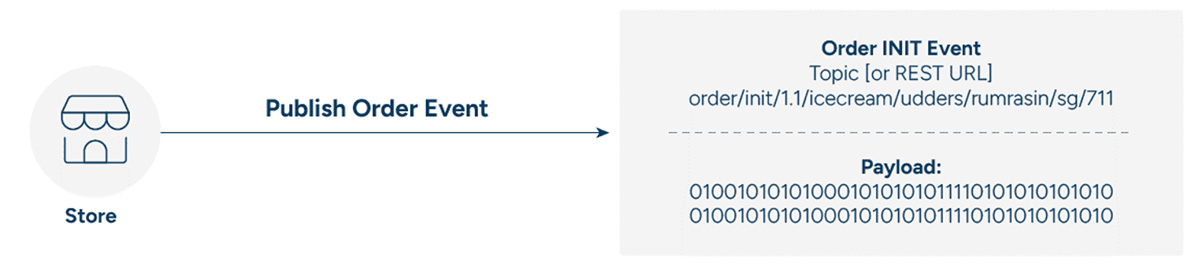

Let’s say a CPG company receives an order for a specific product – like a new SKU of energy bars – via a regional e-commerce partner in Southeast Asia. The company has structured its event topics to reflect key metadata like event type, product category, brand, region, and channel.

| Event | Topic |
|---|---|
| New Order | order/init/1.1/snacks/energybars/sea/lazada |
| Validated Order | order/valid/1.1/snacks/energybars/sea/lazada |
| Order Shipment | order/shipped/1.1/snacks/energybars/sea/lazada |

Using these topic structures:

- Order validation services subscribe to order/init/1.1/> to catch all new orders across categories and regions.

- Regional processors can subscribe to order/valid/*/snacks/*/sea/* to handle validated orders for snack products in Southeast Asia.

- Analytics engines or downstream systems might subscribe to order/> to capture a full view of order activity across the business.

By applying topic taxonomy best practices, the company ensures efficient routing, localized processing, and global observability – all without tight coupling between systems.

Because every event from a publisher (microservice, application, or legacy-to-event adapter) carries its topic as metadata, consumers can use topics to subscribe to exactly the events they need.

| Event | Publisher | Subscriber |
|---|---|---|
| New Order | Lazada e-commerce store site | Order validation microservice |
| Validated Order | Order validation microservice | Order processor for snack products in Southeast Asia |
| Order Shipment | Any upstream publisher | Data lake or AI/ML ingestor |

Topic subscriptions would be:

- Consume and validate all version 1.1 orders, then publish the validated-order event: order/init/1.1/>

- Consume and process all valid orders for snack products in Southeast Asia: order/valid/*/snacks/*/sea/*

- Consume all orders regardless of stage, category, or location: order/>
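The routing behavior in the energy-bar example can be checked with a small matcher. This is a simplified sketch: it assumes `*` stands for exactly one topic level and a trailing `>` stands for one or more remaining levels, as the examples imply. Real brokers have additional wildcard rules, and the subscriber names here are hypothetical.

```python
from typing import Dict, List


def topic_matches(subscription: str, topic: str) -> bool:
    """Return True if the topic satisfies the subscription pattern."""
    sub_levels = subscription.split("/")
    topic_levels = topic.split("/")
    for i, sub in enumerate(sub_levels):
        if sub == ">":
            # Trailing wildcard: must be last and needs at least one remaining level.
            return i == len(sub_levels) - 1 and len(topic_levels) > i
        if i >= len(topic_levels):
            return False
        if sub != "*" and sub != topic_levels[i]:
            return False
    return len(sub_levels) == len(topic_levels)


SUBSCRIPTIONS: Dict[str, str] = {
    "order-validation": "order/init/1.1/>",
    "sea-snack-processor": "order/valid/*/snacks/*/sea/*",
    "analytics": "order/>",
}


def receivers(topic: str) -> List[str]:
    """Names of all subscribers whose pattern matches the topic, sorted for stability."""
    return sorted(name for name, sub in SUBSCRIPTIONS.items() if topic_matches(sub, topic))


new_order = receivers("order/init/1.1/snacks/energybars/sea/lazada")
validated = receivers("order/valid/1.1/snacks/energybars/sea/lazada")
shipped = receivers("order/shipped/1.1/snacks/energybars/sea/lazada")
```

Each event reaches only the consumers whose subscription matches, while the analytics subscriber sees all three stages through a single broad pattern.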

Step 4: Design the Flow for your Pilot Project

At its core, an event flow is simply a business process translated into a technical one. It’s the path an event takes – from the moment it’s generated, to when it travels through your event broker, and finally to when it’s consumed and acted upon by another system or service.

Starting small with a pilot project is the best way to learn by doing. It lets you experiment, refine your approach, and show tangible results before you expand to a wider implementation. As you identify the events and map their flows, your event catalog begins to take shape. Some event portals even include AI-assisted tools to help suggest related events or relationships. Whether you maintain this catalog in a spreadsheet or a full-fledged event portal, it becomes the foundation of reusable event assets that future projects can build upon.

When choosing your pilot flow, look for a use case that aligns with your current priorities – perhaps part of a modernization initiative, a process that’s causing performance pain, or an in-flight transformation that would benefit from greater agility. It could be driven by business innovation, such as improving customer responsiveness, or by technical goals like reducing point-to-point dependencies or optimizing API load. The ideal pilot delivers a quick win that builds confidence and becomes a model for future event-driven implementations.

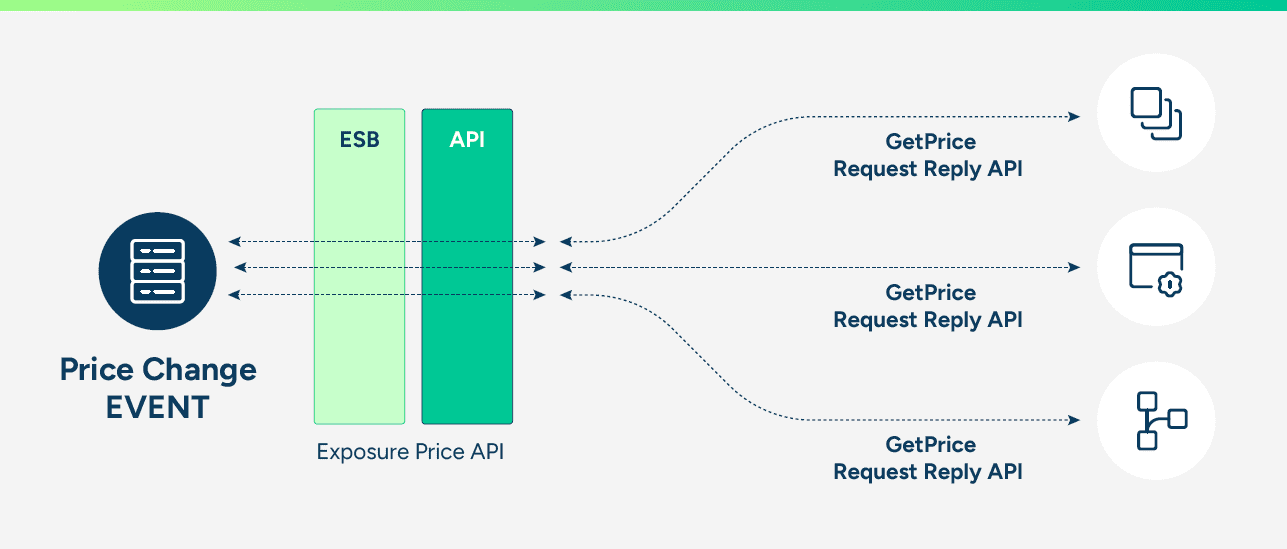

Consider this product price change flow as an example:
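In code, a flow like this might be sketched as follows. The services, topic names, and payload fields are assumptions invented for illustration, and delivery is synchronous and in-process purely to keep the trace easy to read.

```python
from collections import defaultdict
from typing import Callable, Dict, List

subscribers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)
trace: List[str] = []  # records the path each event takes, for inspection


def publish(topic: str, event: dict) -> None:
    trace.append(f"published {topic}")
    for handler in subscribers[topic]:
        handler(event)


def update_webshop(event: dict) -> None:
    trace.append(f"webshop price set to {event['newPrice']}")
    # A consumer can itself produce a follow-on event.
    publish("catalog/listing/updated", {"productId": event["productId"]})


def print_shelf_label(event: dict) -> None:
    trace.append(f"label queued for {event['productId']}")


def refresh_search_index(event: dict) -> None:
    trace.append(f"search index refreshed for {event['productId']}")


subscribers["product/price/changed"] += [update_webshop, print_shelf_label]
subscribers["catalog/listing/updated"].append(refresh_search_index)

# One business change at the source fans out into several independent reactions.
publish("product/price/changed", {"productId": "BAR-01", "newPrice": 2.49})
```

Writing the flow down this way, even informally, tends to surface the events worth adding to your catalog: here, a price change and a listing update.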

Step 5: Develop and Deploy Event-Driven Elements

Once you have identified your pilot flows and started an initial event catalog, the next step is event-driven design: decompose the business flow into event-driven microservices and agents, and identify the events exchanged along the way.

Decomposing an event flow reduces the total effort required to ingest event sources, as each microservice or agent will handle a single aspect of the total event flow. New business logic can be built using microservices, while existing applications – SAP, mainframe, custom apps – can be event-enabled with adapters.
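One common way to event-enable an application that cannot publish events itself is an adapter that compares successive snapshots of its data and emits the differences. The sketch below assumes that approach; the `material/...` topics and record layout are hypothetical.

```python
from typing import Dict, List, Tuple

Snapshot = Dict[str, dict]  # record id -> record fields


def diff_to_events(previous: Snapshot, current: Snapshot) -> List[Tuple[str, dict]]:
    """Turn the difference between two snapshots into (topic, payload) events."""
    events: List[Tuple[str, dict]] = []
    for record_id, record in current.items():
        if record_id not in previous:
            events.append(("material/created", {"materialId": record_id, **record}))
        elif record != previous[record_id]:
            events.append(("material/updated", {"materialId": record_id, **record}))
    for record_id in previous:
        if record_id not in current:
            events.append(("material/deleted", {"materialId": record_id}))
    return events


before = {"M-1": {"price": 10}, "M-2": {"price": 20}}
after = {"M-1": {"price": 12}, "M-3": {"price": 5}}
events = diff_to_events(before, after)
```

The legacy system stays untouched; only the adapter knows how to read it, and everything downstream sees ordinary events.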

Orchestration vs. Choreography

There are two ways to manage how microservices and agents interact within an event-driven system: orchestration and choreography.

With orchestration, services communicate in a call-and-response pattern. One component issues a command, waits for a reply, and then tells the next one what to do. It’s structured and predictable, but also tightly coupled – each step depends on the last, and if one link in the chain fails, the entire flow can stall.

Choreography, on the other hand, flips that model. Instead of following a central script, each service reacts to events as they happen. When one service publishes an event – say, a sales order is created or a delivery is confirmed – any service interested in that event can respond, independently and in real time. The result is a loosely coupled system where parts can evolve or fail independently without bringing the business process down.

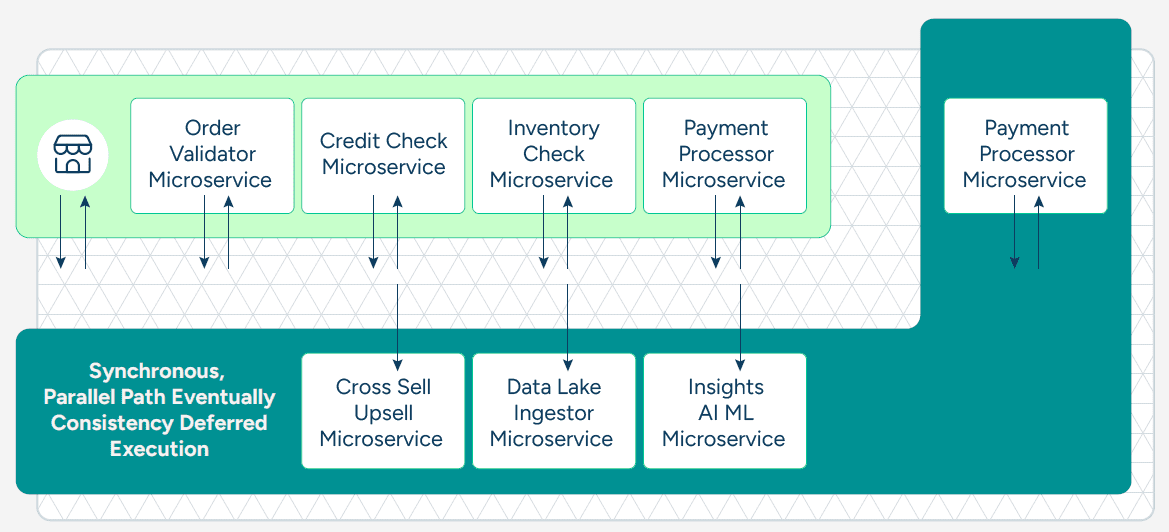
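The choreography pattern described above can be sketched in a few lines. This is a minimal illustration, not a broker client: `EventBus`, the topic string `sales/order/created`, and the two handler functions are hypothetical names, and delivery is a direct in-process call rather than the asynchronous delivery a real event broker provides.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], None]


class EventBus:
    """Toy in-memory stand-in for an event broker (exact-match topics only)."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # The publisher never names its consumers; it only names the topic.
        for handler in list(self._subscribers.get(topic, [])):
            handler(event)


bus = EventBus()
reactions: List[str] = []


def notify_customer(event: dict) -> None:
    reactions.append(f"notify customer about order {event['order_id']}")


def update_analytics(event: dict) -> None:
    reactions.append(f"record order {event['order_id']} in analytics")


# Each service registers its own interest; no central script sequences them.
bus.subscribe("sales/order/created", notify_customer)
bus.subscribe("sales/order/created", update_analytics)
bus.publish("sales/order/created", {"order_id": "4711"})
```

Adding a third consumer is one more `subscribe` call; the publisher and the existing consumers are untouched, which is the loose coupling the choreography model relies on.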

In an SAP environment, this distinction becomes especially valuable. For example, a core business process in SAP S/4HANA – like order fulfillment – may still require some synchronous orchestration steps (for instance, confirming credit or updating inventory in real time). But once the key transaction is complete, the remaining work can proceed asynchronously through events: updating analytics in SAP Data Warehouse Cloud, triggering customer notifications in SAP Marketing Cloud, or sending updates to third-party logistics providers.

When designing your flow, it helps to identify which steps truly need to be synchronous – those that must complete before the process can move forward – and which can be asynchronous, happening in parallel or after the fact. Tasks like logging, auditing, or enriching data for reporting can safely run later, using event messages to stay in sync.

You can model these interactions manually at first, but as your system grows, an event portal becomes invaluable. It provides a visual map of how events, services, and APIs connect, making it easier to choreograph complex processes and ensure that both synchronous and asynchronous flows remain clear, scalable, and governed.

One of the problems with a RESTful synchronous API-based system is that each step of the business flow – each microservice – is inline. When a system submits an order, should it wait for all the order processing steps to be completed, or should only a few mandatory steps be inline?

If you think about it, all the “insights” processes do not need to happen before the point of sale systems get an order confirmation – they are just going to slow down the overall response time. In reality, only the order ingestion and validation need to be inline – everything else can be done asynchronously.

The event mesh provides guaranteed delivery, so the non-inline microservices can get the data a little after the fact, but in a throttled, guaranteed manner. The event stream flows into the systems in a parallel manner, thereby further improving performance and latency.
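The split between inline and asynchronous steps can be illustrated with a small sketch. It assumes a standard-library queue as a stand-in for the event mesh; `submit_order`, `drain_async_consumers`, the event type `order.validated`, and the order fields are all hypothetical, and a real mesh would add persistence and delivery guarantees that this queue does not have.

```python
import queue
from typing import List

# Stand-in for the event mesh: events wait here until consumers pick them up.
event_queue: "queue.Queue[dict]" = queue.Queue()
processed_by_insights: List[str] = []


def validate(order: dict) -> bool:
    """Inline step: the caller must know the outcome before continuing."""
    return bool(order.get("sku")) and order.get("quantity", 0) > 0


def submit_order(order: dict) -> dict:
    """Only ingestion and validation run inline; everything else is deferred."""
    if not validate(order):
        return {"status": "rejected"}
    event_queue.put({"type": "order.validated", "order": order})
    return {"status": "accepted"}


def drain_async_consumers() -> None:
    """Stands in for downstream services consuming at their own pace."""
    while not event_queue.empty():
        event = event_queue.get()
        processed_by_insights.append(event["order"]["sku"])
        event_queue.task_done()


confirmation = submit_order({"sku": "RUM-RAISIN", "quantity": 2})
processed_before_drain = list(processed_by_insights)  # still empty here
drain_async_consumers()
```

The point of sale receives `confirmation` before any insights processing has run, so the response time depends only on the mandatory steps.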

Parasitic Listeners

One of the most powerful advantages of event-driven architecture is that once events start flowing, they can be consumed by new applications without touching existing integrations.

These consumers – often called parasitic listeners – quietly “tap into” the event stream for purposes such as analytics, auditing, compliance, or feeding data lakes. They don’t interfere with the core business logic or flow; they simply observe what’s already happening.

In practice, this means an analytics platform, monitoring tool, or AI model can subscribe to a wildcard-filtered stream of events, receiving only the data that’s relevant to its function. No changes are needed to your microservices, your ESB, or your ETL processes.
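As a rough illustration of a wildcard-filtered subscription, the sketch below matches hierarchical topics against a subscription pattern. It is loosely modelled on the single-level `*` and trailing multi-level `>` wildcards common in topic-based brokers, but it is deliberately simplified (for instance, `*` only matches a whole level here), and the topic names are invented for the example. Check your broker's documentation for its exact matching rules.

```python
from typing import List


def topic_matches(subscription: str, topic: str) -> bool:
    """Return True if `topic` matches `subscription`.

    Simplified rules (an assumption for this sketch):
      *  matches exactly one whole topic level
      >  as the final level, matches one or more remaining levels
    """
    sub_levels = subscription.split("/")
    topic_levels = topic.split("/")
    for i, sub in enumerate(sub_levels):
        if sub == ">" and i == len(sub_levels) - 1:
            # At least one topic level must remain for ">" to match.
            return len(topic_levels) > i
        if i >= len(topic_levels):
            return False
        if sub != "*" and sub != topic_levels[i]:
            return False
    return len(sub_levels) == len(topic_levels)


# Hypothetical event stream; a compliance listener taps only finance events.
stream: List[str] = [
    "sap/finance/invoice/posted/v1",
    "sap/logistics/delivery/confirmed/v1",
    "sap/finance/payment/cleared/v1",
]
compliance_view = [t for t in stream if topic_matches("sap/finance/>", t)]
```

The listener's filter lives entirely in its own subscription, so nothing about the publishers or the other consumers has to change when it is added or removed.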

For SAP customers, this opens up exciting possibilities. Imagine compliance systems automatically consuming finance-related events from SAP S/4HANA, or a data lake on SAP Datasphere continuously updated in real time with order or inventory changes. These listeners operate independently yet stay fully aligned with the core process, creating transparency and insight without introducing new dependencies or overhead.

This “listen-without-impact” capability is one of the reasons event-driven architecture scales so gracefully. Once the event mesh is in place, any authorized system – internal or external – can consume the data it needs, when it needs it, without slowing down the rest.

Event Sources and Sinks – Dealing with Legacy Applications

There are three kinds of event sources and sinks that you should be aware of. Some are already event-driven, and there’s little processing you’ll need to do to consume their events. Let’s call the second kind “API-ready.” API-ready means they have an API that can generate messages that can be ingested by your event broker, usually through another microservice or application.

Although there’s some development effort required, you can get these sources to work with your event broker fairly easily. Then there are legacy applications that aren’t primed in any way to send or receive events, making it difficult to incorporate them into event-driven applications and processes.

Here’s a breakdown of the three kinds of event sources and sinks:

| | Event-Driven | API-Ready | Legacy |
|---|---|---|---|
| API Status? | Natively event-driven | Have a REST/SOAP API, with schemas | No standards-based API, though there might be various ways to connect |
| Push/Pull Abilities? | Can push events, can consume events | Request/reply, no notion of topics or push | Data needs to be pulled, or polled, but may also be triggered |
| Adapters for Streaming? | None needed | Need microservices or a streaming pipeline to transform a request-reply source into a streaming destination, and publish to intelligent topics | Need an integration adapter (JDBC, MQ, JCA, ASAPIO, Striim, legacy ESBs) installed close to the source or the destination of the event to transform and pub/sub events |

Event-Driven Applications

Event-driven applications can publish and subscribe to events, and are topic and taxonomy aware. They are real-time, responsive, and leverage the event mesh for event routing, eventual consistency, and deferred execution.

API-Ready Applications

While API-ready applications may not be able to publish events to the relevant topic, they can publish events that can be consumed via an API. In this case, an adapter microservice can be used to subscribe to the “raw” API (an event mesh can convert REST APIs to topics), inspect the payload, and publish the event to appropriate topics derived from the contents of the payload. This approach works better than content-based routing, which requires payloads to be governed very strictly, down to their semantics, and that is not always practical.
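A minimal sketch of such an adapter follows. The topic layout (`retail/order/<action>/v1/<region>/<order_id>`), the payload fields, and the `publish` callback are assumptions made for illustration; a real adapter would use your broker's client library and your own topic taxonomy.

```python
import json
from typing import Callable, List, Tuple


def derive_topic(payload: dict) -> str:
    """Build a topic from payload contents (hypothetical taxonomy)."""
    return "/".join([
        "retail",
        "order",
        payload["action"],          # e.g. "created"
        "v1",                       # schema version, fixed in this sketch
        payload["region"].lower(),  # e.g. "sg"
        str(payload["order_id"]),
    ])


def adapt(raw_body: str, publish: Callable[[str, dict], None]) -> str:
    """Inspect a raw API message and republish it on a derived topic."""
    payload = json.loads(raw_body)
    topic = derive_topic(payload)
    publish(topic, payload)
    return topic


# Usage with a list standing in for the broker connection.
published: List[Tuple[str, dict]] = []
topic = adapt(
    '{"action": "created", "region": "SG", "order_id": 1001}',
    lambda t, p: published.append((t, p)),
)
```

Because routing information ends up in the topic, downstream consumers can filter by subscription alone and never need to parse payloads they do not care about.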

Legacy Applications

You’ll probably have quite a few legacy systems that are not even API-enabled. As most business processes consume or contribute data to these legacy systems, you’ll need to determine how to integrate them with your event mesh. Are you going to poll the system? Or invoke them via adapters when there is a request for data or updates? Or are you going to off-ramp events and cache them externally? In any case, you’ll need to figure out how to event-enable legacy systems that don’t even have an API to talk to.

The key to accommodating legacy systems is identifying them early and getting to work on them as quickly as possible.
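One of the options mentioned above, polling, can be sketched as a snapshot comparison: the adapter reads the legacy data periodically and turns differences into events. This is only one possible approach under simplifying assumptions (the whole data set fits in memory and rows have a stable key); the function name and event tuple shape are hypothetical.

```python
from typing import Dict, List, Tuple

Row = Dict[str, object]
ChangeEvent = Tuple[str, str, Row]  # (change type, record key, row data)


def diff_snapshots(previous: Dict[str, Row],
                   current: Dict[str, Row]) -> List[ChangeEvent]:
    """Compare two polls of a legacy table and emit change events."""
    events: List[ChangeEvent] = []
    for key, row in current.items():
        if key not in previous:
            events.append(("created", key, row))
        elif previous[key] != row:
            events.append(("updated", key, row))
    for key, row in previous.items():
        if key not in current:
            events.append(("deleted", key, row))
    return events


# Two consecutive polls of a hypothetical price table.
last_poll = {"A1": {"price": 10}, "B2": {"price": 5}}
this_poll = {"A1": {"price": 12}, "C3": {"price": 7}}
changes = diff_snapshots(last_poll, this_poll)
```

Each tuple in `changes` would then be published to the event mesh, so consumers see the legacy system as just another event source.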

For more details and examples, read How to Tap into the 3 Kinds of Event Sources and Sinks.

Go Cloud-Native As You Can

The accessibility and scalability of the cloud make it a natural fit for event-driven architecture. In many ways, an event mesh and the cloud are built on the same principles – elasticity, decentralization, and always-on connectivity. Deploying them together allows your systems to scale seamlessly, respond faster, and evolve continuously.

That said, most enterprises – especially those running SAP landscapes – live in a hybrid reality. You can’t expect all your events to originate or be processed in the cloud. Core systems like SAP S/4HANA, manufacturing platforms, or on-premises databases still generate critical events that drive business operations. Ignoring those would mean leaving valuable insights behind.

With an event mesh in place, though, it doesn’t matter where your data lives. Events move freely and securely between on-premises environments and multiple clouds, so your architecture can be hybrid by design.

For example, you might have your data lake and analytics in SAP Datasphere or Azure, AI and machine learning services in Google Cloud, and core business processes anchored in SAP S/4HANA on-premises or in private cloud. Meanwhile, logistics operations in one region and customer applications in another can exchange data instantly through the same event mesh.

As 5G connectivity and edge computing become ubiquitous, your architecture must handle two seemingly opposite patterns – multi-cloud and intelligent edge – with equal agility. AEM enables seamless multi-cloud and hybrid event distribution with built-in governance and security.

By using standardized protocols, a well-structured topic taxonomy, and a resilient event mesh, you gain the freedom to run business logic wherever it makes the most sense – without being tied to a specific platform or provider. That flexibility is what makes an event-driven SAP environment truly cloud-ready.

An order management process may see these microservices in an event flow following a point of sale:

- Order validator

- Credit check

- Inventory check

- Payment processor

- Order processor

An order for rum raisin ice cream is initiated when a point of sale system makes an API call to submit it:

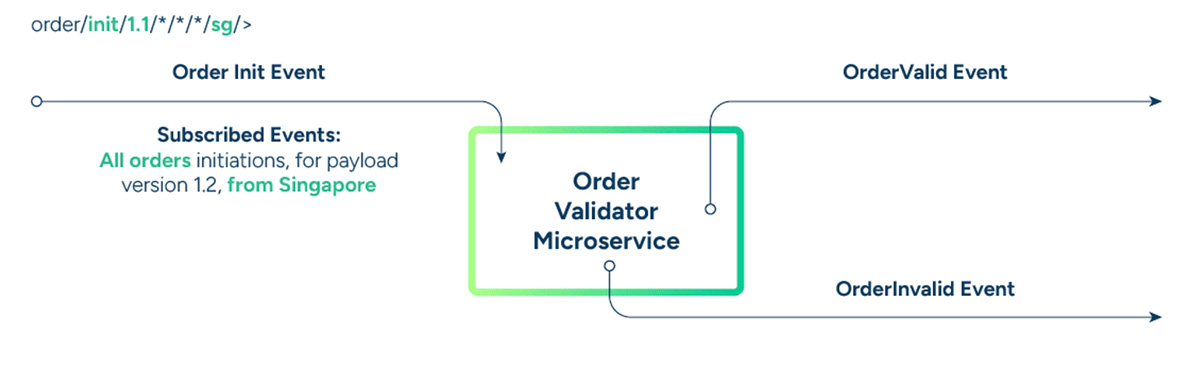

The Order Validator microservice consumes the new order event, and produces the valid order, or invalid order event. The valid order event is then consumed by the Credit Check microservice, which similarly produces the next events.

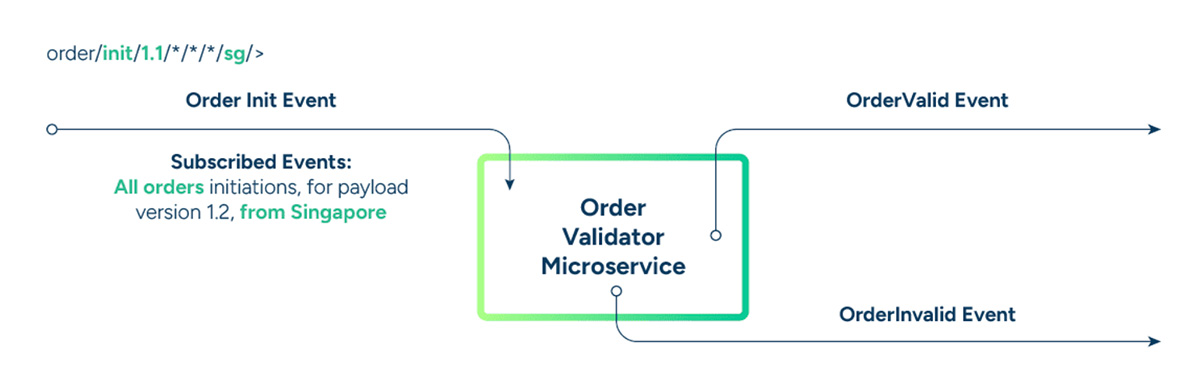

Subscribed Events: all order initiations, irrespective of version and product

Published Events: order validation status with other metadata
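The hand-off between the Order Validator and Credit Check can be sketched as functions that take one event and return the next. The topic names, the order fields, and the credit limit of 100 are invented for this example, and `run_flow` simply simulates what the broker's topic routing would do.

```python
from typing import Callable, Dict, List, Tuple

Event = Tuple[str, dict]  # (topic, payload)


def order_validator(event: Event) -> Event:
    """Consume an order initiation; publish a valid or invalid order event."""
    _, order = event
    is_valid = bool(order.get("product")) and order.get("quantity", 0) > 0
    return ("order/valid" if is_valid else "order/invalid", order)


def credit_check(event: Event) -> Event:
    """Consume a valid order; publish the credit decision."""
    _, order = event
    credit_limit = 100  # hypothetical limit for the sketch
    approved = order["quantity"] * order["unit_price"] <= credit_limit
    return ("order/credit-approved" if approved else "order/credit-declined", order)


# Hypothetical wiring: which service subscribes to which topic.
SUBSCRIPTIONS: Dict[str, Callable[[Event], Event]] = {
    "order/initiated": order_validator,
    "order/valid": credit_check,
}


def run_flow(first: Event) -> List[str]:
    """Follow an event through the subscriptions; return the topics seen."""
    trail = [first[0]]
    event = first
    while event[0] in SUBSCRIPTIONS:
        event = SUBSCRIPTIONS[event[0]](event)
        trail.append(event[0])
    return trail


trail = run_flow(("order/initiated",
                  {"product": "rum raisin ice cream", "quantity": 2, "unit_price": 4.5}))
```

Neither service calls the other; each only knows the topic it consumes and the topics it may publish.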

Issue: Order validation rules for the US differ from those for Singapore, and the payload has changed from JSON to protobufs.

Solution: A new (reused) microservice is created to handle the business logic of validating US orders with the new payload, and the topic subscriptions are rewired. The new microservice starts listening to the relevant topic, consuming order initiation events from the US at version 1.2. Nothing else changes!
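The rewiring can be pictured as adding one subscription next to the existing one. The sketch below assumes a topic layout of `order/initiated/<version>/<region>/<product>` and represents each subscription as a set of field filters; both the layout and the validator function names are hypothetical, and the validators return a label instead of doing real JSON or protobuf parsing.

```python
from typing import Callable, Dict, List, Tuple


def parse_topic(topic: str) -> Dict[str, str]:
    """Split a topic of the assumed form order/initiated/<version>/<region>/<product>."""
    _, _, version, region, product = topic.split("/")
    return {"version": version, "region": region, "product": product}


def validate_sg_json(payload: bytes) -> str:
    return "sg-json-validator"       # placeholder for the existing logic


def validate_us_protobuf(payload: bytes) -> str:
    return "us-protobuf-validator"   # placeholder for the new logic


# Each subscription lists only the fields it cares about; others are wildcards.
Subscription = Tuple[Dict[str, str], Callable[[bytes], str]]
SUBSCRIPTIONS: List[Subscription] = [
    ({"region": "sg"}, validate_sg_json),                         # existing
    ({"region": "us", "version": "v1.2"}, validate_us_protobuf),  # newly added
]


def dispatch(topic: str, payload: bytes) -> List[str]:
    """Deliver the event to every subscription whose filters all match."""
    fields = parse_topic(topic)
    return [handler(payload)
            for filters, handler in SUBSCRIPTIONS
            if all(fields[name] == value for name, value in filters.items())]


us_result = dispatch("order/initiated/v1.2/us/icecream", b"\x08\x01")
sg_result = dispatch("order/initiated/v1.1/sg/icecream", b"{}")
```

The Singapore validator and every downstream consumer keep their existing subscriptions; only the new entry in the list is different.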

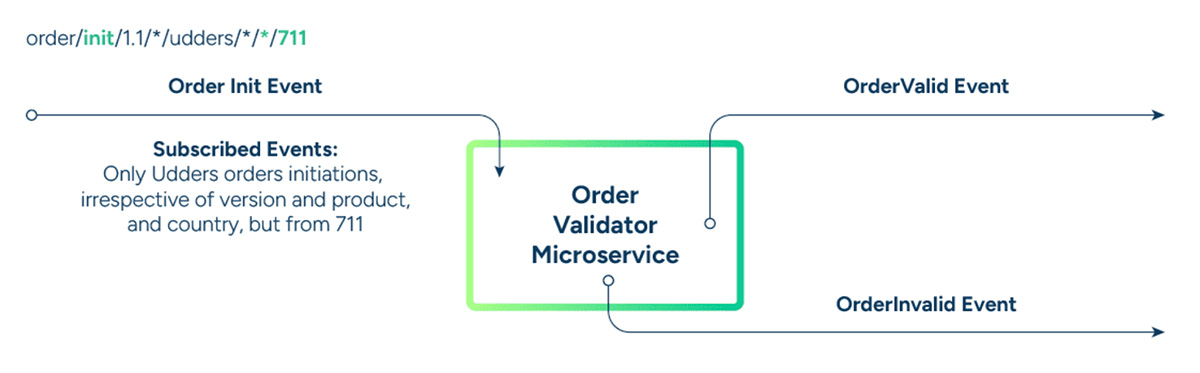

Issue: Want to monitor all orders coming from the 711 chain.

Solution: Implement a microservice with the relevant Insights business logic, look up the event in the event catalog, and start subscribing to events using wildcards.

Step 6: Scale and Repeat

As your SAP event ecosystem expands, AEM ensures consistent performance and visibility.

Once your first event-native application goes live, something important happens: your event catalog begins to take shape. Every event defined, published, and consumed adds to this growing library – creating a foundation that accelerates every subsequent project.

That catalog isn’t just documentation; it’s a launchpad for innovation. It shows stakeholders what’s possible and helps developers discover and reuse existing events instead of rebuilding them from scratch. The more complete and well-governed your catalog becomes, the faster your teams can deliver new, real-time capabilities.

In an SAP landscape, this evolution is particularly powerful. Events from SAP S/4HANA, SAP SuccessFactors, or SAP Ariba can quickly become reusable building blocks for new processes and analytics. For example, a “purchase order created” event might first be used to trigger supplier notifications – and later reused to update an AI-driven demand forecasting model or synchronize finance data in SAP Datasphere.

As you scale, the benefits compound. Reusability drives consistency, faster delivery, and alignment across lines of business. The event portal becomes a shared space where architects, developers, and business analysts can collaborate on designing and consuming events – turning what started as a pilot into an enterprise-wide transformation.

Culturally, this is when the organization begins to shift. Integration or middleware teams might manage the event mesh and event catalog, while individual lines of business start using and contributing to it. Some organizations establish federated models, with localized event brokers supporting specific regions or business units – all connected by a common mesh.

Over time, more systems will produce and consume events, often reusing what already exists. That’s how your event-driven journey snowballs – each new use case amplifying the value of the last.

And it all starts with that first success story. Once stakeholders see real-time responsiveness in action – faster decisions, smoother workflows, happier customers – the demand for event-driven innovation spreads naturally. You don’t have to convince anyone; the results speak for themselves.

Conclusion

Constantly changing, real-time business needs demand one thing: digital transformation. The world isn’t slowing down, and neither can your enterprise. The challenge is finding a way to modernize your architecture cost-effectively and efficiently – without disrupting the systems your business already depends on.

That transformation isn’t just technical – it’s cultural. Most IT professionals were trained to think procedurally. Whether you started with Fortran, C, Java, or Node, the default mindset has always been synchronous: function calls, RPCs, web services, APIs, and orchestrated flows through ESBs. But today’s real-time world runs asynchronously. Shifting from “call and wait” to “publish and respond” requires new thinking, new skills, and new tools.

In an event-driven architecture, microservices don’t need to call each other directly, an approach that tends to create a fragile distributed monolith. Instead, events flow freely across an event mesh, ready to be consumed and acted upon by applications, AI agents, and analytics systems – wherever they live. This is what enables true responsiveness, agility, and continuous innovation.

For SAP customers, that evolution is already underway. With SAP Integration Suite, advanced event mesh (AEM), enterprises can connect SAP and non-SAP systems in real time, extending the value of their digital core and unlocking new levels of automation, insight, and resilience.

By following the six steps outlined in this paper, you can take a structured, low-risk approach to becoming event-driven – one that builds momentum through quick wins and scales naturally as your organization evolves.

The tools are here. The path is proven. The next step is yours.

Sumeet Puri is Solace's chief technology solutions officer. His expertise includes architecting large-scale enterprise systems in various domains, including trading platforms, core banking, telecommunications, supply chain, and machine-to-machine track and trace, and more recently in spaces related to big data, mobility, IoT, and analytics.

Sumeet's experience encompasses evangelizing, strategizing, architecting, program managing, designing and developing complex IT systems from portals to middleware to real-time CEP, BPM and analytics, with paradigms such as low latency, event driven architecture, SOA, etc. using various technology stacks. The breadth and depth of Sumeet's experience makes him sought after as a speaker, and he authored the popular Architect's Guide to Event-Driven Architecture.

Prior to his tenure with Solace, Sumeet held systems engineering roles for TIBCO and BEA, and he holds a bachelor of engineering with honors, in the field of electronics and communications, from Punjab Engineering College.