Microservices are extremely popular these days, and for good reason. They provide a blueprint that makes it easier for developers to repeatedly create robust and scalable applications. While there is no official industry-adopted definition of microservices, there are some generally accepted attributes that make up a microservice:

- Small and single in purpose

- Communicate via technology agnostic protocols

- Support continuous integration

- Independently deployable

Many of these attributes are interrelated – since services are to be small and single in purpose, they must communicate with each other to provide real business value, and to be independently deployable they need to be small and single in purpose. While each of these is a vital attribute, the ability to communicate without being tightly coupled to one another is a critical aspect of microservices architecture.

Note: For a deeper read you can take offline, get our latest paper on event-driven microservices:

The Architect's Guide to Building a Responsive, Elastic and Resilient Environment

Sr. Architect Jonathan Schabowsky addresses the challenges of microservices architecture and shares his perspective on the modern messaging integration patterns architects can leverage.

Smart Endpoints and Dumb Pipes

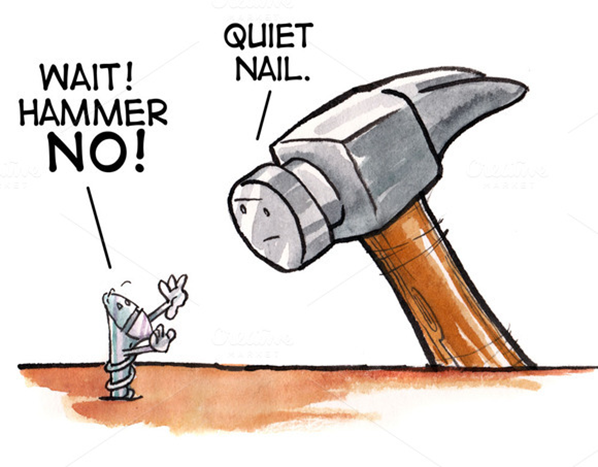

Well-known author and developer Martin Fowler advocates what he calls “smart endpoints and dumb pipes” for microservices communication. In the past, Enterprise Service Buses ruled the SOA universe and it was common to embed orchestration and transformation logic into the infrastructure. This meant that the pipe itself was “smart” and the industry treated the endpoints as “dumb”. There were multiple problems with this approach: the tooling was complex and expensive, and it was difficult to troubleshoot when problems occurred in production environments.

Today, with microservices, the IT community has embraced the reverse approach, where services own their domain-centric logic (“smart endpoints”) and use the “dumb pipes” only as a transport mechanism. Most communication between microservices takes place via either HTTP request-response with resource APIs or lightweight messaging. While these two mechanisms are by far the most commonly used, they’re quite different, so I’d like to explain which scenarios call for each when deciding between REST vs Messaging for Microservices. It’s important not just to have each of these tools in our toolbox, but to know which to use when.

Let’s Talk about REST vs Messaging for Microservices

Representational State Transfer (REST) was defined by Roy Fielding in his 2000 PhD dissertation entitled “Architectural Styles and the Design of Network-based Software Architectures”. Dr. Fielding was a part of the process of defining HTTP, and was called upon time and again to defend the design choices of the web. Through his work on HTTP, he distilled his model into a core set of principles, properties and constraints, now called REST.

Why is that important? It is my belief that we owe a great debt of gratitude to Dr. Fielding. RESTful interactions have become vital to enterprise computing, as they enable many of the APIs on the web today. With that said, let’s define what problems REST solves best:

- Synchronous Request/Reply – HTTP (the network protocol on which REST is transported) is itself a request/response protocol, so REST is a great fit for request/reply interactions.

- Publicly Facing APIs – Since HTTP is a de facto transport standard thanks to the work of the IETF, the transport layer of APIs created using REST is interoperable with virtually every programming language. Additionally, the message payload can be easily documented using tools such as Swagger (OpenAPI Specification). And due to the wide range of security threats present on the internet, the security ecosystem for REST is robust, from firewalls to OAuth (authorization).
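To make the request/reply fit concrete, here is a minimal sketch using only the Python standard library. The `OrderHandler` resource, the `/orders/<id>` path and the JSON payload are assumptions invented for illustration, not part of any real API. The point to notice is that `fetch_order` does not return until the reply has arrived.

```python
import json
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, HTTPServer


class OrderHandler(BaseHTTPRequestHandler):
    """Hypothetical 'orders' resource API, used only for this illustration."""

    def do_GET(self):
        body = json.dumps({"path": self.path, "status": "shipped"}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # suppress per-request logging to keep the example quiet


def fetch_order(host, port, order_id):
    """Synchronous request/reply: the caller waits until the response arrives."""
    conn = HTTPConnection(host, port, timeout=5)
    try:
        conn.request("GET", f"/orders/{order_id}")
        response = conn.getresponse()  # blocks here until the server replies
        return response.status, json.loads(response.read())
    finally:
        conn.close()


def demo():
    server = HTTPServer(("127.0.0.1", 0), OrderHandler)  # port 0 picks a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return fetch_order("127.0.0.1", server.server_port, 42)
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(demo())
```

Because the client only needs HTTP and JSON, the same resource could be called from practically any language, which is what makes this style a natural choice for public APIs.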

Deeper Considerations When Using REST

Due to the popularity of RESTful services today, I see many companies falling into the trap of using REST as an “all-in-one” tool. Certainly, some of this popularity is due to the power REST provides on its own merits. Developers are also used to designing applications with synchronous request/reply, since APIs and databases have trained them to invoke a method and expect an immediate response.

In fact, Martin Thomson once said, “Synchronous communication is the crystal meth of distributed software” because it feels good at the time but in the long run is bad for you. This overreliance on REST and synchronous patterns has negative consequences that apply primarily to communication between microservices within the enterprise, and that in some cases are at odds with the principles of proper microservice architecture:

- Tight Coupling – There will always be some coupling of services around the interface (specifically around the data), but when invoking a RESTful service, the developer is assuming that the message will only ever need to be delivered to one place. What happens when another service or component comes online in the future and needs the data? Sure, you can update the code to add the new endpoint, but that exposes the flaw: unnecessary coupling. Soon your simple microservice has become an orchestrator, which violates the “single in purpose” attribute of a microservice.

- Blocking – When invoking a REST service, your service is blocked waiting for a response. This hurts application performance because this thread could be processing other requests. Think of it this way: What if a bartender took a drink order, made the cocktail and waited patiently for the patron to finish the drink before moving on to the next customer? That customer would have a great experience, but all of the other customers would be thirsty and quite unhappy. The bar could add additional bartenders, but would need one for each customer. Obviously, it would be expensive for the bar, and impossible to scale up and down as patrons came and went. In many ways, these same challenges occur when threads are blocked in applications waiting for RESTful services to respond. Threads are expensive and difficult to manage, much like bartenders!

- Error Handling – HTTP was built for the web, and we have all seen our browsers get stuck trying to access a webpage. Usually we click the refresh button and the page displays. But what if it fails again? Try to refresh again? Does one start to implement a human form of exponential backoff by getting a cup of coffee and trying again in a few minutes? We do not know what to do, as every webpage is different and has unique behavior. The same type of issue occurs when directly invoking a RESTful service. Should this complex retry logic reside in a service’s code? If it does, the service is even more tightly coupled to other services – again violating the key principle of keeping microservices single in purpose and small in size.
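The three drawbacks above tend to show up together in the calling service's code. The following is a rough sketch, not a recommended design: `place_order`, `call_with_backoff`, `make_flaky` and `DownstreamUnavailable` are hypothetical names, and the downstream "services" are plain functions standing in for blocking REST calls.

```python
import time


class DownstreamUnavailable(Exception):
    """Stand-in for a timeout or 5xx response from a downstream REST call."""


def call_with_backoff(call, max_attempts=4, base_delay=0.01, sleep=time.sleep):
    """Retry a blocking call, doubling the wait after each failure."""
    for attempt in range(max_attempts):
        try:
            return call()
        except DownstreamUnavailable:
            if attempt == max_attempts - 1:
                raise  # give up after the final attempt
            sleep(base_delay * 2 ** attempt)  # exponential backoff


def place_order(order, downstream_calls, sleep=time.sleep):
    """The order service must know about, and wait on, every consumer of its data."""
    results = []
    for call in downstream_calls:  # a new consumer means editing this service
        results.append(call_with_backoff(lambda: call(order), sleep=sleep))
    return results


def make_flaky(failures):
    """Build a fake downstream service that fails a fixed number of times first."""
    state = {"remaining": failures}

    def service(order):
        if state["remaining"] > 0:
            state["remaining"] -= 1
            raise DownstreamUnavailable()
        return f"accepted {order}"

    return service


if __name__ == "__main__":
    print(place_order("order-1", [make_flaky(2), make_flaky(0)]))
```

Note how the list of downstream calls (coupling), the sequential waiting (blocking) and the retry loop (error handling) all live inside a service whose actual job is simply to place an order.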

Messaging for Event-Driven Microservices

The solution to many of the shortcomings associated with RESTful/synchronous interactions is to combine the principles of event-driven architecture with microservices. Event-driven microservices are inherently asynchronous and are notified when it is time to perform work. Asynchronous communication is, in fact, how many of our daily interactions take place. Take Facebook: It would be incredibly inefficient to navigate to each friend and check to see if they have a status update. Instead we are notified when a friend has updated their status so we can go see that cute new picture of their cat. Obviously, that makes us more productive as individuals (in our use of Facebook, anyway).
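The notification idea can be sketched with a toy in-memory publish/subscribe broker. This is an illustrative assumption rather than the API of any real messaging product: the `Broker` class and the `order.created` topic are made up, and delivery happens inline here, whereas a real broker would deliver asynchronously over the network.

```python
from collections import defaultdict


class Broker:
    """Toy in-memory stand-in for a message broker (hypothetical, for illustration)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        """Register a handler to be notified of events on a topic."""
        self._subscribers[topic].append(handler)

    def publish(self, topic, event):
        """Notify every subscriber; the publisher never learns who received it."""
        for handler in list(self._subscribers[topic]):
            handler(event)


def demo():
    broker = Broker()
    shipped, emailed = [], []
    broker.subscribe("order.created", shipped.append)
    # A second consumer is added later without touching the publisher's code.
    broker.subscribe("order.created", emailed.append)
    broker.publish("order.created", {"id": 42})
    return shipped, emailed


if __name__ == "__main__":
    print(demo())
```

Services are told when there is work to do instead of polling for it, and the publisher's code stays the same no matter how many consumers subscribe.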

The benefits of messaging for event-driven microservices are many and varied:

- Loose Coupling – Using messaging, specifically publish/subscribe functionality, services do not have knowledge of other services. They are notified of new events, process that information and produce/publish new information. This new information then can be consumed by any number of services, thanks to publish/subscribe. Loose coupling allows microservices to be ready for the never-ending changes that occur within enterprises.

- Non-Blocking – Microservices should perform as efficiently as possible, and it is a waste of resources to have many threads blocked and waiting for a response. With asynchronous messaging, applications can send a request and process another request instead of waiting for a response. This becomes clear when revisiting the bartender analogy. Bartenders are complex individuals who can serve multiple patrons and interleave the execution of multiple tasks. They go from patron to patron and process multiple orders without blocking or waiting on any single patron, so every patron has a drink and leaves a good tip!

- Simple to scale – As applications and enterprises grow, the ability to increase capacity (or dynamically scale to optimize costs) becomes one of the most important advantages of microservice architecture. Since each service is small and only performs one task, each service should be able to grow or shrink as needed. Event-driven architecture and messaging make it easy for microservices to scale since they’re decoupled and do not block. This also makes it easy to determine which service is the bottleneck and add instances of just that service, instead of blindly scaling up all services, which can be the case when microservices are chained together with synchronous communications. The ability to scale using event-driven architecture has been demonstrated by companies such as LinkedIn and Netflix, which suggests it can work for your enterprise as well.

- Greater Resiliency and Error Handling – In the past few months major airlines have experienced data center issues that resulted in a cascade of application synchronization problems. The impact of these problems was massive: flight cancellations, angry customers and the loss of millions of dollars, not counting damage to their reputations. Microservices failure scenarios become tricky when considering in-progress transactions. Messaging platforms that offer guaranteed delivery can act as the source of truth in the event of massive failures and enable rapid recovery without message loss. In the case of less massive failures (a single service failing), the use of messaging allows healthy services to continue processing since they are not blocked on the failed service. Once healed, the failed service will start processing the data that accumulated during the downtime, making the system eventually consistent. Additionally, code becomes much cleaner and more readable, as the cumbersome retry and error handling logic is gone. In event-driven microservices the messaging tier handles the retry of failed messages (unacknowledged messages), which frees the service to be small in size and single in purpose.
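The recovery behavior described in the last bullet can be illustrated with a toy queue that holds each message until it is acknowledged. This is a simplified sketch under stated assumptions: `DurableQueue` and `billing_service` are hypothetical, the queue lives in memory (a real guaranteed-delivery platform would persist messages), and a consumer returning without raising is treated as the acknowledgement.

```python
from collections import deque


class DurableQueue:
    """Toy queue that keeps a message until its consumer acknowledges it."""

    def __init__(self):
        self._pending = deque()

    def publish(self, message):
        """Accept a message regardless of whether any consumer is healthy."""
        self._pending.append(message)

    def deliver(self, consumer):
        """Offer each pending message once; requeue any that are not acknowledged."""
        acknowledged = 0
        for _ in range(len(self._pending)):
            message = self._pending.popleft()
            try:
                consumer(message)  # returning normally counts as an ack
                acknowledged += 1
            except Exception:
                self._pending.append(message)  # no ack: keep for redelivery
        return acknowledged

    def __len__(self):
        return len(self._pending)


def demo():
    queue = DurableQueue()
    processed = []
    health = {"up": False}

    def billing_service(message):
        if not health["up"]:
            raise RuntimeError("billing service is down")
        processed.append(message)

    for order_id in (1, 2, 3):
        queue.publish(order_id)  # the publisher is not blocked by the outage

    during_outage = queue.deliver(billing_service)   # nothing is acknowledged
    health["up"] = True
    after_recovery = queue.deliver(billing_service)  # the backlog drains
    return during_outage, after_recovery, processed, len(queue)


if __name__ == "__main__":
    print(demo())
```

The retry responsibility sits in the queue rather than in `billing_service`, so the service itself contains no backoff or redelivery logic.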

Summary

Event-driven microservices should be considered more often by developers and architects as they provide the foundation to build awesome systems and applications. To learn more, check out the “microservices” section of our Resource Hub for a variety of microservices-related content.

To learn more about how message exchange patterns can unlock the full benefits and value of event-driven microservices, take a look at this blog post where I walk through a real-world example. Additionally, in this post I compare microservices choreography vs orchestration and explain the benefits of choreography.


Jonathan Schabowsky