If you want to work with real-time streaming data, the two most popular feeds you will come across are Wikipedia updates and Twitter tweets. Capturing Wikipedia updates was quite trendy a few years ago; more recently, capturing tweets from Twitter and running sentiment analysis on them has become more popular.

In this post, I would like to show you how you can write a Twitter publisher that will use Twitter’s API (under the hood) to get tweets as they are published in real-time and publish them to Solace PubSub+ Event Broker using JMS.

You can find my code on GitHub.

Twitter/PubSub+ Connection Settings

My Twitter publisher is based on Twitter's Hosebird Client (hbc), a Java HTTP client for consuming Twitter's standard Streaming API. The client is easy to use, and if you follow the instructions in its README.md, you will be able to set it up.

To access Twitter's API, you will need a Twitter developer account and a registered app. Once you have been approved, you will have a consumerKey, consumerSecret, token, and tokenSecret, which you need to add to the resources/twitter.yaml file.

Similarly, you will need to add your Solace PubSub+ connection information (host, vpn, username, and password) to resources/broker.yaml. You can easily spin up a PubSub+ instance using a Docker container or through PubSub+ Event Broker: Cloud (sign up for a 30-day free trial).
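The post does not show how the two YAML files are read. As a minimal sketch, assuming both files are flat `key: value` lists using the key names above (the real files may be laid out differently), the snippet below parses them with plain JDK string handling and reports any required key that is missing or empty, so the publisher can fail fast before connecting to Twitter or the broker. `ConfigCheck`, `parseFlatYaml`, and `missingKeys` are hypothetical names; a real project would more likely use a YAML library such as SnakeYAML.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

class ConfigCheck {

    // Key names come from the post; the flat file layout is an assumption.
    static final List<String> TWITTER_KEYS =
            List.of("consumerKey", "consumerSecret", "token", "tokenSecret");
    static final List<String> BROKER_KEYS =
            List.of("host", "vpn", "username", "password");

    // Parses flat "key: value" lines only; nested YAML, lists and quoting are not handled.
    static Map<String, String> parseFlatYaml(String text) {
        Map<String, String> values = new LinkedHashMap<>();
        for (String line : text.split("\\R")) {
            String trimmed = line.trim();
            if (trimmed.isEmpty() || trimmed.startsWith("#")) {
                continue; // skip blank lines and comments
            }
            int colon = trimmed.indexOf(':'); // first colon splits key from value
            if (colon > 0) {
                values.put(trimmed.substring(0, colon).trim(),
                           trimmed.substring(colon + 1).trim());
            }
        }
        return values;
    }

    // Returns the required keys that are absent or have an empty value.
    static List<String> missingKeys(Map<String, String> values, List<String> required) {
        return required.stream()
                .filter(key -> values.getOrDefault(key, "").isEmpty())
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        String broker = "host: tcp://localhost:55555\nvpn: default\nusername: admin\npassword:\n";
        Map<String, String> values = parseFlatYaml(broker);
        System.out.println(missingKeys(values, BROKER_KEYS)); // [password]
    }
}
```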

Subscribing to Tweets

The Twitter API lets you subscribe to multiple keywords and receive, in real time, the tweets that contain them. Since we are going through a pandemic right now, I picked covid-19 and coronavirus as my keywords, but you can choose any keywords you like.

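```java
// Keywords to track; Lists.newArrayList is Guava's helper, and hosebirdEndpoint
// is the hbc filter endpoint the client connects to
List<String> terms = Lists.newArrayList("covid-19", "coronavirus");
hosebirdEndpoint.trackTerms(terms);
```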
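The terms end up in the streaming API's `track` parameter. Per Twitter's documentation for that parameter, it is a comma-separated list of phrases: commas act as a logical OR, and the space-separated terms inside one phrase act as a logical AND, ignoring case. The sketch below approximates that rule locally, for example to sanity-check which sample tweets a keyword list would match. It uses plain substring matching, so it will not reproduce Twitter's tokenization exactly; `TrackFilter` and `matches` are hypothetical names.

```java
import java.util.List;
import java.util.Locale;

class TrackFilter {

    // Rough local approximation of the track rule: the text matches if, for at
    // least one phrase, every space-separated term of that phrase occurs in the
    // text (case-insensitive). Substring matching, not Twitter's tokenizer.
    static boolean matches(String text, List<String> phrases) {
        String lower = text.toLowerCase(Locale.ROOT);
        for (String phrase : phrases) {
            if (phrase.trim().isEmpty()) {
                continue; // an empty phrase would otherwise match everything
            }
            boolean allTermsPresent = true;
            for (String term : phrase.trim().toLowerCase(Locale.ROOT).split("\\s+")) {
                if (!lower.contains(term)) {
                    allTermsPresent = false; // terms within a phrase are AND-ed
                    break;
                }
            }
            if (allTermsPresent) {
                return true; // phrases are OR-ed
            }
        }
        return false;
    }

    public static void main(String[] args) {
        List<String> terms = List.of("covid-19", "coronavirus");
        System.out.println(matches("New Coronavirus guidance published", terms)); // true
        System.out.println(matches("Weather update for today", terms));          // false
        // The same list in comma-separated form
        System.out.println(String.join(",", terms)); // covid-19,coronavirus
    }
}
```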

Publishing to PubSub+ Topic

As soon as we connect, we start receiving matching tweets as JSON strings. We want to publish these tweets to topics on Solace PubSub+ Event Broker. Since PubSub+ supports rich hierarchical topics and dynamic filtering with wildcards, we should put useful information in our topics that consumers can use to filter the data.

With this in mind, I will use “tweets/v1/covid/” + the tweet's language as my topic structure. Each tweet has a field called lang that holds its language. For example, if the tweet is in English, the value of lang is en, so English tweets are published to tweets/v1/covid/en. A tweet in French is published to tweets/v1/covid/fr.

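```java
if (msg != null) {
    // Parse the raw tweet JSON and read its language code
    JSONObject jsonMsg = (JSONObject) parser.parse(msg);
    String lang = (String) jsonMsg.get("lang");

    // Publish to a topic ending with the tweet's language, e.g. tweets/v1/covid/en
    publishMessageToSolace(session, msg, "tweets/v1/covid/" + lang);

    // Throttle publishing to roughly one message per second
    Thread.sleep(1000);
}
```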
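One detail worth guarding against: if a message has no lang field (the stream may also deliver non-tweet messages, such as limit or delete notices), `"tweets/v1/covid/" + lang` evaluates to `tweets/v1/covid/null`. A minimal sketch of a topic helper, assuming you would rather reuse Twitter's `und` ("undetermined") code than publish to a `null` level, is shown below; skipping such messages entirely would be an equally valid choice. `TopicBuilder` and `topicFor` are hypothetical names.

```java
class TopicBuilder {

    // Topic prefix used in the post.
    static final String PREFIX = "tweets/v1/covid/";

    // Twitter uses "und" when it cannot detect a language; reusing it for a
    // missing or blank lang field (an assumption) avoids a ".../null" topic.
    static String topicFor(String lang) {
        if (lang == null || lang.trim().isEmpty()) {
            return PREFIX + "und";
        }
        return PREFIX + lang.trim();
    }

    public static void main(String[] args) {
        System.out.println(topicFor("en"));  // tweets/v1/covid/en
        System.out.println(topicFor(null));  // tweets/v1/covid/und
    }
}
```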

Why include the language in the topic? Because I want my consumers to have the flexibility to filter tweets by language later. For example, I might want only English tweets. With Solace's support for rich hierarchical topics, we can filter data dynamically using wildcards: my consumer can simply subscribe to tweets/*/*/en to get only English tweets. If it wants tweets in all languages, it can subscribe to tweets/>.
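To make the two wildcards concrete, here is a simplified model of how a subscription is matched against a topic: `*` as a whole level matches exactly one level, and `>` as the last level matches one or more remaining levels. This is a sketch for illustration only, not Solace's implementation; it ignores prefix wildcards such as `e*` within a level, and `TopicMatcher` is a hypothetical name.

```java
class TopicMatcher {

    // Simplified subscription matching: "*" (whole level) matches one level,
    // ">" as the final level matches one or more trailing levels.
    static boolean matches(String subscription, String topic) {
        String[] sub = subscription.split("/", -1);
        String[] top = topic.split("/", -1);
        for (int i = 0; i < sub.length; i++) {
            if (sub[i].equals(">") && i == sub.length - 1) {
                return top.length > i; // at least one more level must be present
            }
            if (i >= top.length) {
                return false; // topic has fewer levels than the subscription
            }
            if (!sub[i].equals("*") && !sub[i].equals(top[i])) {
                return false; // literal level differs
            }
        }
        return sub.length == top.length; // no trailing ">", so level counts must match
    }

    public static void main(String[] args) {
        System.out.println(matches("tweets/*/*/en", "tweets/v1/covid/en")); // true
        System.out.println(matches("tweets/*/*/en", "tweets/v1/covid/fr")); // false
        System.out.println(matches("tweets/>", "tweets/v1/covid/fr"));      // true
    }
}
```

Because the language is the last level, adding a new language never requires changing existing subscriptions: `tweets/*/*/en` keeps receiving only English tweets, while `tweets/>` picks up everything.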

That's pretty much it. To get started with my code, add your Twitter and broker information to the respective config files, then decide which keywords you want tweets for and which topic you want to publish them to.

Running the Code

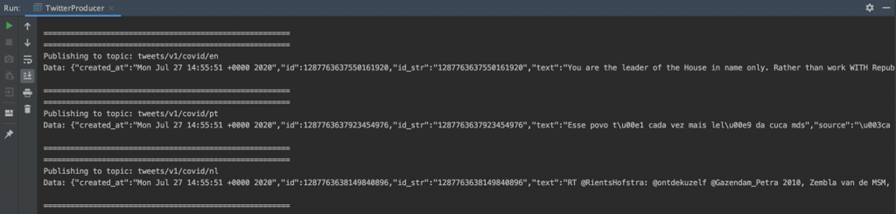

Once you start the publisher, you will notice the following output (via IntelliJ):

As you can see, we are getting tweets in different languages: en, pt, nl.

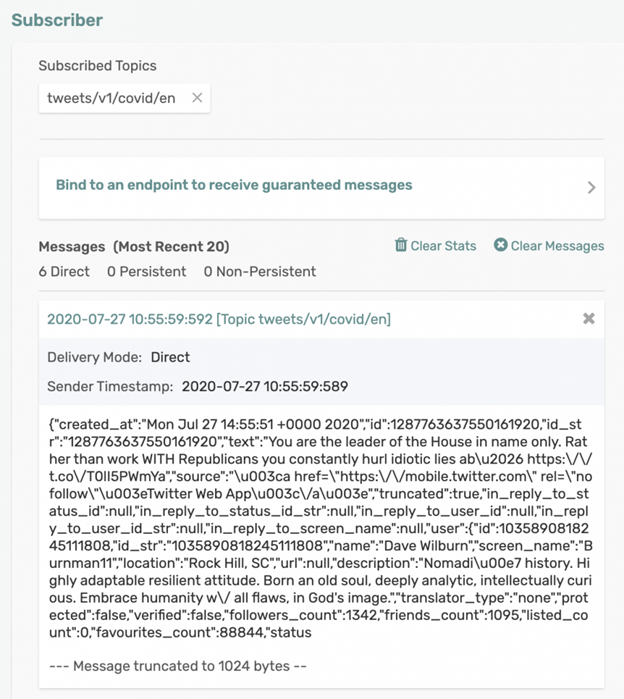

However, our subscriber can decide which topic to subscribe to and here is an example of a subscriber (via Solace’s Try Me! app on the management UI) subscribing to just English tweets via tweets/v1/covid/en topic.

Once you have these tweets being distributed over Solace PubSub+ Event Broker, your applications can use any of the different open APIs and protocols Solace supports to consume this data. For example, you can even create an event mesh and stream this data to an ML application in AWS or GCP to perform sentiment analysis!


Himanshu Gupta