Because the Apache Kafka broker is architected to deliver messages exactly as they are received from the publisher, adding message encryption changes broker behaviour and carries a significant performance cost, with a decrease of up to 90%. Please review the Datapath section and slide 10 of this slideshare to understand why this happens.

As Apache Kafka moves into messaging use cases beyond log aggregation and writes to big data infrastructure, it has been surrounded by connectors and bridges. This is necessary because the Apache Kafka architecture does not allow it to be an open-standards, multi-protocol messaging broker. Chaining additional processes between the client and the broker to handle client scaling and protocol translation obscures the end client and breaks per-client connection security features, because many clients share the same connections into the Apache Kafka brokers.

To enable ACLs with Apache Kafka, SASL must first be enabled to expose client credentials, and the ACLs must then be injected into Zookeeper to be distributed across the Apache Kafka cluster. This re-introduces the dependency on Zookeeper that Apache Kafka has been moving away from, and it also means that Zookeeper is now part of the messaging system’s security infrastructure. Zookeeper therefore needs to be run securely, which will likely require separate Zookeeper instances dedicated to Apache Kafka data. Zookeeper was originally chosen because it was already available in big data solutions, and the move away from it was motivated by its instability under performance stress. Running Zookeeper securely means it will no longer be “just there”, and it will also be less stable under performance stress. Solace message brokers store and replicate ACLs internally, without third-party tools. This limits the distribution of and access to ACLs to just the message brokers, making the overall solution more secure.

Apache Kafka has implemented a SASL interface, which only supports Kerberos, OAUTHBEARER and plaintext. If you require integration into enterprise authentication systems such as Active Directory, you will have to build and support this yourself, or move to the licensed Confluent Platform 5.0. Only the Confluent distribution of Apache Kafka can map Active Directory/LDAP/Radius groups to Kafka security policies, so with open-source Apache Kafka this mapping must be done manually per client. Solace message brokers allow mapping of LDAP or Radius groups to client profiles and ACL profiles.

The Solace message router was built to be multi-tenant, with role-based administration. To support this, all messaging clients and administrators are scoped to access only the resources they need to perform their tasks. This is achieved via role-based administration, integration into all major enterprise authentication architectures (Active Directory, LDAP, Radius, Kerberos, client certificates), client authentication and data movement segregated into application domains, publish and subscribe access control lists, and granular queue-level access controls.

Security feature exists and is properly integrated into the product

Security feature is possible, but up to the customer to integrate into the product

Security feature does not exist

| Authentication | Apache Kafka | Solace |
|---|---|---|
| Internal | | |
| LDAP | | |
| Radius | | |
| Kerberos | | |
| Client Certs | | |
| SASL Interface | | |
| OpenId Connect | | |

| Authorization | Apache Kafka | Solace |
|---|---|---|
| Subscribe ACL | | |
| MQTT Per Consumer ACL | | |
| Publish ACL | | |
| MQTT Per Producer ACL | | |
| Connect ACL | | |
| Managed Subscriptions | | |
| OAuth 2.0 | | |

| Audit | Apache Kafka | Solace |
|---|---|---|
| Configuration changelog | | |
| Meaningful Connect/Disconnect log | | |
| Authentication reject logs | | |
| Subscription log | | |

| Encryption | Apache Kafka | Solace |
|---|---|---|
| Server Certificates | | |
| Client Certificates | | |
| Symmetric Certs | | |
| Encrypted connect, clear text data | | |
| Encrypted local passwords | | |

| DoS | Apache Kafka | Solace |
|---|---|---|
| TCP SYN flood attack | | |
| UDP flood attack | | |
| IP Frag attack | | |
| SMURF Ping flood attack | | |

Security Features and Differences Explained

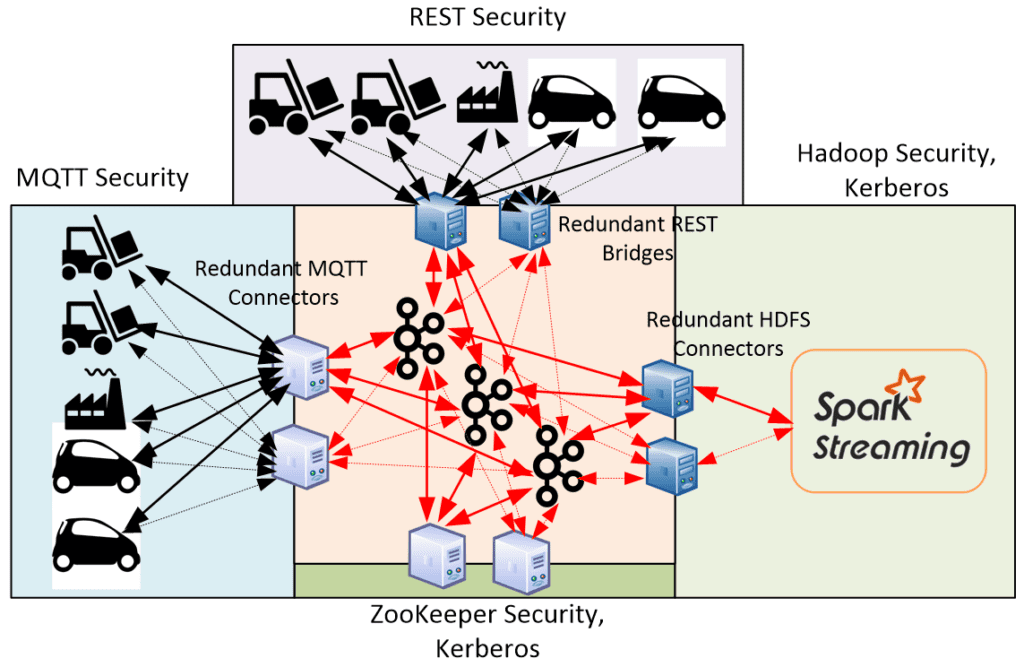

The Apache Kafka connection model, with its bridges and connectors, adds fragility and complexity to the overall connection model. For example, a REST bridge is stateful and multiplexes multiple REST clients. The connection to the Apache Kafka broker belongs to the bridge, so the security credentials and ACLs apply to the bridge, not to the end client. Looking at an end-to-end use case makes the complexity clear. Take a use case that Apache Kafka might be considered for: “push state events from IoT devices into analytics infrastructure”. With Apache Kafka this would look like this:

In this picture, the red lines are secure (SASL_SSL). The black lines use whatever authentication and authorization policy the connector or bridge provides, which could be less secure than the actual Apache Kafka connections. The end clients inherit the authorization of the connector or bridge, which is not set per end client.
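The loss of end-client identity can be illustrated with a small toy model. This is not Kafka code: the `Broker` and `Bridge` classes, the principal name, and the topic names are all hypothetical, chosen only to show that the broker's ACL check sees the bridge's principal regardless of which end client made the request.

```python
from dataclasses import dataclass, field


@dataclass
class Broker:
    """Toy broker that authorizes publishes by connection principal."""
    # Maps a principal to the set of topics it may publish to.
    publish_acl: dict[str, set[str]] = field(default_factory=dict)
    # Records (principal, topic) for every accepted publish.
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def publish(self, principal: str, topic: str) -> bool:
        allowed = topic in self.publish_acl.get(principal, set())
        if allowed:
            self.audit_log.append((principal, topic))
        return allowed


@dataclass
class Bridge:
    """Toy REST bridge: many end clients share one broker connection."""
    broker: Broker
    principal: str  # the only identity the broker ever sees

    def handle_request(self, end_client: str, topic: str) -> bool:
        # end_client is known here, but it is not forwarded to the broker.
        return self.broker.publish(self.principal, topic)


broker = Broker(publish_acl={"rest-bridge": {"sensors/temp", "sensors/door"}})
bridge = Bridge(broker, principal="rest-bridge")

# A thermostat can publish door events because it inherits the bridge's
# permissions, and the audit log only ever names the bridge.
bridge.handle_request("thermostat-17", "sensors/temp")
bridge.handle_request("thermostat-17", "sensors/door")
```

Any per-device restriction in this model would have to be enforced inside the bridge itself, which is the point made above about authorization not being set per end client.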

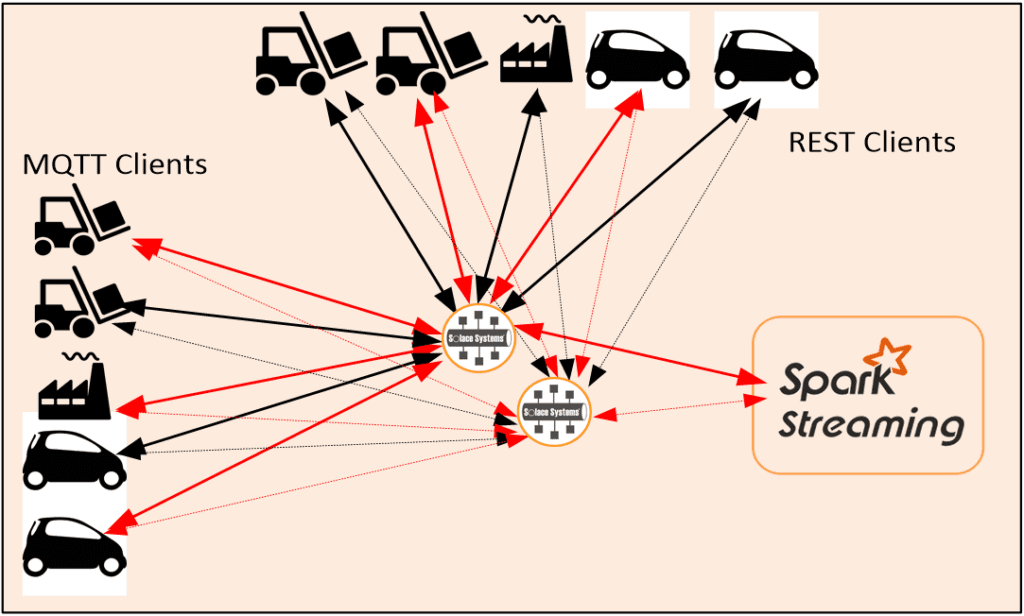

With Solace this would look like:

In this picture, authentication, authorization and transport security are set once, on a per-client basis, as an integral part of SolOS. Each client type is treated equally with respect to security features.

Internal authentication credentials

Apache Kafka stores these usernames and passwords in clear text and leaves it to the customer to secure them. Solace does not store passwords; instead it stores one-way, salted SHA-512 hashes of the passwords.
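As a rough illustration of what storing a salted one-way hash means, the sketch below uses only the Python standard library. It is a generic salted SHA-512 scheme, not Solace's actual storage format (which is not described here), and the function names and 16-byte salt length are assumptions.

```python
import hashlib
import hmac
import os
from typing import Optional


def hash_password(password: str, salt: Optional[bytes] = None) -> tuple[bytes, bytes]:
    """Return (salt, digest) for a password using salted SHA-512."""
    if salt is None:
        # A fresh random salt per password defeats precomputed tables.
        salt = os.urandom(16)
    digest = hashlib.sha512(salt + password.encode("utf-8")).digest()
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Re-hash the candidate with the stored salt and compare digests."""
    _, digest = hash_password(password, salt)
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(digest, expected)


# Only the salt and the digest are stored, never the password itself.
stored_salt, stored_digest = hash_password("s3cret")
```

A production system would normally also apply key stretching (many hash iterations) on top of salting; that is omitted here to keep the sketch short.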

LDAP/Radius authentication

As discussed in the introduction above, Solace integrates with and supports all common Active Directory/LDAP and Radius servers, mapping groups to Solace security policies. Apache Kafka can integrate with these services via its SASL interface, but this integration is not yet supported. It is up to the customer to build and support the integration, or to move to the licensed Confluent Platform 5.0.

Kerberos authentication

Used for both client and server authentication and key exchange and to implement single sign on network security. Both Apache Kafka and Solace have similar implementations of Kerberos authentication.

SASL (Simple Authentication and Security Layer)

Abstraction layer that Apache Kafka uses to expose access to authentication services. This provides a basic level of integration with these services.
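For a sense of what this looks like from the client side, the sketch below builds the settings a client might pass to connect over SASL_SSL with Kerberos. The property names follow the librdkafka-based clients as I understand them (the Java client uses truststore properties instead of `ssl.ca.location`); the function name, broker address, and file path are placeholders, and nothing here is taken from the original article.

```python
def sasl_ssl_client_config(bootstrap_servers: str, ca_path: str) -> dict[str, str]:
    """Build client settings for an encrypted, Kerberos-authenticated connection."""
    return {
        "bootstrap.servers": bootstrap_servers,
        # TLS for transport encryption, SASL for authentication.
        "security.protocol": "SASL_SSL",
        # GSSAPI is the SASL mechanism name for Kerberos.
        "sasl.mechanism": "GSSAPI",
        # Must match the service name in the brokers' Kerberos principal.
        "sasl.kerberos.service.name": "kafka",
        # CA bundle used to verify the brokers' server certificates.
        "ssl.ca.location": ca_path,
    }


# Placeholder host and path, for illustration only.
config = sasl_ssl_client_config("broker1.example.com:9093", "/etc/ssl/certs/ca.pem")
```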

OAuth2.0 and OpenId Connect

Emerging standards for authentication and authorization. Originally built for access to resources via web messaging, these standards are becoming important in IoT deployments. Apache Kafka supports OAUTHBEARER (a portion of OAuth 2.0). Solace will soon support OAuth 2.0 and OpenId Connect.

Publish/Subscribe/Connect ACLs

Apache Kafka relies on Zookeeper to maintain and distribute ACL policies whereas Solace does this internal to the message broker. Both Solace and Apache Kafka have similar allow/deny with exception type policies with wildcard matching.

Solace supports MQTT per producer/consumer ACLs which prevent client spoofing.
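The "allow/deny with exceptions" policy style mentioned above can be sketched in a few lines. This is a generic model, not either product's implementation: the `TopicAcl` class is hypothetical, and shell-style patterns from Python's `fnmatch` module stand in for the wildcard syntax, which differs in both Apache Kafka and Solace.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass
class TopicAcl:
    """A default action plus a list of wildcard exceptions to it."""
    default_allow: bool
    exceptions: list[str] = field(default_factory=list)

    def is_allowed(self, topic: str) -> bool:
        is_exception = any(fnmatchcase(topic, p) for p in self.exceptions)
        # An exception flips the default: deny-by-default allows it,
        # allow-by-default denies it.
        return self.default_allow != is_exception


# Deny everything except order topics.
publish_acl = TopicAcl(default_allow=False, exceptions=["orders/*"])

# Allow everything except admin topics.
subscribe_acl = TopicAcl(default_allow=True, exceptions=["admin/*"])
```

With these two policies, `publish_acl.is_allowed("orders/eu")` is true while `publish_acl.is_allowed("payments/eu")` is false, and `subscribe_acl` behaves the other way around for `admin/` topics.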

Managed Subscriptions

Solace has the ability to direct subscription requests to a subscription manager that can add subscriptions on behalf of the end client. This allows business logic such as access to fee liable data to be applied at subscription add time.
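The idea of applying business logic at subscription-add time can be sketched as follows. This is a toy model rather than the Solace API: the `SubscriptionManager` class, the entitlement table, and the client and topic names are all hypothetical.

```python
from typing import Callable


class SubscriptionManager:
    """Adds subscriptions on behalf of clients after an entitlement check."""

    def __init__(self, is_entitled: Callable[[str, str], bool]) -> None:
        self._is_entitled = is_entitled
        self.subscriptions: dict[str, set[str]] = {}

    def request(self, client: str, topic: str) -> bool:
        # Business logic runs here, before the subscription exists.
        if not self._is_entitled(client, topic):
            return False
        self.subscriptions.setdefault(client, set()).add(topic)
        return True


# Hypothetical entitlement table: which clients have paid for which feeds.
PAID_FEEDS = {"trader-1": {"prices/nyse"}}


def has_paid(client: str, topic: str) -> bool:
    return topic in PAID_FEEDS.get(client, set())


manager = SubscriptionManager(has_paid)
granted = manager.request("trader-1", "prices/nyse")
refused = manager.request("trader-2", "prices/nyse")
```

Here `granted` is true and `refused` is false, so only the paying client ends up with a subscription to the fee-liable feed.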

Server/Client Certificates

Both Solace and Apache Kafka use certificates, either asymmetrically or symmetrically, to validate server and client identity during TLS negotiation at the TCP session layer. Solace can additionally map the client certificate's CN (Common Name) into the messaging layer to apply authorization security policies.
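Mapping a certificate CN to a messaging-layer policy can be pictured roughly as below. The sketch assumes the certificate has already been validated during the TLS handshake and is available in the dictionary form returned by Python's `ssl.SSLSocket.getpeercert()`; the `ACL_PROFILES` table and profile names are hypothetical and do not reflect how Solace implements the mapping.

```python
from typing import Optional


def common_name(peer_cert: dict) -> Optional[str]:
    """Extract the subject CN from a getpeercert()-style dictionary."""
    # "subject" is a tuple of RDNs, each a tuple of (key, value) pairs.
    for rdn in peer_cert.get("subject", ()):
        for key, value in rdn:
            if key == "commonName":
                return value
    return None


# Hypothetical mapping from client identity to an authorization profile.
ACL_PROFILES = {"sensor-gateway-01": "iot-publish-only"}


def profile_for(peer_cert: dict) -> Optional[str]:
    """Use the certificate CN as the client name and look up its profile."""
    cn = common_name(peer_cert)
    return ACL_PROFILES.get(cn) if cn is not None else None


# Example certificate in the shape getpeercert() returns.
example_cert = {
    "subject": (
        (("countryName", "CA"),),
        (("organizationName", "Example Corp"),),
        (("commonName", "sensor-gateway-01"),),
    ),
}
```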

Encrypted Connection then cleartext data

In many cases only the authentication message exchange needs to be encrypted, because the data exchange itself does not contain sensitive data. Solace provides the ability to authenticate over a secure TLS session and then downgrade the session to clear text for the actual data exchange. For more information on TLS authentication with cleartext data, please see TLS/SSL Service Connections.

DoS attacks

Because the Solace messaging appliance implements a custom TCP/IP stack in hardware, the entire stack can be, and is, hardened against DoS attacks. The software message broker is distributed inside a VM that is tuned to mitigate DoS attacks. The Apache Kafka broker leaves it to the customer to consider and implement DoS prevention and mitigation strategies.

Conclusion

Solace enables messaging architectures that provide consistent multi-protocol client authentication and authorization across the enterprise, with deep integration into enterprise authentication services and a minimal set of components.

Apache Kafka implements an industry standard SASL interface for simple authentication integration and an ability to implement a distributed set of authorization policies.