After almost a year working in the world of event-driven architecture and development – and given that I come from a REST API background, which I’m sure a lot of you can identify with – I thought I would share my learning experience. So all aboard! You’re going to embark on my journey to learning event-driven architecture from a software developer’s perspective. This journey will take you through:

- The origin and evolution of web services

- Popularity of REST APIs and the impact on education

- Mapping REST concepts/terms to their EDA equivalents

- Building web applications: REST vs. event-driven

- Is EDA a silver bullet?

So, you can either choose your own adventure and read the section of your choice or jump aboard and take the event-driven development ride from the beginning (recommended!).

1. The origin and evolution of web services

Before taking your seat on this event-driven development train, let me give you a quick history lesson on why REST APIs are so popular and how the industry adopted this architectural style for most web applications.

In the late 90s and early 2000s, HTTP started to gain traction in the internet stack by giving people the ability to interact with web services over a network. Web applications were a gold mine in the world of the internet, allowing individuals and businesses to provide services and interact with end users.

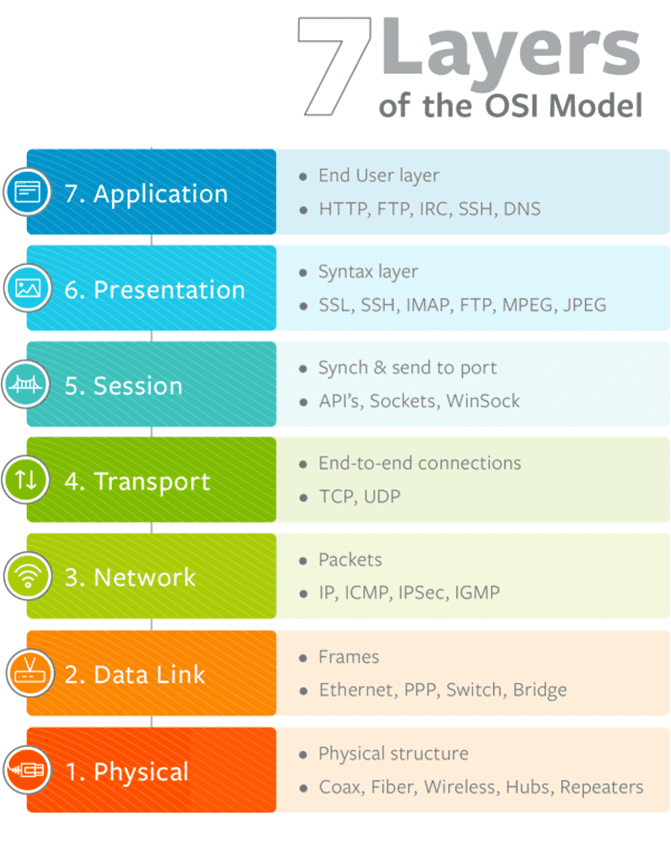

During my undergraduate degree in Communications Engineering, I learned about the OSI model, which describes the functions of a networking system through a conceptual framework and a list of abstractions. Web applications rely on the application layer (the only layer the end user interacts with) to transmit information across the layers underneath. These web applications and services interact with the application layer through different protocols; HTTP was (and still is) the most popular choice for its simple implementation (relatively speaking!).

Protocols for communicating with web services and objects over HTTP started to emerge, the first of which was Simple Object Access Protocol (SOAP). Without delving into the SOAP implementation details, its complexity motivated the rise of REST as a simpler way to build and communicate with HTTP web services. There had to be a way for systems to communicate with each other over a protocol, and the system architecture behind it is conceptually simple: client-server. A client asks for resources, waits for a response from a server, and performs an action based on this response. Commands expressed as CRUD operations provided a skeleton framework for request-reply and point-to-point communication patterns between services.

With REST APIs gaining popularity and industry adoption, more tooling around REST started to evolve. Tooling such as OpenAPI, Swagger, and API portals facilitated the adoption of REST APIs for building web applications. Automation and code generation tools made learning, understanding, and implementing REST APIs simple, giving them an implied reputation as the de facto standard for system communication and for building web applications.

Interesting Fact: In 2000, eBay was the first enterprise to adopt a REST architecture. Flickr followed in 2004, Amazon in 2006, and then Facebook and Twitter eventually followed suit. At the time these companies adopted REST, there was no concept of event-driven APIs for interacting with web services from a user-facing perspective.

2. Popularity of REST APIs and the impact on education

You might be wondering why REST APIs are so popular. Well, when it comes to learning foundational concepts in the software industry, whether through theoretical education (schooling, online courses, college, etc.) or real-life experience through on-the-job training, the learning process is based on two main factors:

- Theoretical background and fundamentals

- Industry relevance

The theoretical background piece is unavoidable and is not influenced by what’s hot and trending. Industry relevance, however, affects the educational content. It only makes sense that course content must be able to draw a direct connection to what’s being implemented in real-life use cases. And let’s face it, most people go to school not just to learn but also to get a job and get paid! REST had (and still has) high industry relevance, so naturally my education in developing and architecting web applications did not cover SOAP services or even event-driven architecture, since they had little industry relevance at the time.

Does this mean that event-driven development was completely new to me when I was introduced to it? On the contrary! Next, I’ll explain how the concept of event-driven architecture was ingrained in different areas of learning and how this enabled me to map foundational concepts to EDA fundamentals.

During my university undergraduate degree, the concepts of event-driven systems and real-time applications were covered as different subjects. The missing element in my event-driven development journey was the bridge between those theoretical concepts and their actual implementation. I will briefly highlight four areas that covered messaging and event-driven systems – Frontend and Game Development, Programming Design Patterns, Networking Fundamentals, and Operating Systems Fundamentals – followed by a table that maps the theoretical concepts to event-driven architecture lingo.

Frontend and Game Development

Interacting with UI elements in front-end development is event-driven in nature. A user executes an “event” that immediately “drives” an action in return. For example, a button does not poll its state to check whether it is pressed; it simply registers an event listener with a callback function that gets triggered when the event is fired. A simple implementation takes the following format:

element.addEventListener(eventType, callback);
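As a minimal sketch of that format, the snippet below uses `EventTarget`, the interface that DOM elements get `addEventListener` from, as a stand-in for a real button (it is also available as a global in recent Node.js versions). The names `button`, `clicks`, and `onClick` are made up for illustration.

```javascript
// EventTarget stands in for a DOM element such as a button.
// `button`, `clicks`, and `onClick` are illustrative names, not part of any API.
const button = new EventTarget();
const clicks = [];

// The callback is registered once; nothing polls the button's state.
function onClick(event) {
  clicks.push(event.type);
}
button.addEventListener("click", onClick);

// Firing the event invokes every registered listener synchronously.
button.dispatchEvent(new Event("click"));
button.dispatchEvent(new Event("click"));

// After removal, further events no longer reach the callback.
button.removeEventListener("click", onClick);
button.dispatchEvent(new Event("click"));

console.log(clicks.length); // 2
```

The callback only runs when something happens, which is the same push-based idea that shows up again with publishers and subscribers.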

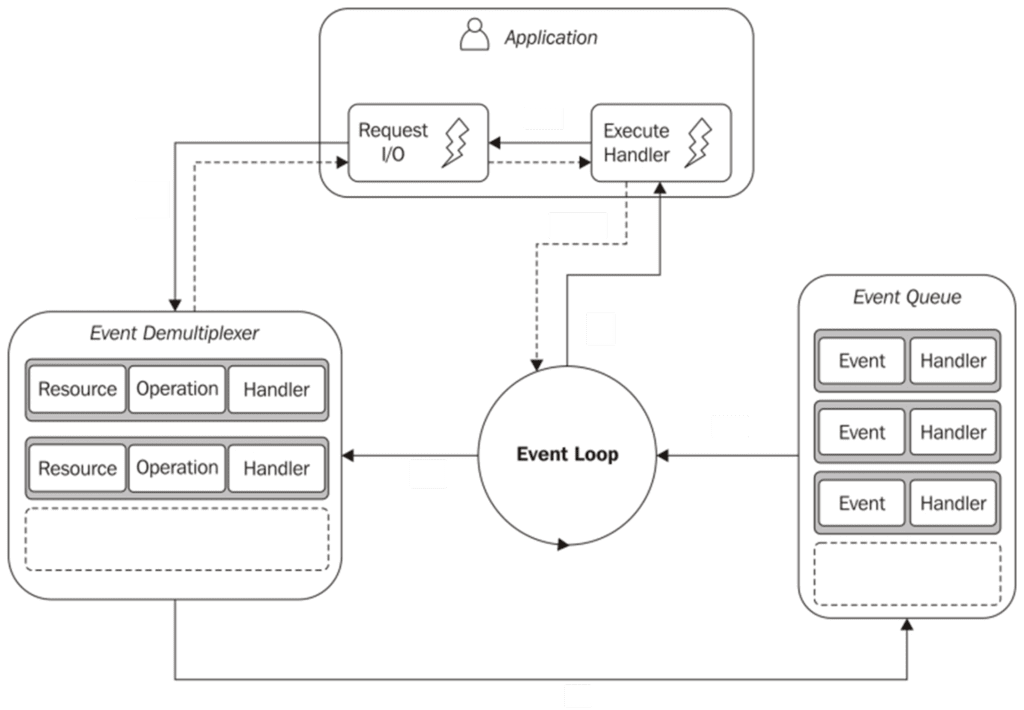

Let’s look under the hood and digest this further. Since JavaScript is single-threaded and can only do one thing at a time, the browser has an event loop that keeps track of all the events in an event queue, which can be accessed through interfaces built on top of the core JavaScript language. Whenever an event occurs in the web browser, the event loop dispatches this event to any registered listener. Sweet and simple. Note that the event loop implements the reactor design pattern discussed in the following section.

In game development, the concepts of EventHandlers and EventDispatchers are very prevalent for asynchronous interactions with a game. EventDispatchers are the way for objects to communicate when the sender does not care which target object receives the event.

Programming Design Patterns

Software design patterns present reusable solutions to common problems. For real-time applications, I will give a high-level overview of two common design patterns that present an event-driven approach versus a thread-based approach:

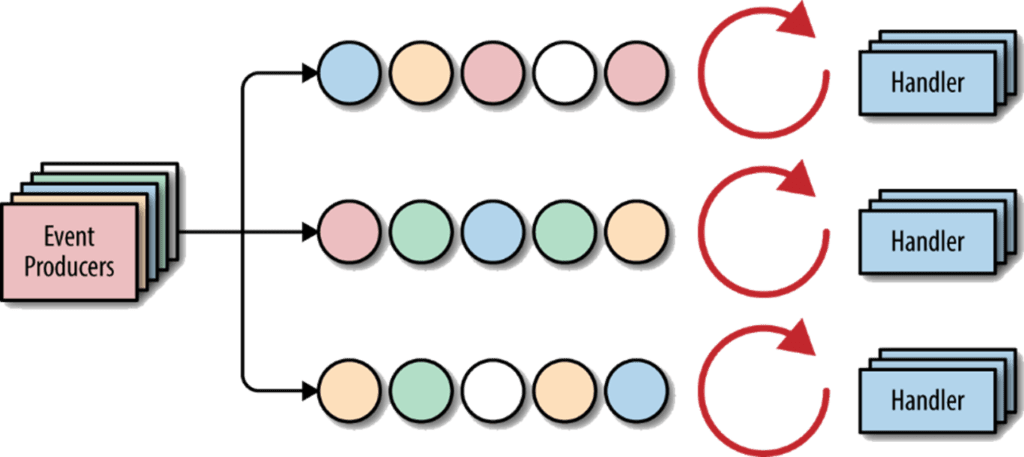

Reactor Pattern

Image source: https://developers.redhat.com/blog/2019/05/13/building-and-understanding-reactive-microservices-using-eclipse-vert-x-and-distributed-tracing/pasted-image-0-1/

This pattern relies on the concept of dispatching events. It includes a “Reactor” that runs in a separate thread, responds to any I/O events, and dispatches the work to “Handlers”. The benefit of the reactor pattern is that it avoids dedicating a thread to each message, connection, or request. As seen in the diagram above, events are demultiplexed and dispatched to the responsible handler.
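To make the demultiplex-and-dispatch idea concrete, here is a rough single-threaded sketch. The `Reactor` class, its method names, and the event shapes are assumptions for illustration only; a real reactor would receive its events from the operating system (sockets, timers, files) rather than from a `submit` call.

```javascript
// Sketch of the reactor idea: one loop, many handlers, no thread per request.
// Reactor, registerHandler, submit, and run are hypothetical names.
class Reactor {
  constructor() {
    this.handlers = new Map(); // event type -> handler function
    this.pending = [];         // events waiting to be dispatched
  }

  registerHandler(type, handler) {
    this.handlers.set(type, handler);
  }

  // Stand-in for I/O readiness notifications arriving from the outside.
  submit(event) {
    this.pending.push(event);
  }

  // Demultiplex: take each pending event and route it to its handler.
  run() {
    while (this.pending.length > 0) {
      const event = this.pending.shift();
      const handler = this.handlers.get(event.type);
      if (handler) handler(event.payload); // events with no handler are dropped
    }
  }
}

const handled = [];
const reactor = new Reactor();
reactor.registerHandler("connect", (p) => handled.push(`accepted ${p}`));
reactor.registerHandler("read", (p) => handled.push(`read ${p}`));

reactor.submit({ type: "connect", payload: "client-1" });
reactor.submit({ type: "read", payload: "hello" });
reactor.submit({ type: "unknown", payload: "ignored" });
reactor.run();

console.log(handled); // [ 'accepted client-1', 'read hello' ]
```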

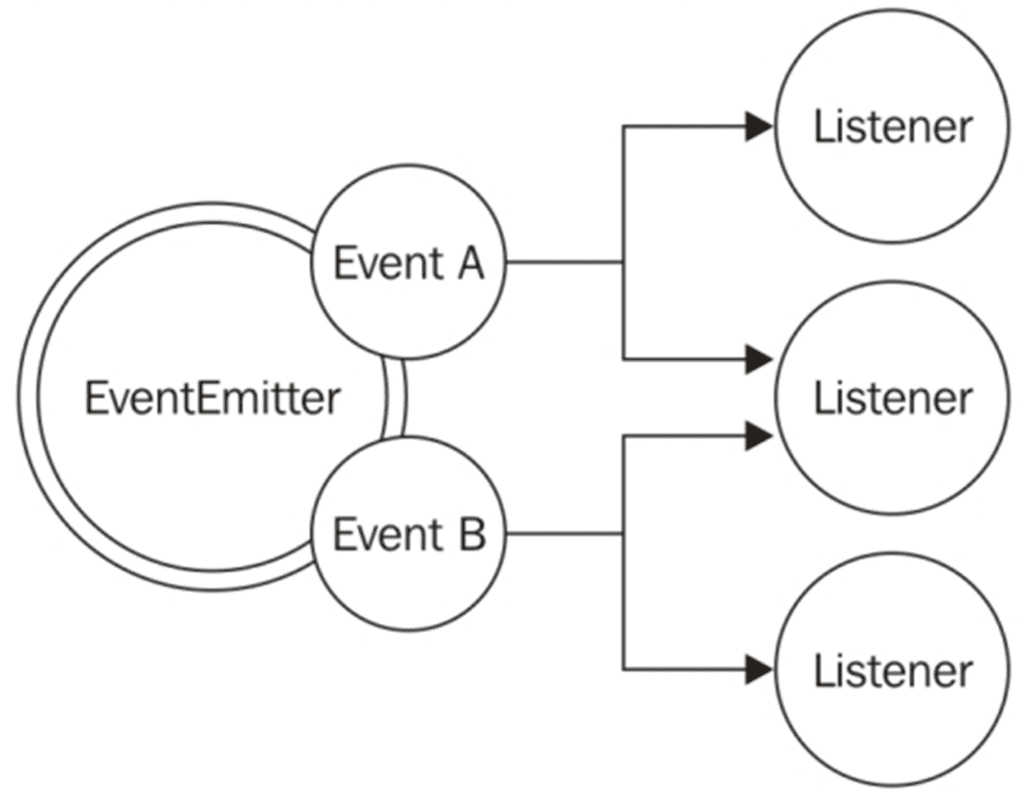

Observer Pattern

Image source: https://subscription.packtpub.com/book/web_development/9781783287314/1/ch01lvl1sec12/the-observer-pattern

The observer pattern differs from the reactor pattern in that each object maintains a list of its dependents. A change in an object’s state simply triggers a notification (i.e., an event) to all the dependents, signaling the change. The observer pattern implements a one-to-many relationship where multiple dependents could be “observing” the change in an object.
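A small sketch of that one-to-many relationship follows. The `Subject` class and the `display` and `logger` observers are hypothetical names chosen for the example, not taken from any particular library.

```javascript
// Observer pattern sketch: the subject keeps its own list of dependents
// and notifies each of them when its state changes.
class Subject {
  constructor(state) {
    this.state = state;
    this.observers = new Set();
  }

  attach(observer) {
    this.observers.add(observer);
  }

  detach(observer) {
    this.observers.delete(observer);
  }

  setState(next) {
    if (next === this.state) return; // no change, so no notification
    this.state = next;
    for (const observer of this.observers) observer(next);
  }
}

const seenByDisplay = [];
const seenByLogger = [];
const display = (value) => seenByDisplay.push(value);
const logger = (value) => seenByLogger.push(value);

const temperature = new Subject(20);
temperature.attach(display);
temperature.attach(logger);

temperature.setState(21);   // both observers are notified
temperature.detach(logger);
temperature.setState(22);   // only the display is notified
temperature.setState(22);   // unchanged state, nobody is notified

console.log(seenByDisplay); // [ 21, 22 ]
console.log(seenByLogger);  // [ 21 ]
```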

Networking Fundamentals

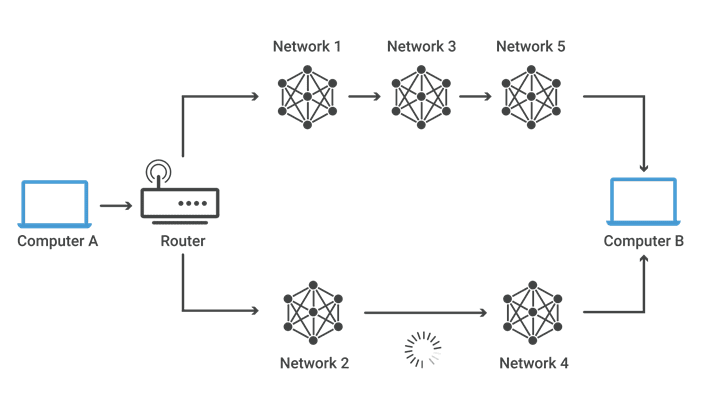

Recalling the OSI model covered earlier, the network layer was a HUGE help to me when it came to understanding event-driven architecture and development. This layer is responsible for receiving packets of data in the internet stack and delivering them to the intended destination through IP routing. Every packet traveling through the internet carries the logical address of its destination (an IP address), which is handled by a router (intermediary hardware) that literally routes the packet toward other destinations in the network. These packets could either be leaving the network boundary (egress traffic) or coming in from outside the network boundary (ingress traffic).

The keywords to keep in mind here are:

- Router: a device that contains IP lookup tables used to decide where each packet should be forwarded.

- IP: the address where the packet is going to or came from.

- Host: network device that sends/receives packets.

- Packet format: contains metadata about the packet including destination and data content.

Operating Systems Fundamentals

Similar to the reactor pattern discussed earlier, scheduling and threading in an operating system embody the concepts of dispatching and demultiplexing. Events (I/O, interrupts, thread returns, etc.) are either dispatched to the target endpoint or added to a queue. There are six popular scheduling algorithms, but in a very simplistic overview, scheduling enables asynchronous activities in operating systems and minimizes resource starvation.
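As one hedged illustration of how a scheduler uses a queue to avoid starvation, here is a toy round-robin scheduler. Round-robin is just one commonly taught algorithm picked for the example; the `roundRobin` function and the task shape (`name`, `remaining`) are assumptions.

```javascript
// Toy round-robin scheduler: every task gets at most `quantum` units of work
// per turn and then goes to the back of the queue, so no task starves.
function roundRobin(tasks, quantum) {
  if (quantum <= 0) throw new RangeError("quantum must be positive");
  const queue = tasks.map((task) => ({ ...task })); // copy, leave input untouched
  const order = [];                                  // which task ran on each turn

  while (queue.length > 0) {
    const task = queue.shift();
    const slice = Math.min(quantum, task.remaining);
    task.remaining -= slice;
    order.push(task.name);
    if (task.remaining > 0) queue.push(task); // not finished: wait for the next turn
  }
  return order;
}

const schedule = roundRobin(
  [
    { name: "A", remaining: 3 },
    { name: "B", remaining: 1 },
    { name: "C", remaining: 2 },
  ],
  1
);

console.log(schedule.join(" ")); // A B C A C A
```

Even though task A needs the most work, B and C still get their turns early, which is the starvation-avoidance property in miniature.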

3. Mapping foundational concepts to their EDA equivalents

Matching the foundational theoretical concepts discussed in the section above to the event-driven architecture equivalent aided me in my event-driven development journey. I summarized them in this table:

| Category | Foundational Concept | EDA Equivalent |
|---|---|---|
| Front-end & Game Development | Event Dispatcher | Publisher |
| | Event Handler | Subscriber |
| | Event Loop | Event Broker |
| Programming Paradigms | Reactor Pattern | Event Broker |
| | Observer Pattern | Publish/Subscribe |
| Networking | IP Routing | Topic Routing |
| | Egress and Ingress Routing | Event Mesh |
| | IP Address | Topic |
| | Packet Anatomy | Message Anatomy |
| | Subnet masks | Wildcard filters |
| Operating Systems | Scheduler | Event Broker |
| | Process Scheduling Queues | Broker Queues |
| | Threads | Consumers |
| | I/O | Publish/Subscribe |

In Front-End and Game Development:

- Event Dispatchers and Event Handlers relate to publishers and subscribers respectively, since one publishes events and the other listens for them.

- An event loop relates to an event broker because it dispatches events to registered listeners.

In the Programming Paradigms category:

- The Reactor pattern relates to an event broker through the concept of dispatching events.

- The Observer pattern relates to Publish/Subscribe message exchange patterns through the one-to-many relationship of a publisher to listeners.

On the Networking front:

- Every packet has an IP address, which is analogous to the topic that an event is sent or received on.

- The concept of IP packet routing relates to message routing in the event broker via topic matching.

- Egress and ingress traffic routing through multiple networks relates to the behaviour of an event mesh in event-driven architecture.

- The anatomy of packets in a network is broken down in a way that relates to the anatomy of a message.

- The way subnet masks act as filters allowing specific IP ranges relates to using wildcards in topic matching and filtering.
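The subnet mask and wildcard analogy from the list above can be sketched side by side. The helper names are hypothetical, and the wildcard syntax is an assumption for this example: `*` matches exactly one topic level and a trailing `>` matches one or more remaining levels. Check your broker’s documentation for its actual rules.

```javascript
// Convert dotted IPv4 text such as "192.168.1.42" to an unsigned 32-bit integer.
function ipToInt(ip) {
  return ip
    .split(".")
    .reduce((acc, octet) => ((acc << 8) | Number(octet)) >>> 0, 0);
}

// A subnet mask filters addresses: only the masked (network) bits are compared.
function inSubnet(ip, cidr) {
  const [network, bitsText] = cidr.split("/");
  const bits = Number(bitsText);
  const mask = bits === 0 ? 0 : (0xffffffff << (32 - bits)) >>> 0;
  return ((ipToInt(ip) & mask) >>> 0) === ((ipToInt(network) & mask) >>> 0);
}

// A wildcard subscription filters topics level by level.
// Assumed syntax: "*" = exactly one level, trailing ">" = one or more levels.
function topicMatches(pattern, topic) {
  const p = pattern.split("/");
  const t = topic.split("/");
  for (let i = 0; i < p.length; i++) {
    if (p[i] === ">" && i === p.length - 1) return t.length > i;
    if (i >= t.length) return false;
    if (p[i] !== "*" && p[i] !== t[i]) return false;
  }
  return p.length === t.length;
}

console.log(inSubnet("192.168.1.42", "192.168.1.0/24"));           // true
console.log(inSubnet("192.168.2.42", "192.168.1.0/24"));           // false
console.log(topicMatches("orders/*/created", "orders/eu/created")); // true
console.log(topicMatches("orders/>", "orders/eu/created"));         // true
console.log(topicMatches("orders/*", "orders/eu/created"));         // false
```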

And finally on the Operating Systems front:

- The behaviour of a scheduler relates to an event broker through dispatching and demultiplexing of events to threads.

- Process scheduling queues keep track of all the processes in the system, similar to how a broker queue keeps track of the guaranteed messages on the broker.

- Operating system threads consume resources similar to how a consumer in an event-driven architecture consumes messages.

- Input/Output operations relate to Publish/Subscribe message exchange patterns in that an input device sends instructions to the operating system and an output device receives commands.
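To illustrate the broker queue idea from the list above, here is a minimal in-memory sketch in which a message stays on the queue until a consumer acknowledges it. `BrokerQueue`, `deliverNext`, and the acknowledge-by-returning-`true` convention are assumptions for the example; real brokers persist messages and use explicit acknowledgement APIs.

```javascript
// In-memory stand-in for a broker queue holding guaranteed messages.
class BrokerQueue {
  constructor() {
    this.messages = []; // kept in arrival order until acknowledged
  }

  enqueue(message) {
    this.messages.push(message);
  }

  get depth() {
    return this.messages.length;
  }

  // Offer the oldest message to the consumer; remove it only when the
  // consumer acknowledges by returning true. Otherwise it stays queued.
  deliverNext(consumer) {
    if (this.messages.length === 0) return false;
    const acknowledged = consumer(this.messages[0]) === true;
    if (acknowledged) this.messages.shift();
    return acknowledged;
  }
}

const orderQueue = new BrokerQueue();
["m1", "m2", "m3"].forEach((m) => orderQueue.enqueue(m)); // consumer not running yet

const processed = [];
let attempts = 0;
const flakyConsumer = (message) => {
  attempts += 1;
  if (attempts === 1) return false; // simulate a failure on the first try
  processed.push(message);
  return true;
};

while (orderQueue.depth > 0) orderQueue.deliverNext(flakyConsumer);

console.log(processed); // [ 'm1', 'm2', 'm3' ]
console.log(attempts);  // 4
```

Nothing is lost when the consumer fails once; the message is simply redelivered on the next attempt.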

4. Building web applications: REST vs. event-driven

Now that the theoretical background of my journey is covered, I’d like to talk about how this relates to building web applications. If REST APIs have already been adopted for building applications and are considered the de facto solution in many industries, why should we bother ourselves with finding an alternative? Why should we consider an event-driven approach for operational use cases?

Increased demand for immediate access to insights has shown that time-critical applications cannot rely on REST for real-time feedback and actions. With the recent increase in demand for high-availability services, architectural designs like microservices and containerization started gaining a lot of popularity. Tooling around microservices deployment strategies and container orchestration (e.g., Kubernetes, EKS, AKS, Docker Swarm) also became more popular.

According to Forbes, data is becoming the new oil, with constantly streamed data representing a constant flow of events. An example of this is a stock ticker; every time a stock price changes, a new event is created. It’s called “streaming data” because there are thousands of these events resulting in a constant stream of data. Reactive patterns advocate for resilient systems that are message-driven as opposed to polling-dependent. As per the Reactive Manifesto, “Today applications are deployed on everything from mobile devices to cloud-based clusters running thousands of multi-core processors. Users expect millisecond response times and 100% uptime.”

These design requirements motivated me to learn more about event-driven architecture and exposed me to the issues of REST-only and REST-heavy architectural choices for building applications. Keeping the Reactive Manifesto in mind, I’ve summarized a high-level comparative list between REST-based and messaging-based solutions below. Disclaimer: this is not a comprehensive list.

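| | Pros | Cons |
|---|---|---|
| REST-Driven Systems | High-quality, industry-approved tooling and documentation; industry adoption and support; simplicity of implementation | Synchronous request-reply; point-to-point communication only; tight coupling that can result in a distributed monolith; hard to extend without modifying clients |
| Event-Driven Systems | Loose coupling between microservices; easier scaling and handling of traffic spikes; eventual consistency without two-phase commit or distributed transactions | Complexity of handling asynchronous messages; coupling to the messaging middleware infrastructure |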

Adopting a REST-driven architectural design has several advantages. These include the availability of high-quality, industry-approved tooling and documentation; industry adoption and support; and simplicity of implementation. However, the drawback of a REST-heavy system is that it’s synchronous in nature. This means that it’s built on a request-reply communication concept, allowing only point-to-point communication: the client must always call the server, the server cannot reach out to the client, and the client cannot reach out to other clients directly. Through the adoption of a client-server architecture and reliance on HTTP, tight coupling in a microservices architecture could result in a distributed monolith. The one-to-one nature of REST means that a separate REST call is needed for every endpoint, which makes it hard to extend the architecture without modifying the clients. I have seen that it is common to end up with a distributed monolith instead of the intended decoupled microservice architecture, and this leads to a lot of wasted time and money.

On the other hand, an event-driven system reduces the amount of coupling between microservices. This means that a message sender does not need to know about any consumer; the only coupling in EDA is a topic hierarchy structure. Loose coupling enables easier scaling of the system and handling of traffic spikes through shock-absorbing and queuing mechanisms. In message-oriented systems, applications produce events without controlling how those events are consumed. This contrasts with REST-based systems, which rely on synchronous, tight coupling between the client and the server.
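Here is a rough in-memory sketch of that decoupling. `TinyBroker`, `placeOrder`, and the `orders/created` topic are made-up names, and a real event broker would of course run as separate middleware rather than in-process. The point is only that the publisher knows a topic, not its consumers.

```javascript
// Minimal in-process stand-in for an event broker (exact-match topics only).
class TinyBroker {
  constructor() {
    this.subscriptions = new Map(); // topic -> Set of subscriber callbacks
  }

  subscribe(topic, callback) {
    if (!this.subscriptions.has(topic)) this.subscriptions.set(topic, new Set());
    this.subscriptions.get(topic).add(callback);
  }

  // Deliver the payload to every subscriber of the topic and
  // report how many subscribers received it.
  publish(topic, payload) {
    const callbacks = this.subscriptions.get(topic) ?? new Set();
    for (const callback of callbacks) callback(payload);
    return callbacks.size;
  }
}

const broker = new TinyBroker();
const billed = [];
const shipped = [];

// The publisher only knows the topic name, never the consumers.
function placeOrder(orderId) {
  return broker.publish("orders/created", { orderId });
}

broker.subscribe("orders/created", (event) => billed.push(event.orderId));
const firstFanOut = placeOrder(1);

// A new consumer is added later without touching placeOrder at all.
broker.subscribe("orders/created", (event) => shipped.push(event.orderId));
const secondFanOut = placeOrder(2);

console.log(billed);  // [ 1, 2 ]
console.log(shipped); // [ 2 ]
```

In a REST-only design, adding the shipping consumer would typically mean changing the caller to make one more request.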

Eventual consistency is intuitively handled in an event-driven system using a message broker, enabling parallel execution of microservices and making sure that each microservice will eventually come to a state of completion. This allows the system components to eventually become consistent with the state of the system without the need for a two-phase commit or distributed transactions. However, the complexity of handling asynchronous messages when using event brokers can be a challenge. This challenge comes with the adoption of any new architecture, and like most developers, I find a healthy challenge encouraging! Another drawback of an event-only system is the coupling to the messaging middleware infrastructure, so when adopting a messaging-based architecture, one should take into account multi-protocol compatibility along with interoperability with other message brokers.

5. Is EDA a silver bullet?

Neither REST nor event-driven architecture is best for every scenario, and choosing one depends on what you’re trying to accomplish. Large distributed systems and microservice architectures are built on a mix of technologies and architectural patterns. An architecture that combines REST and event-driven architecture would deliver the full potential of real-time development and interoperability. Event-enabling a REST architecture allows you to combine traditional REST interactions with publish-subscribe communication, with a message broker acting as the middleware layer for message flow.
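One possible shape for event-enabling a REST interaction is sketched below: the handler still returns a synchronous reply to its caller, and it also publishes an event for anyone else who is interested. There is no real HTTP server or broker here; `createOrderHandler`, the `publish` callback, and the `orders/created` topic are all hypothetical.

```javascript
// Hypothetical handler for "POST /orders". The `publish` function is injected,
// so any broker client with a (topic, payload) call could be plugged in.
function createOrderHandler(publish) {
  let nextId = 1;
  return function handlePostOrders(requestBody) {
    const order = { id: nextId++, item: requestBody.item };
    publish("orders/created", order);    // notify subscribers, whoever they are
    return { status: 201, body: order }; // synchronous REST-style reply
  };
}

// Stand-in for a broker client: it just records what was published.
const published = [];
const handlePostOrders = createOrderHandler((topic, payload) => {
  published.push({ topic, payload });
});

const response = handlePostOrders({ item: "book" });

console.log(response.status);    // 201
console.log(published[0].topic); // orders/created
```

The REST client gets its request-reply interaction, while downstream services can react to the event without the client knowing they exist.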

As developers in this modern world, we are no longer just concerned with applications that we write in a silo. Considering the effects of our applications on other parts of the business is becoming more important than ever as DevOps, microservices, and integrations all become everyday terms that a regular developer in the tech industry hears and uses. Furthermore, creating applications that can support a spike in traffic at any point in time is becoming more crucial.

Conclusion

This is my personal event-driven development journey, and like all tech journeys a developer chooses to take, there is always something to learn. I don’t know about you, but I am a developer driven by a challenge, with a thirst for solving technical problems using slick tools and architectures! Who knows what architectural trend will come up next with the rise of cloud infrastructures (hybrid/multi-cloud) and new technologies? Regardless of the new tech, it’s important to test it out for yourself, conduct research, keep an open mind, and be ready to learn and grow!

Thanks for taking the ride with me. Do YOU have an event-driven journey or a different experience you’d like to share? Join the Solace Community or find me on Twitter @tweetTamimi!


Tamimi Ahmad