Solace is a 20-year-old company that started in Ottawa in 2001, as the world was just recovering from the burst of the dot-com bubble. A lot has changed in the last 20 years. The world has become increasingly connected and the amount of data streaming across digital devices continues to grow exponentially.

For many years, Solace’s customers were mostly concentrated in the financial services domain, especially capital markets. This included large investment banks such as Barclays and RBC, exchange venues, and smaller buy-side firms across North America. Over time, Solace expanded to dominate capital markets globally with most of the major global investment banks relying on Solace PubSub+ brokers for their critical message flows. In the last few years, Solace has leveraged its expertise in capital markets to expand into other verticals such as retail, IoT, payments, and manufacturing where a new need for real-time data has arisen.

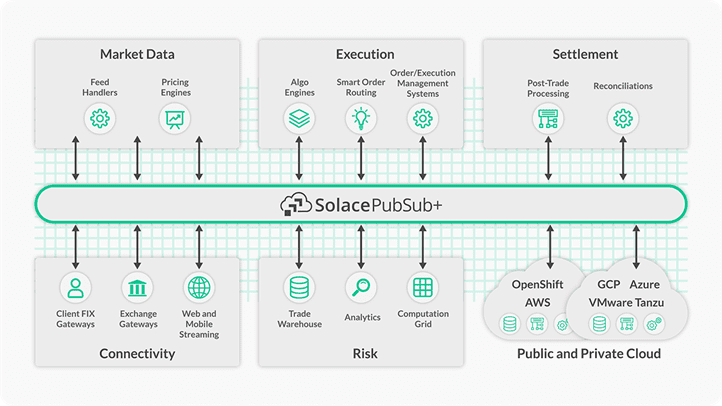

With 20 years of experience, we have observed some key use cases at almost every one of our customers in capital markets. These are some of the most challenging use cases, and they require a battle-tested solution that can also satisfy all of the regulatory requirements.

So, what are these use cases and how does Solace enable them?

I’ll be diving into specific use cases in a series of blog posts, but before I begin, let’s ensure we are all on the same page about what capital markets are, who the key players are, and what they are trying to achieve.

Capital Markets Overview

Capital markets is a broad umbrella term commonly used to refer to an industry that enables parties such as businesses and individuals to exchange liquidity. You have suppliers such as banks and investors that provide liquidity by offering capital, and you have businesses, governments, and individuals who seek liquidity. The suppliers are looking to earn returns on their capital whereas the counterparties are seeking cheap capital for projects.

Capital markets are composed of the counterparties mentioned above as well as the venues where the transactions happen. The most popular venue is a public stock exchange such as NYSE, LSE, or NASDAQ where counterparties connect to exchange liquidity. Capital markets consist of primary markets, where parties raise capital by offering shares in their companies or issuing debt instruments, and secondary markets, where those securities are subsequently traded.

The key institutions in capital markets include investment banks, broker-dealers, exchange venues, asset managers, and hedge funds. Many of these can be grouped into two wider categories: buy-side and sell-side, where buy-side firms include asset managers and hedge funds and sell-side firms include investment banks and broker-dealers.

While there are several independent broker-dealers, a lot of investment banks also have brokerage facilities where they operate as broker-dealers for their customers. Additionally, most, if not all, investment banks also have proprietary (prop) trading desks where they trade securities for their own profit. To avoid conflicts of interest, these individual businesses/teams are isolated from other operations within the investment banks.

The buy-side firms include asset managers and hedge funds who invest their investors’ money for higher returns. There are firms that specialize in different types of investment strategies such as long/short, momentum, and value. They may also focus on specific asset classes such as equities, FX, fixed income, and commodities. These firms employ prime brokerage services from a broker-dealer (or an investment bank with broker-dealer services) so that they can trade on exchange venues and obtain leverage.

Exchange venues are simply venues that allow the exchange of liquidity. They connect buyers with sellers. Historically, these venues were completely physical and quite chaotic. In recent times, they have moved most, if not all, of their operations online. You don’t have to be physically present at the New York Stock Exchange (NYSE) to trade 100 shares of IBM. You can do so from a GUI provided to you by your brokerage firm. Exchanges are responsible for handling orders from different counterparties and providing trade confirmations to settlement and clearing houses.

Finally, we have clearing and settlement houses that play a vital role in the lifecycle of a trade. Whenever you have multiple parties involved, you need a third party to facilitate the transaction, handle reporting, and settle the trades. There are a few large quasi-governmental settlement and clearing corporations around the world that most brokerage firms and exchange venues use. Every trade gets reported to one of these firms.

Now that we understand, at a high-level, who the key players are in capital markets, let’s discuss their specific use cases.

Capital Markets at the Core: Trade Processing

Each of the key players discussed previously has slightly different use cases and concerns but at the core of it, they are all trying to process trades. How exactly the trades are processed and what’s involved pre-trade and post-trade dictates their use cases.

The most common use cases which I will cover in this series of blog posts are grouped under two umbrellas: pre-trade and post-trade. Pre-trade covers everything that happens before the actual execution of the trade and post-trade consists of what happens after the trade has been processed.

Within pre-trade, there are several different use cases:

- Market data/reference data distribution

- Front-end UIs for information display and collecting orders

- Order Management/Execution Management Systems for trade flows

- Risk

Within post-trade, the use cases are:

- Settlement and clearing

- Reporting/PNL

- Risk

In my upcoming series of blog posts, I’ll dive deep into five of these use cases: market data, front-end UIs, order management, reporting/P&L, and risk management, starting with the distribution of market and reference data below.

Use Case for EDA: Market Data and Reference Data Distribution

Over the last few decades, trading has shifted from physical exchanges to digital ones where everything is recorded and tracked digitally. The amount of data being collected and distributed between parties has exploded recently and it has become a very profitable business to sell this data to clients.

As algorithmic trading executed by machines becomes more and more common, companies need lots of data and compute resources to drive insights about what securities to trade, where to trade, and how to track them after the trade. Traders can get aggregated securities and trading data feeds for venues across the globe from specialized vendors such as Refinitiv and Bloomberg, as well as direct feeds from the exchanges themselves.


There are different sets of data feeds that companies leverage based on the kind of trading activity and strategies they are deploying. The most common types of data feeds are market data (or pricing) feeds and reference data feeds. Market data feeds are real-time (but can be historical as well) and are responsible for providing high-throughput, low-latency pricing updates for securities. These updates can be L1 price updates for quotes and trades or L2 market book updates. In contrast, reference data updates are typically not real-time and generally don’t fall in the high-throughput category either. Reference data describes the securities themselves: their name, where they are traded, the different security tickers associated with them, the currency they trade in, and so on. This information doesn’t change frequently but is vital for companies to ensure proper monitoring of their positions and execution of trades.

Investment banks and hedge funds commonly have dedicated Market Data and Tick Data teams. The Market Data team is responsible for managing the infrastructure associated with market data feeds from large vendors such as Refinitiv and Bloomberg. They also keep track of cost and entitlements. Market data feeds are expensive, and exchanges and data vendors require clients to have appropriate entitlement controls.

The Tick Data team usually leverages a specialized time-series database such as kdb+ by KX or OneTick by OneMarketData to capture, store, and analyze streaming data in real-time. These databases subscribe to the market data feeds and store the incoming data in-memory for quick processing. After a certain time period, usually end of day, the data is persisted to disk.

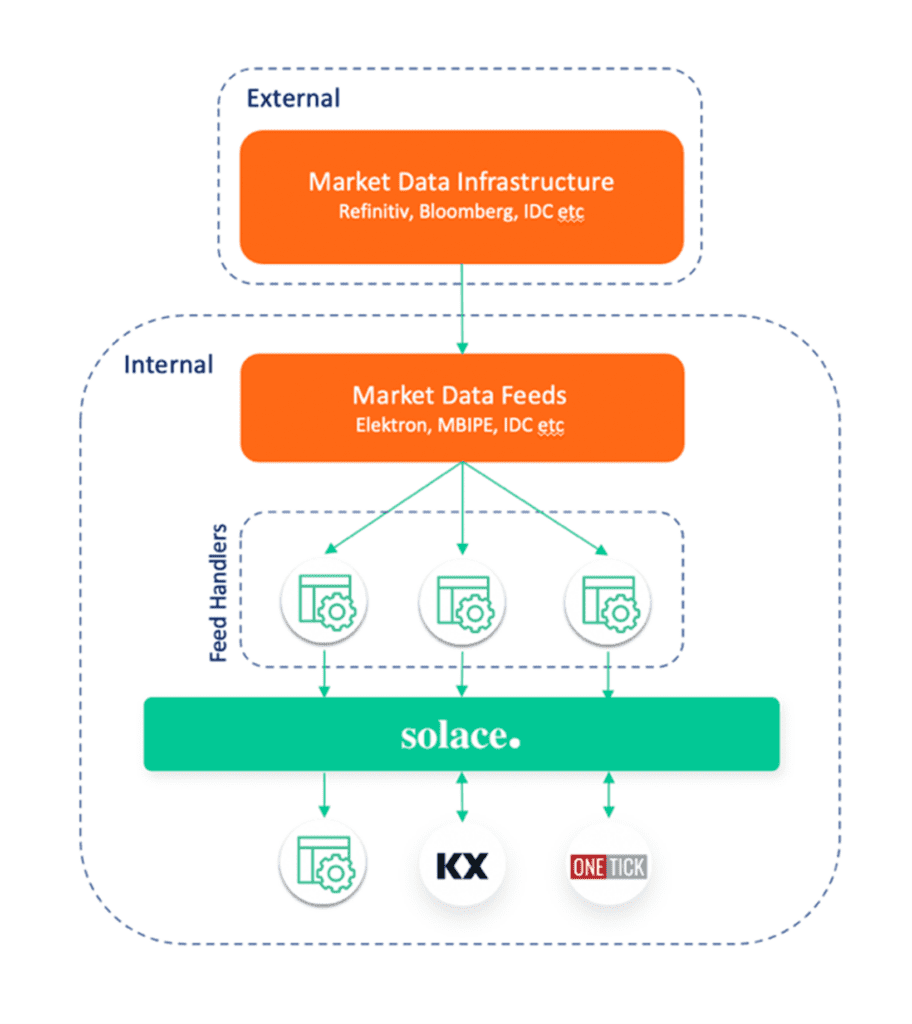

The architecture of distribution of market data typically looks like this:

In this reference architecture, there is market data infrastructure managed by data vendors. Additionally, there are market data feeds and additional infrastructure managed by clients. If you are getting data from Refinitiv, you might rely on their TREP infrastructure to get the Elektron data feed. You will also have to manage entitlements using Refinitiv’s DACS or Bloomberg’s EMRS service. Internal feed handlers connect to this external infrastructure and subscribe to relevant data. If the client only trades equities in the US, then there is no point subscribing to data for international securities. Feed handler processes are also responsible for normalizing the data (if the feed is not normalized) and mapping raw data fields to standardized fields. Refinitiv’s Elektron feed is not normalized whereas Bloomberg’s MBPIPE feed is.
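To make the normalization step concrete, here is a minimal Python sketch of what a feed handler's field mapping might look like. The vendor names, raw field names, and the `Quote` shape are all hypothetical and chosen only for illustration; real vendor feeds use their own field dictionaries.

```python
from dataclasses import dataclass

# Hypothetical raw-to-standard field maps; real vendor field names differ.
FIELD_MAPS = {
    "vendor_a": {"SYM": "ticker", "BID_PX": "bid", "ASK_PX": "ask"},
    "vendor_b": {"symbol": "ticker", "bestBid": "bid", "bestAsk": "ask"},
}


@dataclass(frozen=True)
class Quote:
    """Standardized L1 quote used by all downstream consumers."""
    ticker: str
    bid: float
    ask: float


def normalize(feed: str, raw: dict) -> Quote:
    """Map one raw vendor message onto the standardized Quote fields."""
    mapping = FIELD_MAPS[feed]
    # Keep only the fields we know how to map; ignore everything else.
    std = {mapping[name]: value for name, value in raw.items() if name in mapping}
    return Quote(
        ticker=str(std["ticker"]).lower(),
        bid=float(std["bid"]),
        ask=float(std["ask"]),
    )
```

With a mapping like this, messages from either hypothetical vendor end up in the same shape, so downstream applications do not need to know which feed a quote came from.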

This data is then published over Solace’s PubSub+ brokers, often the PubSub+ appliances, which specialize in high-throughput, low-latency use cases such as market data distribution. These appliances are engineered for performance and availability. Once the data is flowing over Solace brokers, downstream clients such as PNL applications, Risk applications, and tick databases can easily subscribe to it.

Solace has integrations with two of the most popular tick databases, kdb+ and OneTick, that most financial firms use to capture and analyze market data. You can subscribe to topics directly from these databases and capture incoming streaming market data. As the data is being captured, it can be analyzed in real time using CEP (Complex Event Processing) and published back to Solace for downstream applications to consume the derived data. For example, a common use case is to generate minutely stats with highs, lows, averages, and standard deviations. Common downstream applications interested in tick data are PNL, Risk, and TCA (transaction cost analysis).
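As a rough illustration of the minutely stats mentioned above, the following Python sketch buckets ticks by minute and computes the high, low, average, and standard deviation for each bucket. It is not how kdb+ or OneTick do it; the `(epoch_seconds, price)` tick shape and the choice of population standard deviation are assumptions made for this example.

```python
from collections import defaultdict
from statistics import mean, pstdev


def minute_stats(ticks):
    """Group (epoch_seconds, price) ticks into one-minute bars of summary stats."""
    buckets = defaultdict(list)
    for ts, price in ticks:
        # Floor the timestamp to the start of its minute.
        buckets[int(ts) // 60 * 60].append(price)
    return {
        minute: {
            "high": max(prices),
            "low": min(prices),
            "avg": mean(prices),
            "std": pstdev(prices),  # population std dev (an assumption)
        }
        for minute, prices in sorted(buckets.items())
    }
```

In a streaming setting the same calculation would run incrementally as ticks arrive, with each completed bar published for downstream applications.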

With Solace’s support for dynamic and hierarchical topics, clients can leverage dynamic filtering. For example, feed handlers can publish normalized market data using the following topic taxonomy: marketdata/<feed>/<asset_class>/<region>/<venue>/<ticker>/<granularity>

Where a sample topic might be: marketdata/bbg/eq/us/nyse/ibm/tick

A stats process might subscribe to securities traded on NYSE only and generate minutely stats for them. Instead of subscribing to all the market data and then filtering NYSE data itself, it can simply subscribe to: marketdata/bbg/eq/us/nyse/>

As it generates stats every minute, it will publish data on a slightly different topic: marketdata/bbg/eq/us/nyse/<ticker>/stats
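The taxonomy and wildcard subscription above can be sketched in a few lines of Python. This is a simplified model of hierarchical topic matching written for this post, not Solace's implementation or API: it assumes `>` as the last level matches one or more remaining levels and a trailing `*` matches the rest of a single level. The helper names `build_topic` and `matches` are hypothetical.

```python
def build_topic(feed, asset_class, region, venue, ticker, granularity):
    """Build a topic following the marketdata/... taxonomy described above."""
    levels = ["marketdata", feed, asset_class, region, venue, ticker, granularity]
    return "/".join(level.lower() for level in levels)


def matches(subscription: str, topic: str) -> bool:
    """Simplified wildcard match between a subscription and a concrete topic."""
    sub_levels = subscription.split("/")
    topic_levels = topic.split("/")
    for i, sub in enumerate(sub_levels):
        if sub == ">" and i == len(sub_levels) - 1:
            # '>' must be matched by at least one remaining level.
            return len(topic_levels) > i
        if i >= len(topic_levels):
            return False
        if sub.endswith("*"):
            # Trailing '*' matches the rest of this one level only.
            if not topic_levels[i].startswith(sub[:-1]):
                return False
        elif sub != topic_levels[i]:
            return False
    return len(sub_levels) == len(topic_levels)
```

One design point worth noting: under these rules `marketdata/bbg/eq/us/nyse/>` also matches the `/stats` topics, so a stats process that wants only raw ticks could subscribe to `marketdata/bbg/eq/us/nyse/*/tick` instead and avoid receiving its own output.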

As referenced earlier, clients are expected to monitor access to raw data and report that to the vendor. Solace supports ACL profiles where Solace admins can limit which topics a client can publish or subscribe to. For example, since our stats process is only interested in NYSE data, we can restrict it to NYSE data only. If it tries requesting data for any other exchange, it will get an authorization error.
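The idea behind such an ACL can be modeled as a default action plus a list of exceptions. The Python sketch below is a toy model of that idea only; the `AclProfile` class, its field names, and the `subscribe` helper are hypothetical and do not reflect the broker's actual configuration objects or client API.

```python
import re
from dataclasses import dataclass, field


def _to_regex(pattern: str):
    """Translate a topic pattern into a regex (simplified wildcard rules)."""
    levels = pattern.split("/")
    parts = []
    for i, level in enumerate(levels):
        if level == ">" and i == len(levels) - 1:
            parts.append(".+")  # one or more remaining levels
        elif level.endswith("*"):
            parts.append(re.escape(level[:-1]) + "[^/]*")  # rest of one level
        else:
            parts.append(re.escape(level))
    return re.compile("/".join(parts))


@dataclass
class AclProfile:
    """Toy ACL: a default action plus exceptions that flip it."""
    subscribe_default_allow: bool = False
    subscribe_exceptions: list = field(default_factory=list)

    def may_subscribe(self, topic: str) -> bool:
        is_exception = any(
            _to_regex(p).fullmatch(topic) for p in self.subscribe_exceptions
        )
        # An exception inverts whatever the default action is.
        return is_exception != self.subscribe_default_allow


def subscribe(profile: AclProfile, topic: str) -> str:
    """Hypothetical subscribe call that enforces the ACL before subscribing."""
    if not profile.may_subscribe(topic):
        raise PermissionError(f"not authorized to subscribe to {topic}")
    return topic
```

For the stats process, a profile with a default of disallow and a single exception of `marketdata/bbg/eq/us/nyse/>` would accept NYSE subscriptions and reject a request for any other venue.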

Hybrid/Multi-Cloud and Event Mesh

Recently, financial services firms have started seriously considering having ‘market data in the cloud.’ The major market data vendors have started offering some sort of cloud service that lets you capture data directly into one of the major cloud service providers’ services. For example, both Bloomberg and Refinitiv have feeds that clients can subscribe to directly in the cloud and persist data to cloud-native databases such as AWS’s RDS and GCP’s BigQuery. However, given the large investments clients have made in on-prem market data infrastructure, the large size of the datasets, and the requirement for low latency, it is difficult to see clients fully adopting market data in the cloud. Nonetheless, there is potential for a hybrid model where on-prem market data infrastructure remains, but a subset of that data is distributed to cloud-native services. An event mesh powered by Solace is well suited to such a hybrid cloud requirement.

Clients can continue using their on-prem market data infrastructure and distributing data over on-prem Solace brokers. For any cloud-native services, they simply need to spin up a Solace PubSub+ broker in their cloud environment and link it with the on-prem broker using dynamic message routing (DMR). An event mesh ensures that only the data that is required in the cloud traverses the WAN link, saving clients network cost as well as providing additional security. Clients don’t have to replicate all their data to their cloud environment only to discard most of it. Similarly, messages can flow in the opposite direction, from cloud to on-prem. For example, the cloud service might be responsible for computing minutely bar stats. As it computes those stats, it can distribute that data over Solace PubSub+ brokers, and any on-prem or cloud application can access it.
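The effect on WAN traffic can be illustrated with a toy Python model. This is not how DMR is implemented; it only shows the principle that messages are forwarded to the cloud when a cloud-side subscription matches them. The `forward_to_cloud` function and the sample traffic are hypothetical, and for brevity it handles only subscriptions ending in `/>`.

```python
def forward_to_cloud(messages, cloud_subscriptions):
    """Return only the (topic, payload) pairs that a cloud subscriber asked for."""
    # Treat 'a/b/>' as the prefix 'a/b/'; other wildcard forms are ignored here.
    prefixes = tuple(s[:-1] for s in cloud_subscriptions if s.endswith("/>"))
    return [(topic, payload) for topic, payload in messages if topic.startswith(prefixes)]


# Hypothetical on-prem traffic: three venues, but the cloud only wants NYSE.
on_prem_traffic = [
    ("marketdata/bbg/eq/us/nyse/ibm/tick", b"q1"),
    ("marketdata/bbg/eq/us/nasdaq/aapl/tick", b"q2"),
    ("marketdata/bbg/eq/uk/lse/vod/tick", b"q3"),
    ("marketdata/bbg/eq/us/nyse/ge/tick", b"q4"),
]
forwarded = forward_to_cloud(on_prem_traffic, ["marketdata/bbg/eq/us/nyse/>"])
```

Here only the two NYSE messages would cross the WAN link; the NASDAQ and LSE messages stay on-prem because nothing in the cloud subscribed to them.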

Solace Client Spotlight: Trading Firms and Investment Banks

One of the World’s Largest Investment Banks

One of Solace’s clients, one of the world’s largest investment banks, was looking to retire a legacy messaging system and migrate to a more performant, modern, and cloud-friendly alternative. The legacy solution had been used as a broker for frontend applications for several years but lacked key features such as DMR, support for large payloads, an easy-to-use UI, and portability to the cloud.

Multiple teams within the investment bank have adopted Solace’s PubSub+ broker as the next-gen messaging system as they migrate their applications away from the legacy broker. These applications have a frontend layer that leverages Solace’s native support for WebSockets and large payload sizes (30MB for direct messaging). Additionally, Solace supports message eliding and allows teams to use VPNs (virtual brokers) to keep their configurations separate. Multiple bridging options, such as static bridging and dynamic message routing, give the bank’s teams flexibility as they look to expand their Solace footprint to more regions.

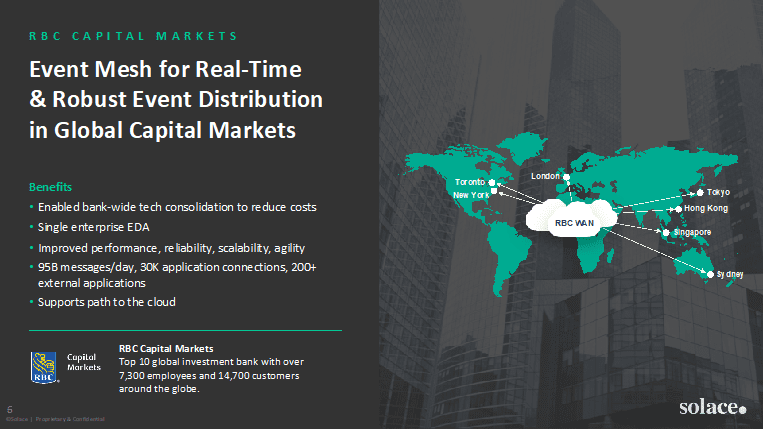

RBC Capital Markets

RBC Capital Markets is the corporate and investment banking arm of the Royal Bank of Canada, and among the top 10 investment banks in the world. Around 2010, RBC Capital Markets was looking to move away from incumbent vendors. They had a variety of messaging systems deployed across hundreds of servers, but it was impossible to seamlessly make a change to one environment without impacting the others.

RBC now has over 50 Solace event broker appliances deployed to act as the message bus of their low-latency trading platform across Sydney, Hong Kong, Tokyo, London, New York, and Toronto. Solace technology ensures RBC’s rapid, reliable data flow across 25,000 application connections. They recently surpassed 118 billion messages in a single day without any data loss.

Grasshopper

Grasshopper, a high-frequency trading firm based in Singapore, was interested in building a hybrid-cloud architecture for their market data distribution platform and research infrastructure. Grasshopper wanted to capture high-frequency market data and trading events from different exchanges and process it in real-time to generate the order book which would be used by downstream trading and analytics applications. The output would be written to GCP’s BigQuery.

The challenge here is that the market data is collected from its co-located environments (Colos). These are on-prem datacenters of stock exchanges, and companies choose to deploy their servers there to capture data with low latency. To be able to use both on-prem and cloud solutions, Grasshopper relied on Solace’s event mesh capabilities. They used Solace’s PubSub+ Event Broker appliances deployed in Colos and PubSub+ software brokers deployed in GCP. The brokers were then connected to form an event mesh.

The financial market data is streamed from the on-prem Colos to GCP via Solace and is consumed by data pipelines written using Apache Beam and deployed via Cloud Dataflow in GCP. Solace provides Apache Beam integration through the SolaceIO connector. Once the data has been successfully processed, it can be written to BigQuery. More information can be found in this Google Cloud blog post.

Conclusion

I hope you found this overview of capital markets and the first use case for real-time data helpful. Be sure to check out the next posts in the series as they are published.


Himanshu Gupta
