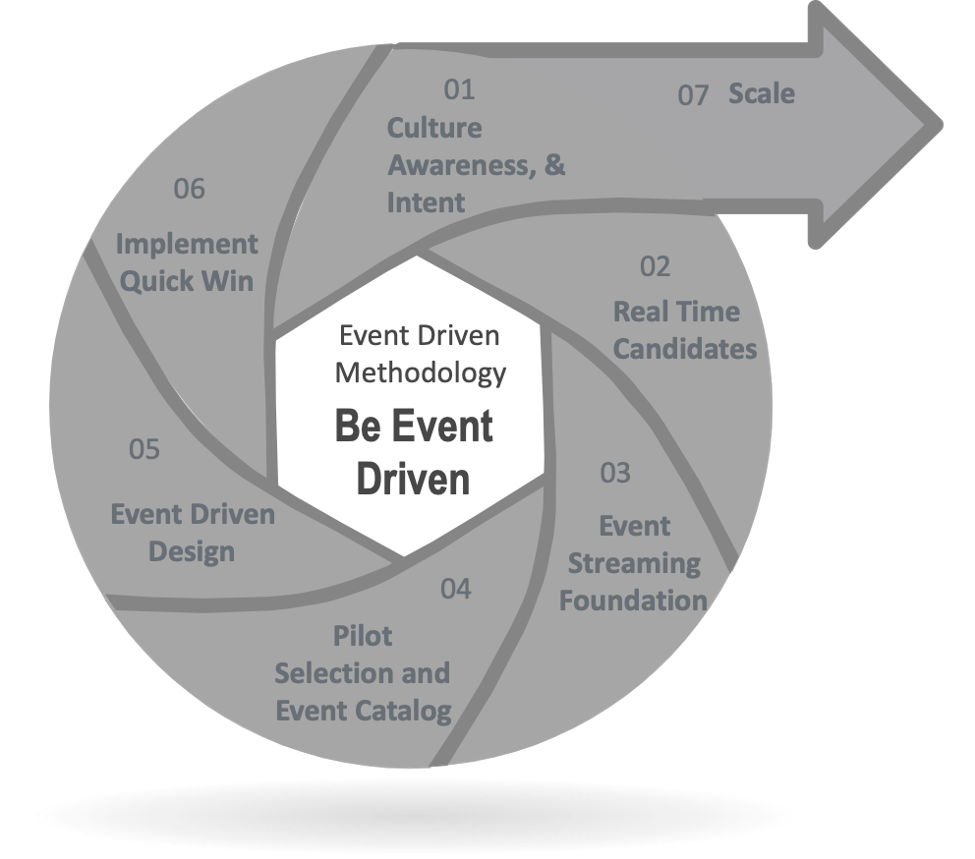

Implementing event-driven architecture is a journey, and like all journeys, it begins with a single step. To get started down this path, you need a good understanding of your data, but more importantly, you need to adopt an event-first mindset. In this article, I’ll explain how to implement event-driven architecture in six steps, plus one more for scaling:

- Assess Culture, Awareness, and Intent

- Identify Real Time Candidates

- Build Your Event-Driven Architecture Foundation

- Pick Pilot Application and Event Catalog

- Decompose the Event Flow into Asynchronous, Event-Driven Microservices

- Get a Quick Win

- Rinse and Repeat + Scale

Or, you can dive right into the details by downloading my free guide: Architect’s Guide to Implementing Event-Driven Architecture

Before you begin this journey, you must come to think of everything that happens in your business as a digital event, and think of those digital events as first-class citizens in your IT infrastructure.

Every business process is basically a series of events, whether it’s the initiation of a payment, detection of fraudulent activity, or a container landing at a port. As businesses become more real time and focus more on customer experience, application architecture needs to be upgraded to keep up, and event-driven architecture is a great paradigm for doing so.

For an enterprise to be truly real time, it must stream events between the applications and microservices that process them, derive insights, and make rapid decisions.

Why Event Driven?

Event-driven architecture is a way of building enterprise IT systems that lets loosely coupled applications and microservices produce and consume events. These applications and microservices talk through events or event adapters. Events are routed among them in a publish/subscribe manner, according to subscriptions that indicate which topics each one is interested in.

In other words, rather than a batch process or an enterprise service bus (ESB) orchestrating a flow, business flows are dynamically choreographed based on each business logic component’s interest and capability. This makes it much easier to add new applications, as they can tap into the event stream without affecting any other system, do their thing, and add value. This allows for a truly real time, agile, and responsive architecture.
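To make the publish/subscribe idea concrete, here is a minimal sketch in Python. It is a toy, in-memory stand-in for an event broker and not any real product's API: the `InMemoryBroker` class, the `payment/initiated` topic, and the payload fields are all assumptions I made up for illustration. What it shows is that a second consumer can start listening to an existing event stream without the publisher or the first consumer changing at all.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], None]


class InMemoryBroker:
    """Toy stand-in for an event broker: exact-match topics, in-process delivery."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register interest in a topic."""
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver the event to every subscriber of the topic; return how many received it."""
        handlers = list(self._subscribers.get(topic, []))
        for handler in handlers:
            handler(event)
        return len(handlers)


broker = InMemoryBroker()
billing_seen: List[dict] = []
fraud_seen: List[dict] = []

# The first consumer (hypothetical billing service) subscribes to payment events.
broker.subscribe("payment/initiated", billing_seen.append)
delivered_before = broker.publish("payment/initiated", {"payment_id": "p-1", "amount": 40})

# A new consumer taps into the same stream; nothing else is modified.
broker.subscribe("payment/initiated", fraud_seen.append)
delivered_after = broker.publish("payment/initiated", {"payment_id": "p-2", "amount": 9000})
```

The publisher never names its consumers, which is the decoupling that makes adding the fraud consumer a one-line change. A real broker would add persistence, delivery guarantees, and network transport on top of this idea.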

Event-driven architecture is not new – GUIs and capital markets trading platforms have always been built this way. It’s just becoming more mainstream now, mainly because service-oriented architecture (SOA); extract, transform and load (ETL); and batch-based approaches need to evolve to meet real time needs.

How to Implement Event-Driven Architecture – The 6+1 Steps

To start your event-driven journey, there is a three-step strategy: event-enable existing systems, modernize your platform, and alert and inform. So how do you put this strategy into practice? The following six steps have been proven in many real-world implementations to make the journey to event-driven architecture faster, smoother and less risky.

Step 1: Assess Culture, Awareness, and Intent

Are you ready and aware, and do you have the intent to implement event-driven architecture?

I’m sure if you’re reading this, then the answer is “yes!” However, most people in mainstream IT were trained in school to think procedurally. Whether you started with Fortran or C like me, or Java or Node, most IT training and experience has been around function calls, RPC, or web service calls to APIs – all synchronous. There are definitely exceptions to the rule – capital markets front office systems have always been event-driven because they had to be real time from the start! But more often than not, IT needs a little culture change.

Microservices don’t need to be calling each other, creating a distributed monolith. As an architect, as long as you know which applications/microservices consume and produce which events, you can just choreograph using a publish/subscribe event broker – or a distributed network of brokers (also known as an event mesh) – rather than orchestrate with an ESB.

It’s important to ponder a little, read up, educate yourself, and educate stakeholders about the benefits of event-driven architecture – agility, responsiveness and better customer experience. Build support, strategize, and ensure that the next project – the next transformation, the next microservice, the next API – will be done the event-driven way.

You will need to think about which use cases can be candidates, look at them through the event-driven lens, and articulate a go-to approach to realize the benefits.

Think real-time. Think event-driven.

Step 2: Identify Real Time Candidates

You’ve probably already thought about a bunch of projects, APIs, or candidates which would benefit from being real-time.

What are the real time candidates in your enterprise? The troublesome order management system, or the next generation payments platform that you are building? Would it make better sense to push master data (such as price updates or PLM recipe changes) to downstream applications, instead of polling for it? Is it the possibility of a real time airline loyalty points upgrade upon the scanning of a boarding pass, or real time airport ground operations optimization?

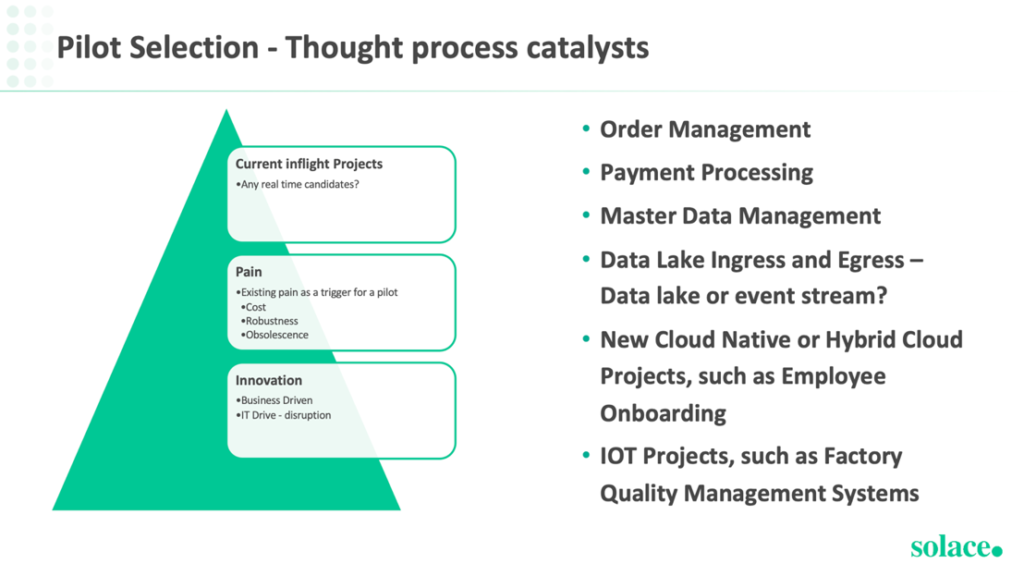

So how do you choose? Look for projects that either need to be transformed to remove pain (brittleness, poor performance, missing functionality) or will have a medium-to-high business impact. Make a shortlist of real time candidates for your event-driven journey. In any case, make sure your first project has the potential to be a quick win that will deliver business value and breathe optimism into your team.

It’s also important to bring in the stakeholders at this early stage because events are often business-driven, so not all your stakeholders are going to be technical. Consider which teams will be impacted by the transformation, and which specific people will need to be involved and buy in to make the project a success from all sides.

Step 3: Build Your Event-Driven Architecture Foundation

And now the tooling. Once the first project is identified, it’s time to think about architecture and tooling. An event-driven architecture depends on decomposing flows into microservices, and putting in place a runtime fabric that lets the microservices talk to each other in a publish/subscribe, one-to-many, distributed fashion.

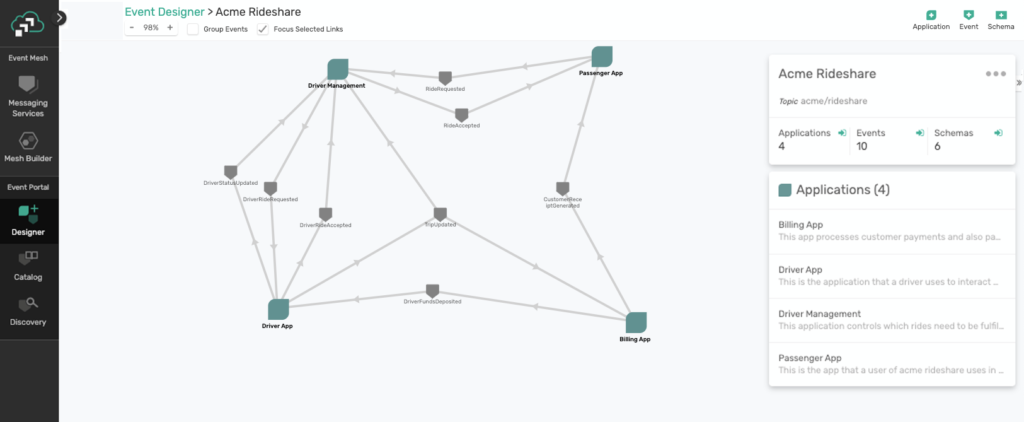

It’s also important to start the design time right, and have tooling to ensure that events can be described and catalogued, and have their relationships visualized.

You’ll want to have an architecture that’s modular enough to meet all of your use cases, and flexible enough to receive data from everywhere that you have data deployed (on premises, private cloud, public cloud, etc.). This is where the event-driven architecture platform comes in with the event mesh runtime.

Having your microservices communicate with each other using REST can lead to a “distributed monolith” that’s as tightly coupled and rigid as a traditional monolithic application. Putting in place even some of the pieces of an event streaming platform will let you build things – starting with your first project – in a way that supports the decoupling and agility you want to achieve.

The following runtime and design time pieces are essential:

- Event Broker: An event broker is the fundamental runtime component that routes events in a publish/subscribe manner, with low latency and guaranteed delivery. Applications and microservices are decoupled from each other and communicate via the event broker. Events are published to the event broker on topics that follow a taxonomy, and are subscribed to by one or more applications, microservices, analytics engines or data lakes. To avoid vendor lock-in, an event broker should use open protocols and APIs. With open standards, you have the flexibility of choosing the appropriate event broker provider over time. Think about the independence TCP/IP gave to customers choosing networking gear – open standards made the internet happen.

- Event Mesh: An event mesh is a network of event brokers that dynamically routes events between applications no matter where they are deployed – on premises, in any cloud, or even at IoT edge locations. Just like the internet is made possible by routers converging routing tables, the event mesh converges topic subscriptions with various optimizations for distributed event streaming.

- Event Portal: An event portal – just like an API portal – is your design and runtime view into your event mesh. An event portal gives architects an easy GUI-based tool to define and design events in a governed manner, and offers them for use by publishers and subscribers. Once you have defined events, microservices or applications can also be designed in the event portal and choose which events they will consume and produce by browsing and searching the event catalog.

- Event Taxonomy: As you start your EDA journey, it’s important to pay attention to topic taxonomy – setting up a topic naming convention early on and governing it. A solid taxonomy is probably the most important design investment you will make, so best not to take short cuts here. Having a good taxonomy will greatly help with event routing, and the conventions will become obvious to application developers. Check out this post for a detailed look at topic hierarchy best practices.

- Organizational Alignment: Event-driven architecture starts small and grows, but over time, the organization needs to evolve so that its teams start thinking in events. This requires leadership buy-in. The ESB team needs to start thinking about choreography, rather than orchestration. The API teams need to start thinking about event-driven APIs, rather than just request/reply.
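The taxonomy point in the list above is easier to appreciate with a small sketch. The Python below assumes a hypothetical six-level topic convention (`domain/entity/verb/version/region/entity_id`) and a simple wildcard scheme where `*` matches exactly one level and a trailing `>` matches one or more remaining levels. Both the level names and the wildcard characters are assumptions for illustration; every broker defines its own wildcard syntax, so check yours before copying this.

```python
from typing import List

# Hypothetical naming convention for this sketch, from most general to most specific.
LEVELS: List[str] = ["domain", "entity", "verb", "version", "region", "entity_id"]


def build_topic(**parts: str) -> str:
    """Assemble a topic in the fixed level order; reject missing, unknown, or malformed levels."""
    missing = [name for name in LEVELS if name not in parts]
    unknown = [name for name in parts if name not in LEVELS]
    if missing or unknown:
        raise ValueError(f"missing levels: {missing}, unknown levels: {unknown}")
    values = [parts[name] for name in LEVELS]
    for value in values:
        if not value or "/" in value or value in ("*", ">"):
            raise ValueError(f"invalid topic level: {value!r}")
    return "/".join(values)


def matches(subscription: str, topic: str) -> bool:
    """'*' matches exactly one level; a trailing '>' matches one or more remaining levels."""
    sub_levels = subscription.split("/")
    topic_levels = topic.split("/")
    for i, sub in enumerate(sub_levels):
        if sub == ">" and i == len(sub_levels) - 1:
            return len(topic_levels) > i
        if i >= len(topic_levels):
            return False
        if sub != "*" and sub != topic_levels[i]:
            return False
    return len(sub_levels) == len(topic_levels)


topic = build_topic(
    domain="retail", entity="order", verb="created",
    version="v1", region="emea", entity_id="o-42",
)

all_v1_order_created = matches("retail/order/created/v1/>", topic)
any_order_event_in_emea = matches("retail/order/*/v1/emea/*", topic)
payments_only = matches("retail/payment/>", topic)
```

Because the levels are ordered from general to specific, a consumer can subscribe broadly (all order events) or narrowly (one region) without the publisher doing anything differently. That is why the convention is worth governing from the start.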

Step 4: Pick Pilot Application and Event Catalog

The next step for implementing your event-driven architecture is to determine which event flow to get started with first. Essentially, an event flow is a business process that’s translated to a technical process. The event flow is the way an event is generated, sent through your broker, and eventually consumed.

Getting started with a pilot project is the best way to learn through experiment before you scale.

With the pilot, as you identify events and the event flow, the event catalog will also automatically start taking shape. Whether you maintain the catalog in a simple spreadsheet or in an event portal, the initial event catalog also serves as a starting point of reusable events which applications in future will be able to consume off the event mesh.
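If you start with something as simple as a spreadsheet, the catalog is really just a table of events with their topics, owners, producers and consumers. Here is a minimal Python sketch of that idea. The `CatalogEntry` fields, the `EventCatalog` class, and the sample events are assumptions for illustration only, not the data model of any particular event portal.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class CatalogEntry:
    """One row of a hypothetical event catalog."""
    name: str
    topic: str
    owner: str
    payload_fields: List[str]
    producers: Set[str] = field(default_factory=set)
    consumers: Set[str] = field(default_factory=set)


class EventCatalog:
    def __init__(self) -> None:
        self._entries: Dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        """Add a new event; duplicates are rejected so that names stay governed."""
        if entry.name in self._entries:
            raise ValueError(f"event already catalogued: {entry.name}")
        self._entries[entry.name] = entry

    def add_consumer(self, event_name: str, app: str) -> None:
        """Record that an application consumes an existing event."""
        self._entries[event_name].consumers.add(app)

    def search(self, keyword: str) -> List[str]:
        """Find events whose name or topic contains the keyword (case-insensitive)."""
        kw = keyword.lower()
        return sorted(
            name for name, e in self._entries.items()
            if kw in name.lower() or kw in e.topic.lower()
        )

    def reused_events(self) -> List[str]:
        """Events consumed by more than one application."""
        return sorted(n for n, e in self._entries.items() if len(e.consumers) > 1)


catalog = EventCatalog()
catalog.register(CatalogEntry(
    name="OrderCreated", topic="retail/order/created/v1", owner="order-team",
    payload_fields=["order_id", "amount"], producers={"order-service"},
))
catalog.register(CatalogEntry(
    name="PaymentAuthorized", topic="retail/payment/authorized/v1", owner="payments-team",
    payload_fields=["order_id", "approved"], producers={"payment-service"},
))

# The pilot wires up the first consumer; a later project reuses the same event.
catalog.add_consumer("OrderCreated", "payment-service")
catalog.add_consumer("OrderCreated", "loyalty-service")
catalog.add_consumer("PaymentAuthorized", "shipping-service")

order_related = catalog.search("order")
reused = catalog.reused_events()
```

Even this much structure lets a new team answer "does an event for this already exist?" before building a new one, which is where the reuse comes from.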

You want to choose a flow that makes the most sense for your current modernization or pain reduction goals. An in-flight project or an upcoming transformation makes an ideal candidate, whether it’s for innovation reasons or technical debt reduction – performance, robustness, cloud adoption. The goal is for it to become a reference for future implementations. Pick a flow which can be your quick win.

Step 5: Decompose the Event Flow into Asynchronous, Event-Driven Microservices

Once pilot flows have been identified and an initial event catalog is taking shape, the next step is to start an event-driven design by decomposing the business flow into event-driven microservices, and identifying events in the process.

Decomposing an event flow into microservices reduces the total effort required to ingest event sources, as each microservice will handle a single aspect of the total event flow. New business logic can be built using microservices, while existing applications – SAP, Mainframe, Custom apps – can be event enabled with adapters.

There are two ways to manage your microservices in your event flows: orchestration and choreography. With orchestration, your microservices work in a call-and-response (request/reply) fashion, and they’re tightly coupled, i.e. wired directly into each other and highly dependent on each other. With event routing choreography, microservices are reactive (responding to events as they happen) and loosely coupled, which means that if one application fails, business services not dependent on it can keep on working while the issue is resolved. You can read all about the technical differences between orchestration and choreography.
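Here is a small Python sketch of what choreography looks like in practice, including the failure isolation described above. Everything in it is hypothetical: the `Broker` class is a toy in-process stand-in, and the service names and topics are invented for the example. Each service only knows which events it consumes and which it emits, and a failing consumer does not stop the rest of the flow.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List, Tuple

Handler = Callable[[dict], None]


class Broker:
    """Toy broker that isolates consumers: one failing handler does not block the others."""

    def __init__(self) -> None:
        self._subs: DefaultDict[str, List[Tuple[str, Handler]]] = defaultdict(list)
        self.failures: List[Tuple[str, str]] = []

    def subscribe(self, topic: str, service: str, handler: Handler) -> None:
        self._subs[topic].append((service, handler))

    def publish(self, topic: str, event: dict) -> None:
        for service, handler in list(self._subs[topic]):
            try:
                handler(event)
            except Exception:
                # Record the failure and keep delivering to the remaining subscribers.
                self.failures.append((service, topic))


broker = Broker()
shipped: List[str] = []


def loyalty_service(event: dict) -> None:
    # Simulates an application that is currently down.
    raise RuntimeError("loyalty service unavailable")


def payment_service(event: dict) -> None:
    # Reacts to an order and emits its own event; it never calls shipping directly.
    broker.publish("payment/authorized", {"order_id": event["order_id"]})


def shipping_service(event: dict) -> None:
    shipped.append(event["order_id"])


broker.subscribe("order/created", "loyalty", loyalty_service)
broker.subscribe("order/created", "payment", payment_service)
broker.subscribe("payment/authorized", "shipping", shipping_service)

broker.publish("order/created", {"order_id": "o-1"})
```

Notice that there is no central function listing the steps in order. The flow emerges from the subscriptions, so the order still ships even though the loyalty consumer failed.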

This modeling of event driven processes can be done manually at first or with an event portal, where you can visualize and choreograph the microservices.

In this step, you’ll also need to identify which steps have to occur synchronously, and which ones can be asynchronous. Synchronous steps are the ones where the application or API invoking the flow is blocked, waiting for a response. Asynchronous steps can happen after the fact and often in parallel – such as logging, audit, or writing to a data lake. In other words, applications which are fine with being “eventually consistent” can be dealt with asynchronously. The events float around, and microservices choreography determines how they get processed. Because of these key distinctions, you should keep the synchronous parts of the flow separate from the asynchronous parts.
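The split between synchronous and asynchronous steps can be sketched with Python’s `asyncio`. This is only an illustration under assumptions: `authorize_payment` stands in for a request/reply step the caller must wait on, and the audit and data lake workers stand in for eventually consistent consumers. None of these names come from a real system.

```python
import asyncio
from typing import List


async def authorize_payment(order: dict) -> dict:
    """Synchronous step from the caller's point of view: the caller waits for this reply."""
    await asyncio.sleep(0)  # stands in for a request/reply round trip
    return {"order_id": order["order_id"], "approved": order["amount"] <= 1000}


async def side_effect_worker(name: str, queue: asyncio.Queue, sink: List[str]) -> None:
    """Asynchronous consumer (audit, data lake, ...) that processes events after the fact."""
    while True:
        event = await queue.get()
        sink.append(f"{name}:{event['order_id']}")
        queue.task_done()


async def handle_order(order: dict, queues: List[asyncio.Queue]) -> dict:
    reply = await authorize_payment(order)  # the only part the caller blocks on
    for queue in queues:
        queue.put_nowait(reply)             # fire and forget; processed later
    return reply


async def main():
    sink: List[str] = []
    audit_q: asyncio.Queue = asyncio.Queue()
    lake_q: asyncio.Queue = asyncio.Queue()
    workers = [
        asyncio.create_task(side_effect_worker("audit", audit_q, sink)),
        asyncio.create_task(side_effect_worker("data-lake", lake_q, sink)),
    ]

    reply = await handle_order({"order_id": "o-1", "amount": 250}, [audit_q, lake_q])
    effects_at_reply = len(sink)  # side effects have not been processed yet

    # Only the demo waits here; a real caller would already have moved on.
    await audit_q.join()
    await lake_q.join()
    for worker in workers:
        worker.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return reply, effects_at_reply, sorted(sink)


reply, effects_at_reply, effects = asyncio.run(main())
```

The caller gets its answer as soon as the payment step returns, while audit and data lake writes catch up on their own schedule. Keeping those two paths separate in the design is what keeps slow side effects from adding latency to the customer-facing response.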

With your initial project chosen, your event mesh built, and a pilot event flow that you can break down into microservices, you have to get your event sources working with your event broker. Read more about that in this blog post, How to Tap into the 3 Kinds of Event Sources.

Step 6: Get a Quick Win

Once the design is done and the first application gets delivered as an event native application, the event catalog also starts to get populated.

Getting a quick win with the event catalog as a main deliverable is just as important as delivering the business logic itself, because the catalog drives innovation and reuse.

Hopefully with the ability to do more things in real time, you can demonstrate agility and responsiveness of applications, which in turn leads to a better customer experience. With stakeholder engagement, you can help transform the whole organization!

Step 6+1: Rinse and Repeat – Scale and Snowball!

Now that you have your template for becoming event-driven and hopefully your first quick-win in place, you can rinse and repeat. Go ahead and choose your next event flow!

From here, you can walk through the steps again for the next few projects. As you go, the event catalog will be populated with more and more events. Existing events will start to get reused by new consumers and producers. A richer catalog will open up more and more opportunities for real time processing and insights.

Culture change is a constant but gets easier with showcasing success. The integration/middleware team might own the event catalog and event mesh while the application and LOB teams use it/contribute to it. LOB-specific event catalogs and localized event brokers are also desirable patterns, depending on how federated or centralized the organization’s technology teams and processes are.

As you scale, more and more applications produce and consume events, often starting with reusing existing events. That is how the event driven journey snowballs as it scales!

If you’re looking for a deeper dive into the steps I have outlined here, download my free guide, Architect’s Guide to Implementing Event-Driven Architecture.

For more information on digital transformation and to assess your readiness to implement event-driven architecture, check out this Maturity Model for Event-Driven Architecture from Gartner, our Complete Guide to Event-Driven Architecture, and these statistics around event-driven architecture.


Sumeet Puri
