Two of the most widely used messaging services in the Azure Cloud platform are Azure Event Hubs and Azure Service Bus. Architects and developers take advantage of these Azure messaging services to scale and extend their applications into more advanced cloud services in Azure, such as machine learning, real-time data analytics, and data science.

Azure Event Hubs is a fully managed, real-time data ingestion service; it is often used to stream millions of events from multiple sources and to build multiple data pipelines. If the solution is specific to IoT devices, there is IoT Hub, which is the same under the covers.

Azure Service Bus is a highly reliable cloud messaging service that connects applications with services. It offers asynchronous operations between server and clients, as well as other messaging capabilities such as pub/sub with queues and topics.

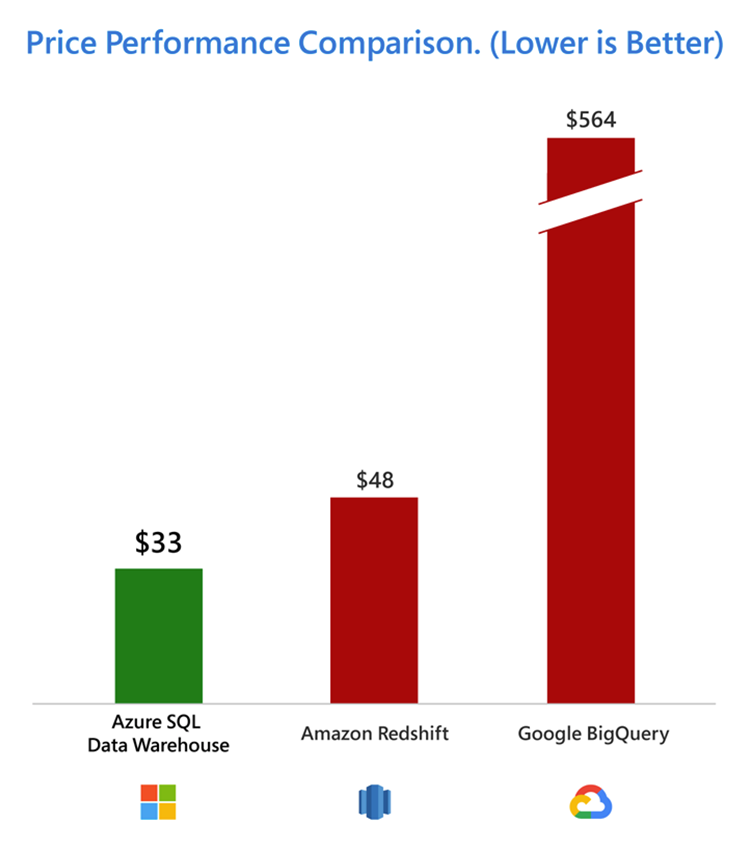

A recent GigaOm study found that Azure SQL Data Warehouse outperforms the competition by up to 14x, and that Azure is up to 94 percent cheaper.

While benefits of the Azure platform surely extend to the rest of the analytic stack (data lake storage, data science capabilities, machine learning algorithms, etc.), what if the data you wish to access/analyze comes from legacy systems or is located on another cloud platform (GCP or AWS)?

How an Event Mesh complements Azure Messaging Services

It is wishful thinking to assume that all cloud projects and their applications are new and that data is readily available anywhere. Quite often, data comes from legacy systems or third-party applications whose access is tightly restricted, whether by specific protocols or by extra layers of information that need to be extracted.

An event mesh is a network of event brokers that manages event-driven applications across on-premises, hybrid, multi-cloud, and IoT environments. It is used by large and small global enterprises that want greater governance and control of data across a variety of environments. An application's data may only be allowed to travel over certain protocols, or may be restricted further by security policies imposed by the information technology group. An event mesh not only handles the multiple protocols, but also governs the flow of information in and out with precise permissions and control. You can even reduce cloud egress costs with an event mesh that uses compression and WAN optimization. The key is that applications and systems subscribe only to the information they need and nothing extra, which brings down the overall cost of operating in the cloud.

In addition, if you wish to access data on another cloud platform like Google Cloud Platform or Amazon Web Services, an event mesh that acts as the bridge between multiple cloud platforms and that can easily collect and organize information is likely the answer.

Governance of Data, Security and Control

Let’s say you want to control who gets access to the data, how often they get it, and what security is in place to prevent any application from gaining more access than its function requires. In a properly designed event mesh, the separation of topics and queues and the tightly governed clusters of event brokers provide a solid foundation for event-driven applications that are constantly changing, growing, and demanding better integration into cloud services.

With rich and advanced topic structures, event-driven applications and extensions to cloud services are managed and controlled, ensuring timely, “just enough” access to information.

Using PubSub+ to Manage Data Flows and Achieve Flexibility and Growth

A centralized event mesh – created using Solace PubSub+ event brokers for high value governance, design, and distribution of event-driven applications – facilitates the flow of data into the Azure ecosystem for further machine learning and artificial intelligence analysis.

An event mesh will allow you to:

- Send messages already in the mesh to any Azure Event Hubs or Service Bus instance

- Have greater control over what topics of information you want to send to Azure

- Stop worrying about how each application connects to Azure individually; the backend is taken care of

- Send an event once and fan out to multiple recipients (who subscribe to events they are interested in)

- Save on cloud egress costs through compression and WAN optimization
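The topic filtering and fan-out in the list above can be sketched in a few lines of Python. This is only an illustration, assuming a simplified form of Solace-style wildcards (`*` as a prefix wildcard within one level, `>` as a trailing wildcard for one or more levels); a real broker applies more rules than this. The subscriber names and topics are hypothetical.

```python
def topic_matches(subscription, topic):
    """Return True if a topic matches a subscription (simplified wildcard rules)."""
    sub_levels = subscription.split("/")
    topic_levels = topic.split("/")
    for i, sub in enumerate(sub_levels):
        if sub == ">" and i == len(sub_levels) - 1:
            # Trailing '>' matches one or more remaining levels.
            return len(topic_levels) > i
        if i >= len(topic_levels):
            return False
        if sub.endswith("*"):
            # '*' at the end of a level matches any suffix within that level.
            if not topic_levels[i].startswith(sub[:-1]):
                return False
        elif sub != topic_levels[i]:
            return False
    return len(sub_levels) == len(topic_levels)


def fan_out(topic, subscriptions):
    """List every subscriber with at least one subscription matching the topic."""
    return [
        name
        for name, subs in subscriptions.items()
        if any(topic_matches(s, topic) for s in subs)
    ]


# Hypothetical subscribers: two Azure-bound consumers and one unrelated consumer.
subscriptions = {
    "event-hubs-consumer": ["orders/>"],
    "service-bus-consumer": ["orders/*/created"],
    "audit-consumer": ["payments/>"],
}

recipients = fan_out("orders/eu/created", subscriptions)
```

The event is published once, and only the subscribers whose subscriptions match receive it, which is what keeps unneeded data from flowing into Azure.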

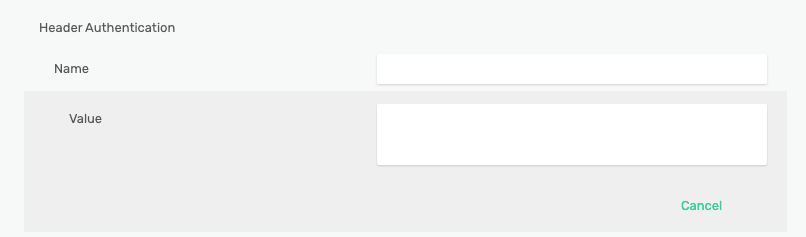

Solace PubSub+ Event Broker integrates seamlessly with Azure messaging services using Azure Shared Access Signatures (SAS). For outgoing REST messaging from PubSub+, users can set up REST delivery points and stream this information to Azure effectively and securely.

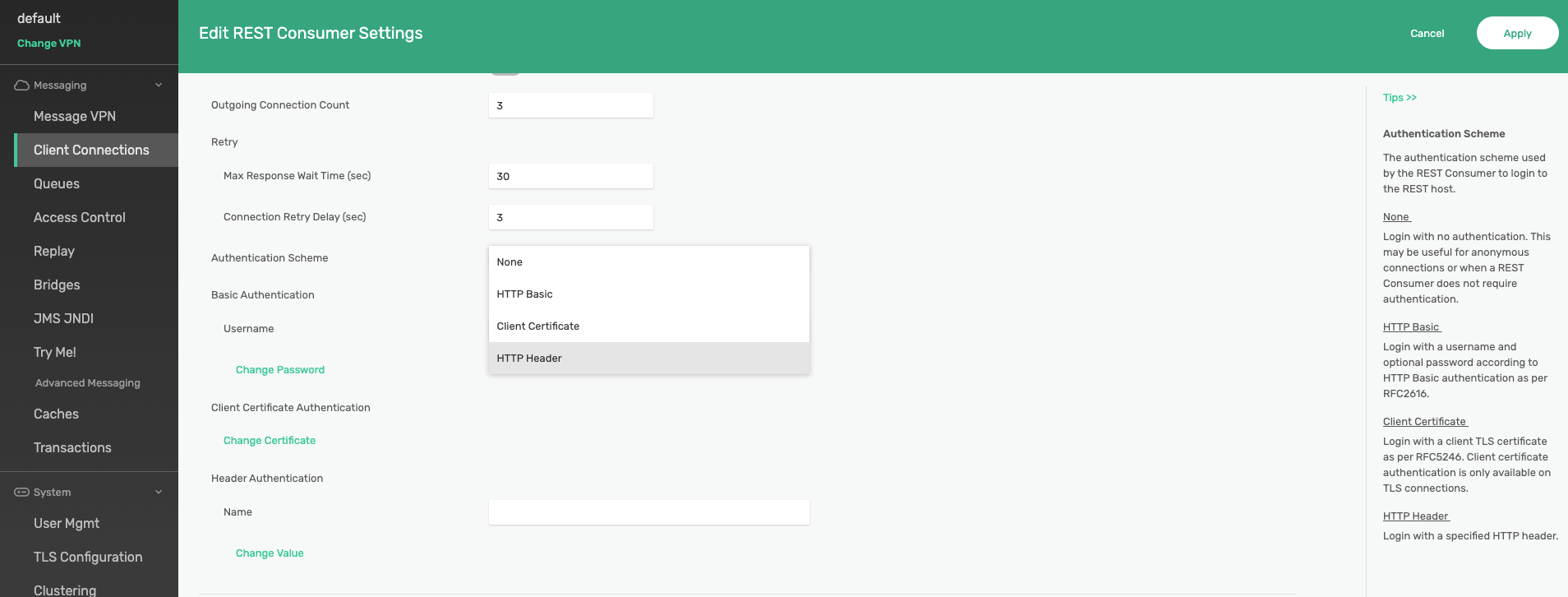

All you have to do is provide the name and value of the HTTP authentication header (shown below) and you are all set.

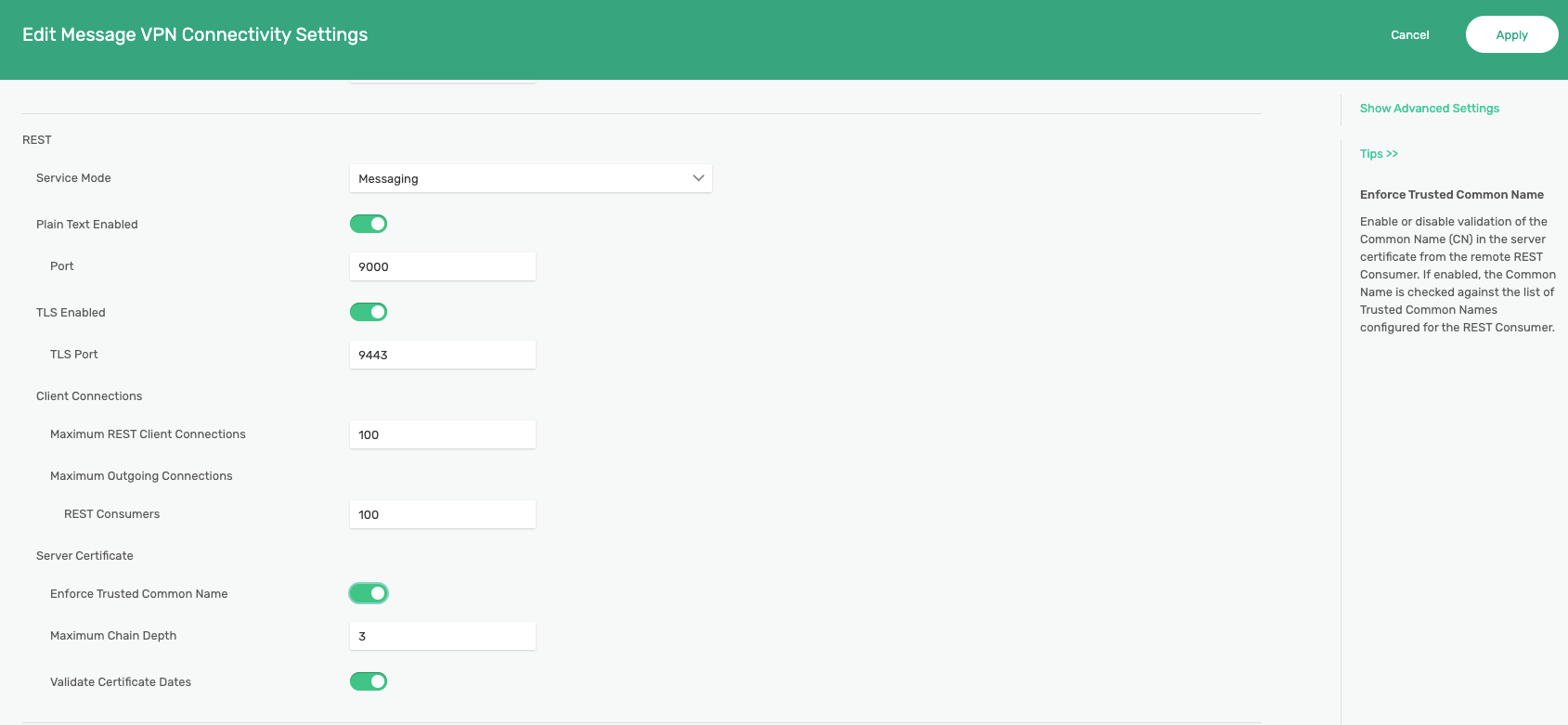
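If you need to produce the header value yourself, the following is a minimal sketch of generating a Shared Access Signature token in Python, following the general SAS scheme (an HMAC-SHA256 signature over the URL-encoded resource URI and an expiry time). The namespace, hub name, policy name, and key are placeholders, and the `now` parameter is an assumption added here only to make the example reproducible.

```python
import base64
import hashlib
import hmac
import time
import urllib.parse


def generate_sas_token(resource_uri, key_name, key, ttl_seconds=3600, now=None):
    """Build a SAS token string for the given resource URI and access policy."""
    issued_at = time.time() if now is None else now
    expiry = int(issued_at + ttl_seconds)
    encoded_uri = urllib.parse.quote_plus(resource_uri)
    # The string to sign is the encoded URI, a newline, and the expiry time.
    string_to_sign = f"{encoded_uri}\n{expiry}".encode("utf-8")
    digest = hmac.new(key.encode("utf-8"), string_to_sign, hashlib.sha256).digest()
    signature = urllib.parse.quote_plus(base64.b64encode(digest).decode("ascii"))
    return (
        f"SharedAccessSignature sr={encoded_uri}"
        f"&sig={signature}&se={expiry}&skn={key_name}"
    )


# Placeholder values; substitute your own namespace, entity, policy, and key.
token = generate_sas_token(
    "https://example-ns.servicebus.windows.net/example-hub",
    "example-policy",
    "not-a-real-key",
    now=0,
)

# Header name and value to supply as the HTTP authentication header.
header_name, header_value = "Authorization", token
```

Because the token carries an expiry time, a long-lived integration would need either a generous time-to-live or a process for refreshing the configured header value.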

Additional Options for Transport Layer Security

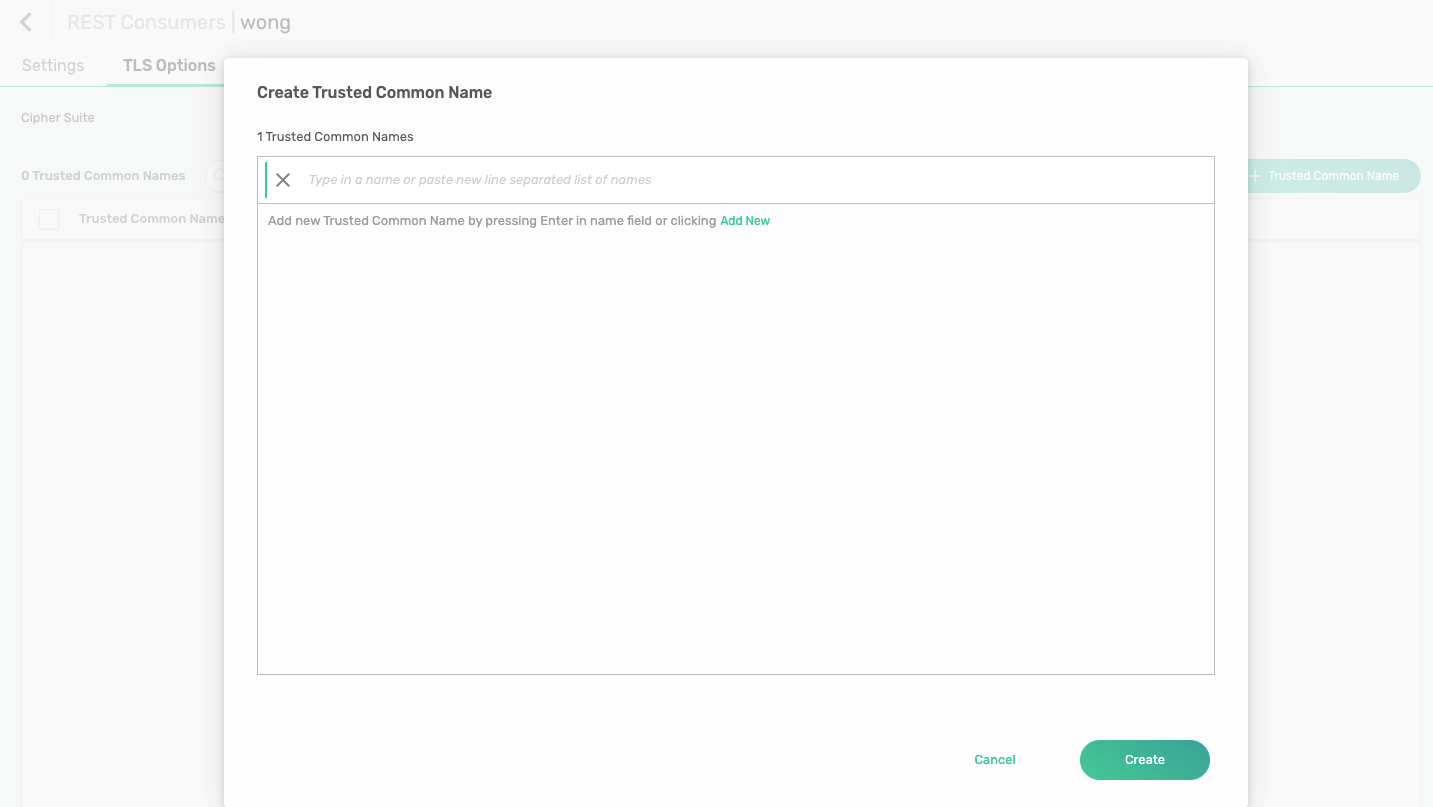

PubSub+ supports TLS connections for these REST consumers. Users have the option to validate the hostname using the common name (CN) or subject alternative name (SAN).

Customers can choose to enable or disable validation of the common name in the server certificate from the remote REST consumer. If enabled, the common name is checked against the list of trusted common names configured for the REST consumer.
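To make the common name check concrete, here is a rough Python sketch of the logic described above. It is not the broker's implementation; it assumes a certificate in the dictionary shape returned by Python's `ssl.SSLSocket.getpeercert()`, uses exact string comparison, and does not cover SAN matching. The function names and the trusted names are hypothetical.

```python
def common_name(cert):
    """Extract the commonName from a getpeercert()-style certificate dict."""
    for rdn in cert.get("subject", ()):
        for key, value in rdn:
            if key == "commonName":
                return value
    return None


def passes_common_name_check(cert, trusted_common_names, validate_common_name=True):
    """Apply the check only when validation is enabled for this consumer."""
    if not validate_common_name:
        # Validation disabled: the common name is not inspected at all.
        return True
    return common_name(cert) in trusted_common_names


# Hypothetical server certificate presented by a remote REST consumer.
server_cert = {
    "subject": (
        (("countryName", "US"),),
        (("commonName", "example-ns.servicebus.windows.net"),),
    ),
}

accepted = passes_common_name_check(
    server_cert, ["example-ns.servicebus.windows.net"]
)
```

With validation enabled, a certificate whose common name is missing from the trusted list is rejected, so the list has to be kept in step with the endpoints you actually connect to.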

Take Full Advantage of Azure AI and Machine Learning Capabilities with PubSub+ Event Mesh

Of course, the main goal of harnessing the extended Azure capabilities with an event mesh is data governance and control. Handling data that comes from on-premises or multi-cloud sources is an added bonus. The full advantage of integrating an event mesh with Azure messaging services is that you get to control precisely what data goes into Azure. Cost savings are one benefit; control and governance are another. There is a fine balance between pushing everything to the cloud and letting AI and ML discover data, and applying Azure’s machine learning capabilities only to specific areas of interest. It would not be ideal to get billed for massive data storage in Azure only to find out that the insights aren’t worth it, or that this model of data ingress is too expensive to maintain.

With a centralized approach of integrating an event mesh with Azure messaging services (such as Azure Event Hubs, IoT Hub, and Azure Service Bus), Solace PubSub+ is your gateway to greater growth and flexibility.


Douglas Wong
